A Practical Guide to Orchestration in Cloud Computing

Cloud orchestration is the process of automating the configuration, coordination, and management of interconnected systems and services in a cloud environment. It transforms a collection of individual automated tasks—like provisioning servers, configuring networks, and deploying applications—into a single, cohesive workflow.

Think of it as the central nervous system for your cloud operations. An orchestrator doesn’t perform every task itself; instead, it directs other automation tools and services, ensuring they execute in the correct sequence, handle dependencies, and respond to failures. This turns disparate cloud resources into a unified, functional system.

What Cloud Orchestration Actually Is

To grasp orchestration, it’s essential to distinguish it from automation. Automation executes a single, discrete task without human intervention. Examples include launching a virtual machine or running a daily backup script. Automation is tactical, efficient, and narrow in scope.

Orchestration is the strategic layer above automation. It strings together multiple automated tasks to create an end-to-end workflow. It manages the entire lifecycle of a complex service—from initial provisioning and dynamic scaling to self-healing and eventual decommissioning.

Why Orchestration is a Core Requirement

Modern IT environments are complex, hybrid ecosystems spanning public clouds, private data centers, and edge devices. Manual management of these distributed systems is no longer feasible; it’s a direct impediment to scale and reliability. As organizations increasingly rely on containerized microservices and complex data pipelines, a centralized orchestration strategy becomes non-negotiable.

This is particularly critical for data platform modernization. Effective orchestration is what enables complex AI and machine learning pipelines on platforms like Snowflake or Databricks to function reliably. For any CIO or data leader, orchestration isn’t a technical detail; it’s the engine of operational efficiency and scalable governance. To see how these components fit together, explore the architecture of the modern data stack in our article.

Orchestration moves beyond simple “if-then” logic. It introduces intelligence into workflows, enabling systems to make context-aware decisions based on environmental state, service dependencies, and defined business objectives.

The Business Impact of Effective Orchestration

A well-executed cloud orchestration strategy delivers measurable business value by addressing fundamental operational challenges. The goal isn’t just technology for its own sake; it’s about enabling speed, consistency, and resilience at scale.

Key organizational benefits include:

- Accelerated Innovation: Automating the deployment and management of complex applications allows development teams to release features faster and with significantly less risk.

- Operational Efficiency: Orchestration minimizes manual intervention, freeing engineers from routine maintenance to focus on higher-value problem-solving. It also drastically reduces human error, a leading cause of system outages.

- Robust Governance and Security: It enables the consistent enforcement of security, compliance, and configuration policies across all environments, ensuring resources are deployed correctly and securely every time.

- Optimized Resource Utilization: Orchestration tools can automatically scale resources up or down based on real-time demand, eliminating over-provisioning and reducing cloud spend.

Orchestration vs Choreography: The Core Architectural Decision

When designing a distributed system, one of the most critical architectural decisions is how services will interact. This choice fundamentally comes down to two patterns: orchestration and choreography.

This decision has long-term implications for a system’s scalability, resilience, and maintainability.

Orchestration employs a central controller—the orchestrator—that explicitly directs the actions of each service. This is a top-down, command-and-control model where a single component manages the end-to-end workflow logic.

Choreography, in contrast, is a decentralized model. There is no central controller. Instead, services operate independently, publishing events about their state changes. Other services subscribe to these events and react accordingly, without any central coordination.

The Power of Centralized Control: Orchestration

The primary advantage of orchestration is its clarity and direct control. With business logic centralized in one place, the entire workflow is easier to understand, monitor, and debug.

This explicit control is critical for processes where the sequence of operations is non-negotiable.

Benefits of an orchestrated approach:

- Explicit Control: The orchestrator defines the exact sequence of operations, making the workflow predictable and deterministic.

- Simplified Monitoring: The state of the entire process can be observed from a single point. If a step fails, the point of failure is immediately identifiable.

- Transactional Integrity: It is well-suited for complex, long-running processes that must be treated as a single transaction, such as financial processing or provisioning a full infrastructure stack (database, network, servers).

Orchestration is the standard pattern for stateful data pipelines and Infrastructure as Code (IaC) deployments, where strict ordering and dependency management are paramount.
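As a rough illustration of centralized control, the sketch below shows a toy orchestrator that runs provisioning steps in a fixed order and, if one fails, undoes the completed steps in reverse. The `run_workflow` helper, the step names, and the simulated failure are all hypothetical stand-ins for real provisioning calls; this is not how any particular tool implements it.

```python
from typing import Callable

# Each step is (name, do, undo). All three steps here are illustrative stubs.
Step = tuple[str, Callable[[], None], Callable[[], None]]


def run_workflow(steps: list[Step]) -> list[str]:
    """Run steps in order; on failure, undo completed steps in reverse."""
    log: list[str] = []
    completed: list[Step] = []
    for step in steps:
        name, do, _ = step
        try:
            do()
        except Exception as exc:
            log.append(f"{name}: failed ({exc})")
            for done_name, _, undo in reversed(completed):
                undo()
                log.append(f"{done_name}: rolled back")
            return log
        completed.append(step)
        log.append(f"{name}: ok")
    return log


def noop() -> None:
    """Stand-in for a real provisioning or teardown call."""


def fail_servers() -> None:
    raise RuntimeError("quota exceeded")  # simulated provider error


log = run_workflow([
    ("network", noop, noop),
    ("database", noop, noop),
    ("servers", fail_servers, noop),
])
```

Because the sequence and the failure handling live in one place, the log reads as a single story of what ran, what failed, and what was undone.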

The Agility of Decentralized Events: Choreography

Where orchestration provides control, choreography offers superior resilience and scalability. By decoupling services, it eliminates single points of failure. If one service becomes unavailable, others can continue to operate and process its events once it recovers.

This loose coupling also empowers development teams to build and deploy their services independently, accelerating development cycles.

Choreography excels in systems where flexibility and resilience are top priorities. Each component is a self-contained unit that doesn’t need to know about the others, allowing the entire system to evolve and scale massively without hitting a central bottleneck.

This event-driven architecture is common in large-scale microservices and real-time platforms. In an e-commerce system, for instance, a “payment successful” event can be broadcast. The shipping, inventory, and notification services can all consume this event and perform their functions concurrently, without direct coordination.
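The e-commerce example can be sketched with a minimal in-memory event bus. The `EventBus` class, the event name, and the handlers are assumptions made for illustration; a production system would use a message broker, and the consumers would run concurrently rather than one after another as they do here.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """Hypothetical in-memory bus standing in for a real message broker."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        # The publisher has no knowledge of who consumes the event.
        for handler in self._subscribers[event_type]:
            handler(payload)


bus = EventBus()
actions: list[str] = []

# Each service registers its own reaction; none of them calls another.
bus.subscribe("payment_successful", lambda e: actions.append(f"ship order {e['order_id']}"))
bus.subscribe("payment_successful", lambda e: actions.append(f"reserve stock for {e['order_id']}"))
bus.subscribe("payment_successful", lambda e: actions.append(f"email receipt for {e['order_id']}"))

bus.publish("payment_successful", {"order_id": "A-1001"})
```

Adding a fourth consumer means one more `subscribe` call and no change to the payment service, which is the loose coupling the pattern trades for end-to-end visibility.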

Making the Right Architectural Choice

The choice between these patterns is a trade-off between control and flexibility. One is not inherently better; the decision depends entirely on the specific requirements of the use case.

To clarify the trade-offs, here is a direct comparison.

Orchestration vs Choreography: A Practical Comparison

| Criterion | Orchestration (The Conductor) | Choreography (The Dancers) |
|---|---|---|
| Control Flow | Centralized, explicit commands from a single point. | Decentralized, services react to events from others. |
| Coupling | Tightly coupled. Services are aware of the central orchestrator. | Loosely coupled. Services are independent and unaware of each other. |
| Scalability | Can be limited by the central orchestrator, which may become a bottleneck. | Highly scalable, as there is no central point of failure or control. |
| Resilience | A failure in the orchestrator can halt the entire workflow. | More resilient; failure of one service does not stop others from functioning. |
| Complexity | Simpler to understand and debug the overall workflow logic. | Can be harder to monitor and debug end-to-end processes across services. |
| Ideal Use Cases | Stateful data pipelines, financial transactions, infrastructure provisioning (IaC). | Large-scale microservices, IoT platforms, real-time event processing. |

In practice, many robust systems employ a hybrid approach. An orchestration tool might manage a critical, sequential data pipeline, while the microservices within that pipeline communicate with each other using choreographed events.

Understanding the fundamental differences between orchestration and choreography is essential for designing systems that are not only functional today but also scalable, resilient, and adaptable to future business requirements.

Where Orchestration Delivers Practical Value

The true value of cloud orchestration becomes clear when it solves complex, real-world business problems. Organizations adopt it not for technological novelty, but for tangible gains in speed, reliability, and operational stability.

Market projections point the same way. One estimate puts the global cloud orchestration market at US$22.3 billion in 2025, growing to US$74.1 billion by 2032, a compound annual growth rate of 18.6%. This growth reflects the need to automate complex workflows in hybrid and multi-cloud environments.
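As a quick sanity check on those figures, the compound-growth arithmetic can be reproduced in a few lines. The helper names `cagr` and `project` are illustrative, and the seven-year span (2025 to 2032) is taken from the dates quoted above.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start and end value."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` annually for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted in the text: US$22.3B (2025) to US$74.1B (2032) at 18.6%.
implied_rate = cagr(22.3, 74.1, 7)
projected_2032 = project(22.3, 0.186, 7)
```

Both directions agree with the quoted numbers to within rounding: the implied rate lands close to 18.6%, and compounding 18.6% for seven years lands close to US$74.1 billion.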

For a data leader managing a platform migration to Snowflake or Databricks, orchestration is the backbone of the project. It provides the necessary structure to prevent the intricate data pipelines that power modern analytics and AI from becoming unmanageable.

Use Case 1: Automated Application Deployment

A primary application of orchestration is within the CI/CD (Continuous Integration/Continuous Deployment) pipeline. Manual deployment of microservices-based applications is slow, error-prone, and a significant bottleneck to innovation.

Container orchestrators like Kubernetes, typically combined with a CI system and GitOps tools like Argo CD, automate this entire workflow.

- Code-to-Cluster Automation: When a developer pushes code, the orchestrator initiates a sequence: building a container, executing automated tests, and deploying the new version to a staging environment with zero manual intervention.

- Intelligent Rollout Strategies: Orchestration enables advanced deployment patterns like canary releases. A new version is deployed to a small subset of users, and the orchestrator monitors performance metrics. If stable, the rollout proceeds; if not, it’s halted.

- Automated Rollbacks: If a deployment introduces issues like increased error rates or latency, the system automatically rolls back to the last known stable version, minimizing downtime and business impact.

This level of automation fundamentally transforms application delivery by eliminating manual handoffs, enforcing consistency, and enabling teams to deploy features with greater velocity and confidence.
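The canary and rollback logic described above can be sketched as a simple decision function. The `Metrics` fields, the thresholds, and the traffic stages are assumptions chosen for illustration; real rollout controllers expose their own analysis configuration.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    error_rate: float       # fraction of failed requests, 0.0 to 1.0
    p95_latency_ms: float


def canary_decision(baseline: Metrics, canary: Metrics,
                    max_error_increase: float = 0.01,
                    max_latency_ratio: float = 1.2) -> str:
    """Return 'promote' or 'rollback' by comparing the canary to the baseline."""
    if canary.error_rate - baseline.error_rate > max_error_increase:
        return "rollback"
    if canary.p95_latency_ms > baseline.p95_latency_ms * max_latency_ratio:
        return "rollback"
    return "promote"


def rollout(baseline: Metrics, canary_samples: list[Metrics],
            stages: tuple[int, ...] = (5, 25, 50, 100)) -> str:
    """Shift traffic in stages, checking canary metrics at each stage."""
    for percent, canary in zip(stages, canary_samples):
        if canary_decision(baseline, canary) == "rollback":
            return f"rolled back at {percent}%"
    return "promoted to 100%"


baseline = Metrics(error_rate=0.01, p95_latency_ms=200)
healthy = Metrics(error_rate=0.012, p95_latency_ms=210)
degraded = Metrics(error_rate=0.05, p95_latency_ms=210)

good_release = rollout(baseline, [healthy, healthy, healthy, healthy])
bad_release = rollout(baseline, [healthy, degraded, healthy, healthy])
```

In this sketch the bad release is stopped at the second stage, so only a quarter of traffic ever sees the regression.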

Use Case 2: Managing Complex Data Pipelines

Modern data stacks, particularly those supporting AI and machine learning, depend on complex sequences of operations: data ingestion, cleaning, transformation, loading into a data warehouse, model training, and serving. A failure at any step can compromise the entire pipeline.

Workflow orchestrators like Apache Airflow act as the central control plane for these data operations.

A data orchestrator functions as the project manager for data workflows. It doesn’t perform the data processing itself but ensures every task—from ingestion scripts to model training jobs—executes in the correct order, with proper dependency management and error handling.

For example, a model training job cannot begin until its upstream data transformation tasks have successfully completed and been validated. An orchestrator enforces this dependency, automatically retrying failed tasks before alerting a data engineer. This discipline is essential for building reliable, production-grade data pipelines. For a deeper analysis, see our guide on data orchestration platforms.
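A minimal sketch of that behavior, using only the Python standard library rather than Airflow itself: tasks run in dependency order, a failed task is retried, and if retries run out the pipeline stops and raises an alert instead of running downstream work. The task names, the `run_pipeline` helper, and the simulated failures are hypothetical.

```python
from graphlib import TopologicalSorter
from typing import Callable


def run_pipeline(tasks: dict[str, Callable[[], None]],
                 upstream: dict[str, set[str]],
                 max_retries: int = 2) -> list[str]:
    """Run tasks in dependency order; retry failures, then stop and alert."""
    events: list[str] = []
    for name in TopologicalSorter(upstream).static_order():
        for attempt in range(1, max_retries + 2):
            try:
                tasks[name]()
                events.append(f"{name}: success on attempt {attempt}")
                break
            except Exception:
                events.append(f"{name}: attempt {attempt} failed")
        else:
            # Every attempt failed: alert and do not run downstream tasks.
            events.append(f"ALERT: {name} exhausted retries; downstream tasks skipped")
            break
    return events


calls = {"transform": 0}


def flaky_transform() -> None:
    calls["transform"] += 1
    if calls["transform"] == 1:
        raise RuntimeError("transient warehouse timeout")  # simulated


def always_fail() -> None:
    raise RuntimeError("bad credentials")  # simulated


# Each key lists the tasks that must finish before it may start.
upstream = {"transform": {"ingest"}, "validate": {"transform"}, "train": {"validate"}}
ok = lambda: None

events = run_pipeline(
    {"ingest": ok, "transform": flaky_transform, "validate": ok, "train": ok}, upstream)
failed = run_pipeline(
    {"ingest": ok, "transform": always_fail, "validate": ok, "train": ok}, upstream)
```

In the first run the transient failure is absorbed by a retry and training still runs; in the second, training never starts because its upstream task never succeeded.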

Use Case 3: Automated Infrastructure Provisioning

A foundational use case for orchestration is Infrastructure as Code (IaC). Using tools like Terraform, teams define their entire cloud infrastructure (servers, databases, networks, firewalls) in declarative configuration files. The orchestration engine interprets these files and provisions the environment as specified, on any cloud provider the tool supports.

This solves several persistent operational challenges:

- Consistency: It reduces configuration drift by building development, staging, and production environments from the same definitions, so each one is a reproducible copy of the others.

- Security and Governance: Security rules and compliance policies are codified within the infrastructure definitions, ensuring that all resources are provisioned securely by default.

- Disaster Recovery: In the event of a regional outage, the orchestrator can use the same code to rebuild the entire infrastructure stack in a different region in minutes, turning a major crisis into a routine, automated procedure.

By treating infrastructure as code, orchestration transforms a slow, manual, and error-prone process into a fast, repeatable, and secure workflow.
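The declarative idea can be illustrated with a toy "plan" step that compares the declared state with what currently exists and lists the changes needed. This is a deliberately simplified sketch, not Terraform's actual algorithm; the resource names and attributes are made up for the example.

```python
def plan(desired: dict[str, dict], actual: dict[str, dict]) -> list[tuple[str, str]]:
    """Compute the actions needed to make `actual` match `desired`."""
    actions: list[tuple[str, str]] = []
    for name in sorted(desired.keys() | actual.keys()):
        if name not in actual:
            actions.append(("create", name))
        elif name not in desired:
            actions.append(("delete", name))
        elif desired[name] != actual[name]:
            actions.append(("update", name))
    return actions


# Declared in configuration files (hypothetical resources).
desired = {
    "vpc-main": {"cidr": "10.0.0.0/16"},
    "db-orders": {"engine": "postgres", "encrypted": True},
    "web-1": {"size": "small"},
}

# What currently exists in the cloud account.
actual = {
    "vpc-main": {"cidr": "10.0.0.0/16"},
    "db-orders": {"engine": "postgres", "encrypted": False},  # drifted
    "old-cache": {"size": "small"},                            # no longer declared
}

changes = plan(desired, actual)
```

Running the same definitions against an empty account yields only `create` actions, which is what makes rebuilding a stack in another region a repeatable operation.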

Comparing Top Orchestration Technologies

Selecting an orchestration tool requires a clear understanding of the distinct layers within a modern tech stack. There is no single “best” tool; the most effective solutions are specialized for specific jobs. Using a tool designed for infrastructure provisioning to manage a data pipeline is a common anti-pattern that leads to unnecessary complexity.

A separate forecast projects the cloud orchestration market to grow from USD 19.49 billion in 2025 to USD 132.36 billion by 2035, driven by the imperative to automate containerized applications and microservices. While enterprises have led adoption, smaller firms are now leveraging managed cloud services to implement orchestration without significant upfront investment. You can find detailed analysis in this full market research report.

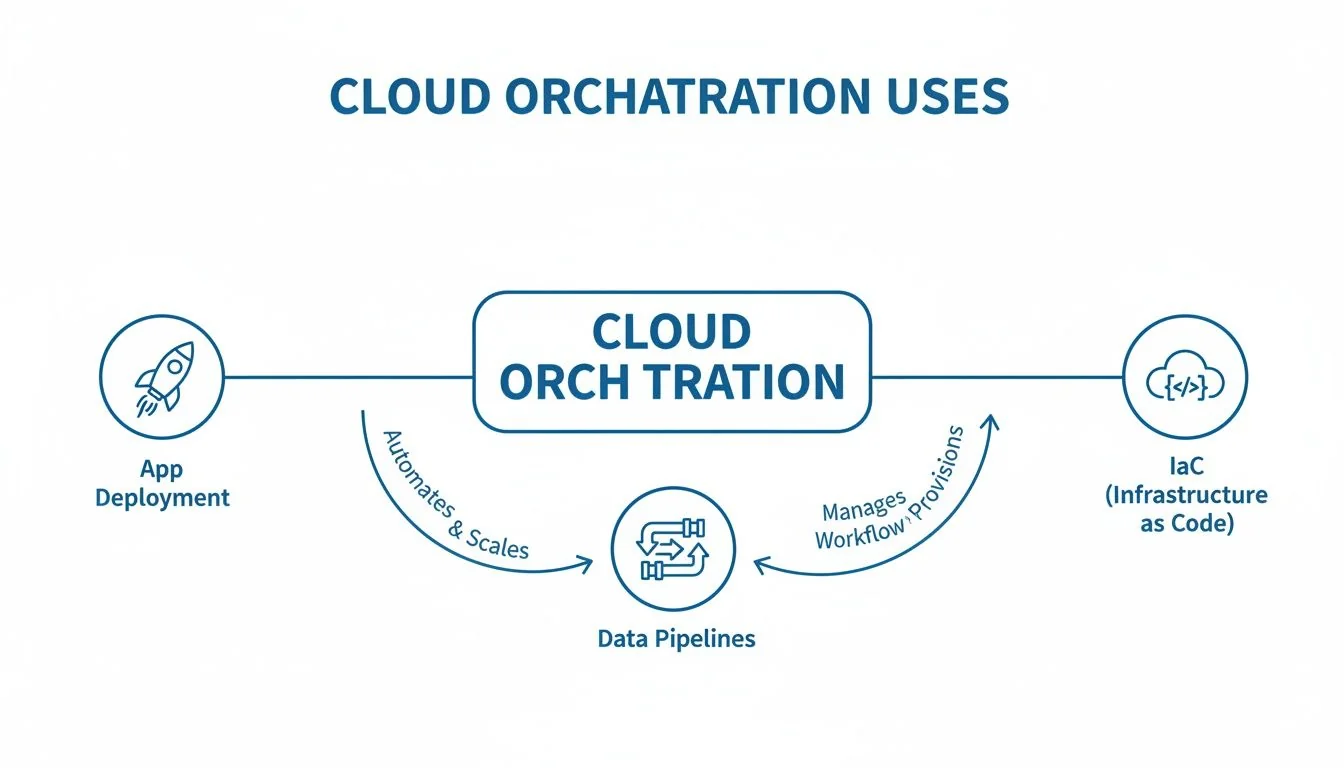

This diagram illustrates how orchestration acts as a coordinating layer across different domains.

Orchestration is not a monolithic concept. It is a coordinating force applied to distinct layers: application, data, and infrastructure. Each layer is best served by specialized tools.

Infrastructure Orchestration: The Foundation Builders

This layer is concerned with provisioning core infrastructure: virtual machines, networks, storage, and databases. This is the domain of Infrastructure as Code (IaC), dominated by tools like Terraform and cloud-native services like AWS CloudFormation.

- Terraform: The de facto standard for multi-cloud and hybrid-cloud infrastructure management. Terraform’s key strength is its provider-agnostic approach, allowing teams to use a single, consistent language to define infrastructure on AWS, Azure, GCP, and other platforms. This is critical for organizations seeking to avoid vendor lock-in.

- Cloud-Native Tools (e.g., AWS CloudFormation): For organizations committed to a single cloud provider, native tools offer deep integration with the platform’s services. The primary trade-off is portability; infrastructure defined in CloudFormation is not easily transferable to another cloud.

The function of these tools is to provision and manage the lifecycle of infrastructure resources. They are not designed to handle the complex, stateful logic of application or data workflows.

Container Orchestration: The Application Managers

Once infrastructure is provisioned, applications must be deployed and managed. Today, this predominantly means containers. Container orchestration platforms automate the deployment, scaling, networking, and lifecycle management of containerized applications.

Kubernetes is the de facto industry standard and is often described as an operating system for the cloud. It manages critical tasks like service discovery, load balancing, and self-healing of containers.

In practice, most organizations use managed Kubernetes services to abstract away the complexity of managing the control plane:

- Amazon Elastic Kubernetes Service (EKS)

- Azure Kubernetes Service (AKS)

- Google Kubernetes Engine (GKE)

These services allow engineering teams to focus on application logic rather than cluster administration. Kubernetes excels at managing the application runtime but does not provision the underlying infrastructure it runs on; that remains the job of an IaC tool.

Workflow Orchestration: The Logic Coordinators

This layer orchestrates business logic. These tools are concerned with tasks, dependencies, retries, and sequencing. They are purpose-built for managing multi-step processes like data pipelines, machine learning workflows, or complex business transactions.

- Apache Airflow: The open-source standard for data engineering. Airflow allows users to define complex data workflows as Python code (Directed Acyclic Graphs, or DAGs), providing fine-grained control over scheduling, dependency management, and monitoring for ETL/ELT processes.

- Managed Services (e.g., AWS Step Functions): Cloud providers offer managed workflow orchestrators designed to coordinate microservices and serverless functions. AWS Step Functions is effective for application logic but may be less flexible and more expensive for the long-running, complex data processing jobs where Airflow excels.

These tools are essential for ensuring that business-critical sequences execute reliably and in the correct order.

GitOps Orchestration: The Delivery Experts

GitOps is a modern paradigm for continuous delivery. The core principle is that a Git repository is the single source of truth for the desired state of the application and infrastructure. Tools like Argo CD and Flux operate within a Kubernetes cluster and continuously monitor a Git repository.

When a change is merged into the repository, the GitOps tool automatically detects the divergence and applies the necessary changes to the cluster to reconcile its state with the repository. This creates a fully automated, auditable, and easily revertible deployment pipeline. Argo CD’s specific function is to keep the cluster’s live state in sync with the state defined in Git.
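The reconcile behavior can be sketched with a fake repository and a dictionary standing in for the cluster. `FakeGitRepo`, `reconcile`, and the manifest contents are hypothetical; the sketch only illustrates the principle that Git is the source of truth, not how Argo CD or Flux are implemented.

```python
import copy


class FakeGitRepo:
    """Hypothetical stand-in for a Git repository holding desired state."""

    def __init__(self) -> None:
        self.history: list[dict] = []

    def commit(self, manifests: dict) -> None:
        self.history.append(copy.deepcopy(manifests))

    def head(self) -> dict:
        return self.history[-1]

    def revert(self) -> None:
        # A revert is a new commit that restores the previous state.
        self.commit(self.history[-2])


def reconcile(repo: FakeGitRepo, cluster: dict) -> bool:
    """Make the live state match Git; return True if a sync was needed."""
    desired = repo.head()
    if cluster == desired:
        return False
    cluster.clear()
    cluster.update(copy.deepcopy(desired))
    return True


repo = FakeGitRepo()
cluster: dict = {}

repo.commit({"api": {"image": "api:1.0", "replicas": 2}})
first_sync = reconcile(repo, cluster)     # new commit detected, cluster updated
second_sync = reconcile(repo, cluster)    # already in sync, nothing to do

cluster["api"]["replicas"] = 10           # manual change made outside Git
drift_fixed = reconcile(repo, cluster)    # drift is reset to the Git state

repo.commit({"api": {"image": "api:1.1", "replicas": 2}})
reconcile(repo, cluster)
repo.revert()                             # roll back by reverting in Git
reconcile(repo, cluster)
```

The rollback at the end involves no special deployment command: the previous state is restored in Git and the next reconcile pass applies it.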

A Practical Checklist for Selecting Your Orchestration Tool

Selecting orchestration technology is a high-impact decision that influences operational efficiency, scalability, and long-term cloud strategy. A superficial, feature-based comparison often leads to technical debt and costly rework.

This checklist provides a structured framework for a rigorous evaluation process. Use these points to design proofs-of-concept (POCs) and cut through marketing claims with real-world testing.

Scalability and Performance

A tool that performs well in a small-scale demo may fail under production load. The objective is to identify performance bottlenecks before the tool is integrated into critical systems.

Stress-test the tool with specific metrics:

- Workload Simulation: Can the tool handle 10,000 concurrent tasks or provision 500 nodes simultaneously without performance degradation? Demand a POC that simulates your expected peak load.

- Latency Under Load: Measure the end-to-end latency for a complex workflow during peak load. Do not rely on vendor benchmarks.

- Resource Footprint: What are the CPU and memory requirements of the orchestrator itself under your projected workload? This directly impacts your cloud bill.
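For the latency measurement, a small harness along these lines can be a starting point. It is a sketch under simple assumptions: `fake_workflow` is a placeholder for whatever call triggers a workflow in the tool under evaluation, and the run count and concurrency are far below a realistic peak load.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def timed_call(workflow: Callable[[], None]) -> float:
    """Return the wall-clock duration of one workflow run in seconds."""
    start = time.perf_counter()
    workflow()
    return time.perf_counter() - start


def load_test(workflow: Callable[[], None],
              total_runs: int = 100, concurrency: int = 10) -> dict[str, float]:
    """Run the workflow concurrently and summarize end-to-end latency."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(lambda _: timed_call(workflow), range(total_runs)))
    percentiles = statistics.quantiles(latencies, n=100)  # 99 cut points
    return {
        "p50": statistics.median(latencies),
        "p95": percentiles[94],
        "max": max(latencies),
    }


def fake_workflow() -> None:
    time.sleep(0.001)  # placeholder for submitting and awaiting a real workflow


result = load_test(fake_workflow)
```

Reporting percentiles rather than an average matters here, because a tool can look fine at the median while the slowest runs miss their deadlines.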

Cost and Total Cost of Ownership

The license fee is only one component of the Total Cost of Ownership (TCO). A comprehensive analysis must also include infrastructure costs, operational overhead, and the “people cost”: the training, hiring, and retention of engineers with the niche skills required to operate the platform effectively.

To uncover hidden costs, ask vendors:

- What is the pricing model (per-user, per-node, consumption-based), and how does it scale as our usage grows by 10x?

- What level of engineering expertise is required for day-to-day management, maintenance, and upgrades? Assess this against your team’s current skill set.

- Can you provide a reference case study detailing the TCO for an organization at our scale?

Another estimate values the cloud orchestration market at USD 23.2 billion in 2024 and projects USD 84.8 billion by 2033, a 15.5% CAGR. This growth is driven by the shift to hybrid cloud and microservices, where automation across SaaS, PaaS, and IaaS is mandatory. You can explore this cloud orchestration market trend for more details.

Vendor Lock-in and Portability

Choosing an orchestrator is a significant commitment. It is crucial to avoid proprietary ecosystems that make it difficult or expensive to migrate to a different tool or cloud provider in the future.

Evaluate your exit strategy from the beginning:

- Migration Path: What is the process for exporting workflows from the platform? Does it store workflow definitions in an open, text-based format (e.g., YAML files) or provide a clean export mechanism?

- Cloud Agnosticism: Is the tool truly multi-cloud, or are certain features optimized for a specific provider like AWS, Azure, or GCP?

- Open-Source Core: Is the tool built on a healthy, active open-source project? An open-source foundation can mitigate vendor lock-in and provides access to a larger talent pool.

Security and Compliance

An orchestrator has privileged access to your entire infrastructure, making it a high-value target. Its capabilities for secrets management, policy enforcement, and auditability are critical.

Demand concrete evidence of its security posture:

- Secrets Management: How does the tool integrate with your existing secrets manager (e.g., HashiCorp Vault, AWS Secrets Manager) to handle credentials securely?

- Policy as Code: How does the solution enforce compliance rules—such as ensuring all storage buckets are encrypted—before resources are provisioned?

- Audit Trail: Can the tool provide a comprehensive, end-to-end audit trail that traces a single action from user request to the final API call for compliance purposes?
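To make the policy-as-code question concrete, the sketch below checks a resource definition against rules before anything is provisioned. The resource schema, the `owner` tag rule, and the function names are assumptions for illustration; real tools use their own policy languages and hook into the provisioning step differently.

```python
def check_policies(resource: dict) -> list[str]:
    """Return a list of policy violations for a resource definition."""
    violations: list[str] = []
    if resource.get("type") == "storage_bucket" and not resource.get("encrypted", False):
        violations.append("storage buckets must be encrypted")
    if not resource.get("tags", {}).get("owner"):
        violations.append("an owner tag is required")  # hypothetical rule
    return violations


def provision(resource: dict, deployed: list[str]) -> None:
    """Provision only if the resource passes every policy check."""
    violations = check_policies(resource)
    if violations:
        raise PermissionError(f"{resource['name']}: " + "; ".join(violations))
    deployed.append(resource["name"])  # stand-in for the real provisioning call


deployed: list[str] = []

provision({"name": "audit-logs", "type": "storage_bucket",
           "encrypted": True, "tags": {"owner": "platform"}}, deployed)

try:
    provision({"name": "raw-logs", "type": "storage_bucket",
               "encrypted": False, "tags": {"owner": "data"}}, deployed)
except PermissionError as err:
    rejection = str(err)
```

The important property to test in a POC is the ordering: the check runs before the resource exists, so a non-compliant bucket is never created rather than being flagged afterwards.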

Observability and Integration

An orchestration tool must integrate seamlessly with your existing monitoring, logging, and alerting systems. Poor integration creates operational blind spots and complicates troubleshooting. To see how different tools approach this, a detailed ETL tools comparison can offer valuable insights.

Assess its compatibility with your ecosystem:

- Monitoring Stack: Does it offer out-of-the-box integrations for your primary observability platforms, such as Datadog, Grafana, or Prometheus?

- API Extensibility: How robust and well-documented is its API? Can your platform teams build custom tools and automations on top of it?

- Ecosystem Support: How extensive is the library of pre-built integrations for other tools in your stack, from CI/CD systems to data platforms?

Your Cloud Orchestration Questions Answered

Here are answers to common questions that technical leaders face when developing an orchestration strategy.

What’s the Real Difference Between Automation and Orchestration?

While often used interchangeably, these terms represent distinct concepts. Understanding the difference is crucial for a scalable cloud strategy.

Automation refers to the execution of a single, discrete task without human intervention. Examples include provisioning a VM or running a database backup. It is tactical and focused on a specific action.

Orchestration is the coordination of multiple automated tasks into a coherent, end-to-end workflow. It manages dependencies, sequencing, and error handling across tasks to achieve a larger business outcome.

Automation is the what—“create this server.” Orchestration is the how, when, and why—“create the server, wait for it to be ready, then install the database, and only then, deploy the code.”
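That sequence can be written as a short sketch in which each appended log line stands for one automated task and the surrounding function is the orchestration. `FakeServer`, `wait_until`, and `deploy_stack` are hypothetical names; the server simply reports ready after a few polls.

```python
import time
from typing import Callable


class FakeServer:
    """Hypothetical stand-in for a cloud API; ready after a few polls."""

    def __init__(self, polls_until_ready: int = 3) -> None:
        self._remaining = polls_until_ready

    def is_ready(self) -> bool:
        self._remaining -= 1
        return self._remaining <= 0


def wait_until(predicate: Callable[[], bool],
               timeout_s: float = 5.0, interval_s: float = 0.01) -> None:
    """Poll until the predicate is true or the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        if predicate():
            return
        time.sleep(interval_s)
    raise TimeoutError("resource did not become ready in time")


def deploy_stack(server: FakeServer, log: list[str]) -> None:
    log.append("create server")      # automation: one discrete task
    wait_until(server.is_ready)      # orchestration: wait on a dependency
    log.append("install database")   # runs only after the server is ready
    log.append("deploy code")        # runs only after the database step


log: list[str] = []
deploy_stack(FakeServer(), log)
```

If the server never becomes ready, `wait_until` raises and the later steps do not run, which is the kind of dependency handling a standalone script usually lacks.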

Can One Tool Really Do All Kinds of Orchestration?

No. The notion of a single tool for all orchestration needs is a misconception. The ecosystem is specialized because different layers of the tech stack have different requirements. Attempting to use a single tool for everything typically results in a fragile, overly complex system.

A more effective strategy is to assemble a “team” of best-of-breed tools, each specialized for its domain. A modern stack often includes:

- Infrastructure: A tool like Terraform for provisioning foundational cloud resources.

- Containers: Kubernetes (via a managed service like EKS or GKE) for managing the lifecycle of containerized applications.

- Workflows: A system like Apache Airflow for managing complex business logic and dependencies in data pipelines.

The goal is not to find a single tool but to integrate these specialized solutions into a cohesive system.

How Does Orchestration Actually Lower My Cloud Bill?

Effective orchestration is a direct mechanism for controlling cloud spend. It shifts cost management from a reactive, end-of-month review to a proactive, automated discipline. By codifying cost-control rules, you can eliminate the manual errors and orphaned resources that lead to budget overruns.

Orchestration reduces costs in three primary ways:

- Intelligent Scaling: It automatically scales resources up to meet demand and, critically, scales them down when they are no longer needed, eliminating payment for idle compute.

- Environment Lifecycle Management: It automates the provisioning and decommissioning of temporary environments for development, testing, and QA, preventing “zombie” resources from being left running.

- Policy Enforcement: It enforces cost-control policies at the point of provisioning. You can mandate specific instance types or require cost-allocation tags, and the orchestration tool ensures compliance.

This automated governance is the most effective defense against the resource sprawl that inflates cloud bills.
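The scaling behavior in the first bullet can be approximated with a proportional rule: adjust the replica count by the ratio of the observed metric to its target. This mirrors the basic formula documented for the Kubernetes Horizontal Pod Autoscaler, but it leaves out tolerance bands and stabilization windows; the function name, the default bounds, and the sample values are assumptions.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Scale replicas in proportion to metric/target, within fixed bounds."""
    raw = math.ceil(current_replicas * (current_metric / target_metric))
    return max(min_replicas, min(max_replicas, raw))


# Average CPU utilization (%) against a 60% target, starting from 4 replicas.
scale_up = desired_replicas(4, current_metric=90, target_metric=60)
scale_down = desired_replicas(4, current_metric=15, target_metric=60)
capped = desired_replicas(4, current_metric=300, target_metric=60)
```

The scale-down case is where the savings come from: at 15% utilization the rule drops from four replicas to one, and the upper bound keeps a traffic spike from producing an unbounded bill.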


Data-driven market researcher with 20+ years in market research and 10+ years helping software agencies and IT organizations make evidence-based decisions. Former market research analyst at Aviva Investors and Credit Suisse.

Previously: Aviva Investors · Credit Suisse · Brainhub · 100Signals
