A Pragmatic Guide to Data Engineering Project Management

Managing a data engineering project is fundamentally an exercise in risk mitigation and value delivery. It is not about elegant code or trendy platforms; it is about the disciplined execution that separates a valuable data asset from a costly, over-budget failure. When a data engineering project misses deadlines or exceeds its budget, the root cause is almost always a failure in project management, not a technical limitation. For engineering leaders managing these initiatives, a structured approach is non-negotiable.

Why Data Engineering Projects Fail: An Evidence-Based View

A significant number of data engineering projects fail to deliver on their initial promise. This failure is rarely due to a lack of engineering talent. Elite teams using powerful platforms like Snowflake or Databricks are frequently derailed by predictable, non-technical issues.

The primary problem is a persistent disconnect between engineering execution and specific business outcomes. This gap triggers a cascade of issues, from scope creep to stakeholder disengagement. The consequences are measurable: only 38% of organizations consistently complete projects on time, and just 41% stay within their original budget.

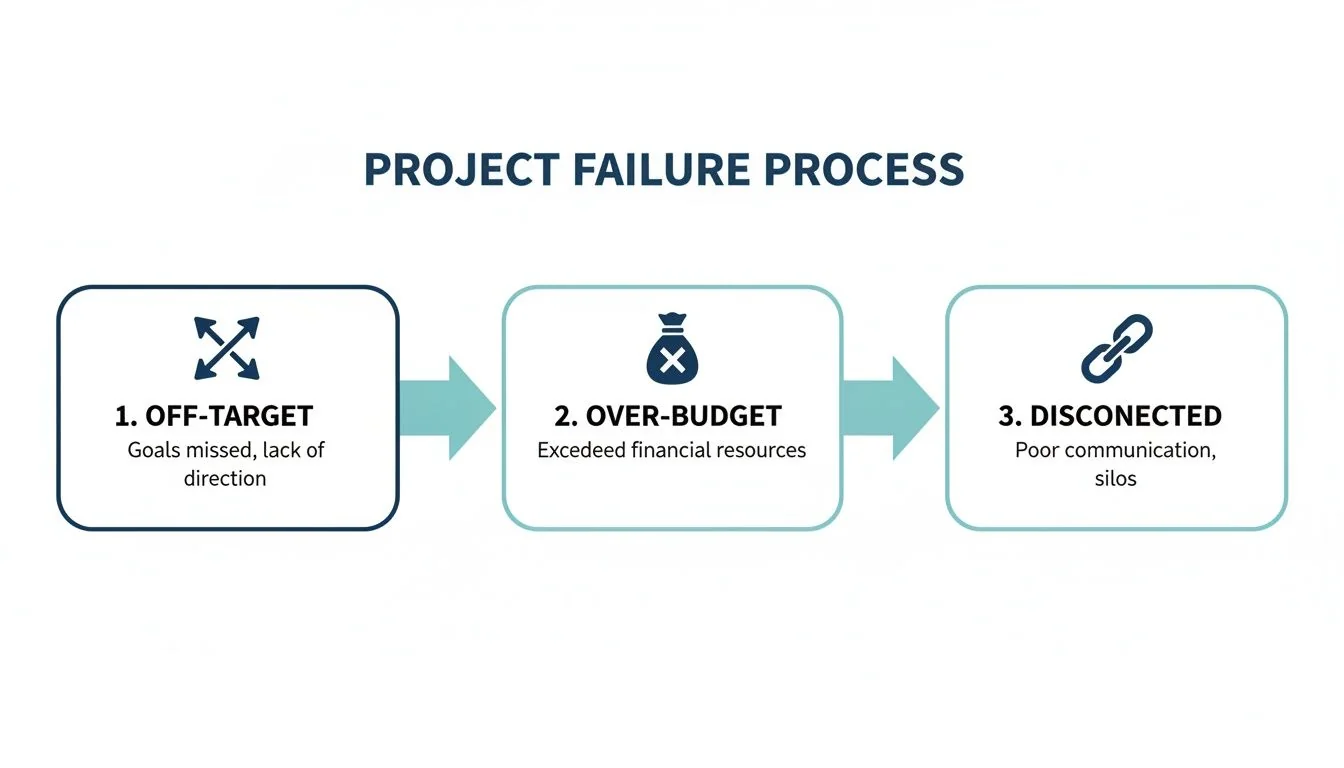

Anatomy of Failure: The Three Core Breakdowns

Data project failure follows a consistent pattern. These are the most common breakdowns engineering leaders must prevent.

- Unrealistic or Vague Scope: Projects initiated with ambiguous goals (“modernize our analytics”) are destined for failure. Without a quantifiable, bounded scope, requirements will shift continuously, resulting in rework and budget overruns. Managing this requires a rigorous process; learning how to prevent scope creep and protect project profitability is a core competency for any project lead.

- Mismanaged Consulting Engagements: Engaging an external firm without disciplined oversight introduces significant risk. Ambiguous Statements of Work (SOWs), inconsistent communication, and weak governance lead directly to misaligned priorities and uncontrolled budget expansion.

- Focus on Technology Over Business Value: Technical teams can become fixated on building a “perfect” or “future-proof” architecture. Stakeholders, however, measure success by tangible business results. A project that runs for months without delivering measurable value will lose executive support.

According to DataEngineeringCompanies.com’s analysis of 86 data engineering firms, projects with ambiguous success criteria at kickoff are 50% more likely to exceed their budget by at least 25%. This data directly correlates a lack of initial alignment with significant financial risk.

Common Failure Points in Data Engineering Projects and Their Solutions

These issues consistently derail data initiatives. This framework helps leaders identify and mitigate them proactively.

| Failure Point | Leading Indicator | Actionable Mitigation Strategy |
|---|---|---|
| Vague Business Requirements | Stakeholders use fuzzy terms like “better insights” or “modernize our data.” | Insist on quantifiable KPIs before any work begins. Translate business goals into specific data outcomes (e.g., “reduce report generation time from 4 hours to 15 minutes”). |
| Lack of Stakeholder Buy-In | Key business users repeatedly miss planning meetings or are slow to provide feedback. | Establish a formal project steering committee with defined roles and responsibilities. Schedule mandatory bi-weekly demos to maintain engagement and gather feedback. |
| Poor Source Data Quality | Engineers discover source data is incomplete, inconsistent, or undocumented mid-project. | Mandate a dedicated data audit and profiling phase during project discovery. Allocate 15-20% of the initial engineering timeline specifically for data cleansing and preparation. |
| “Big Bang” Delivery Model | The project plan outlines a single, monolithic deployment after 6-9 months of development. | Re-architect the project plan for agile, milestone-based delivery. Mandate the delivery of a usable, high-value data product every 4-6 weeks to build momentum and demonstrate value. |
| Technology-Driven Development | The team spends the first month debating tools (e.g., Airflow vs. Dagster) instead of user needs. | Freeze all technology decisions until the business requirements and core use cases are documented and signed off. The problem must define the tool, not the other way around. |

Recognizing these patterns is the first step. A structured project management framework that prioritizes quantifiable goals, incremental delivery, and continuous communication turns high-risk data initiatives into predictable, value-driven successes.

The Five-Phase Lifecycle for Data Platform Builds

Monolithic, “big bang” data platform projects are a direct path to failure. Building a modern data platform requires an iterative methodology that delivers business value quickly and adapts to new information. The most successful data engineering initiatives follow a structured, five-phase lifecycle specifically designed for cloud-native tools like Snowflake or Databricks.

The purpose of this framework is to prevent the common failure sequence of data projects: vague scope drives continuous rework, rework drives budget overruns, and months without measurable value erode executive support.

That outcome is avoidable by structuring work into gated phases. Each gate forces a critical alignment decision, ensuring the project does not proceed with a fundamental flaw.

A Battle-Tested Five-Phase Framework

This roadmap keeps technical teams focused on business outcomes, manages scope, and delivers value in predictable increments.

Phase 1: Discovery & Scoping (Weeks 1-4)

This phase translates vague business requests into a concrete, prioritized backlog. No code is written.

- Key Activities: Conduct stakeholder interviews to define specific business questions. Sketch a high-level entity-relationship diagram (ERD). Draft a Success Criteria Document defining quantifiable outcomes (e.g., “reduce customer churn analysis time from 3 days to 2 hours”).

- The Gate: This phase is not complete until business sponsors formally sign off on the prioritized use cases and their associated KPIs. This is the primary defense against building a platform no one uses.
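The Success Criteria Document is usually a written artifact, but the gate is easier to enforce when every KPI is recorded with a baseline, a target, and a unit. The sketch below is a minimal illustration, assuming a hypothetical `SuccessCriterion` record; it is not a prescribed template.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriterion:
    """Hypothetical record for one quantifiable outcome in the Success Criteria Document."""
    name: str
    unit: str
    baseline: float  # measured before the project starts
    target: float    # value the business sponsor signs off on
    lower_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        # Compare an observed value against the signed-off target.
        if self.lower_is_better:
            return observed <= self.target
        return observed >= self.target


# Example from the text: churn analysis time, from 3 days (72 h) to 2 hours.
churn_analysis = SuccessCriterion(
    name="Customer churn analysis time",
    unit="hours",
    baseline=72.0,
    target=2.0,
)

print(churn_analysis.is_met(1.5))  # True
print(churn_analysis.is_met(6.0))  # False
```

Keeping the baseline next to the target means the sponsor signs off on a measurable improvement, not just an aspiration.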

Phase 2: Architectural Design (Weeks 5-6)

With a clear “what,” the team defines the “how.” This is where defensible technology choices are made based on the defined use cases, not trends.

- Key Deliverables: Produce a detailed technical design document specifying data flows, modeling layers, and CI/CD strategy. A mandatory component is a total cost of ownership (TCO) analysis comparing at least two viable platform options (e.g., Snowflake vs. Databricks) based on projected workloads and data volume.

- The Gate: The project does not proceed until the TCO is approved and the architectural design is signed off by the lead architect or VP of Engineering.
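At its simplest, the TCO comparison is a sum of projected monthly compute, storage, and egress costs multiplied over the evaluation period. The sketch below assumes a flat monthly projection, and the figures for "Option A" and "Option B" are placeholder inputs for illustration only, not real pricing for any platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlyCostEstimate:
    """Projected monthly cost components for one platform option (USD)."""
    option: str
    compute: float
    storage: float
    egress: float

    def monthly_total(self) -> float:
        return self.compute + self.storage + self.egress

    def tco(self, months: int = 12) -> float:
        # Flat projection: assumes the same cost every month.
        return self.monthly_total() * months


# Placeholder inputs for illustration only.
options = [
    MonthlyCostEstimate("Option A", compute=18_000, storage=2_000, egress=500),
    MonthlyCostEstimate("Option B", compute=15_000, storage=2_500, egress=1_500),
]

for opt in sorted(options, key=lambda o: o.tco()):
    print(f"{opt.option}: 12-month TCO = ${opt.tco():,.0f}")
# Option B: 12-month TCO = $228,000
# Option A: 12-month TCO = $246,000
```

A real analysis would also model workload growth and negotiated discounts; the flat projection is a deliberate simplification that still forces each option's cost drivers into the open.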

Phase 3: Iterative Build (Sprints, Weeks 7-20)

The project shifts into an agile execution rhythm. The team works in short sprints (typically two weeks) to build, test, and deliver functional components of the platform.

- Typical Cycle: Ingest a new data source, apply business logic with a tool like dbt, and expose the curated data mart for analyst use. Each cycle must produce a testable, usable output.

- The Gate: Each sprint concludes with a demo to stakeholders. The sprint is not “done” until the acceptance criteria for its user stories are met.

Phase 4: User Acceptance & Deployment (Weeks 21-22)

Moving from development to a stable production environment requires a dedicated process. This is about building a reliable, automated pipeline for code promotion.

- Core Activities: Configure CI/CD pipelines to automate testing and deployment. Conduct formal User Acceptance Testing (UAT) with business users. Finalize operational runbooks and on-call schedules.

- The Gate: The platform is not “live” until UAT is passed and the operations team formally accepts the handover documentation.

Phase 5: Optimization & Governance (Ongoing)

The project is not “done” at launch. It transitions into a continuous loop of monitoring, cost management, and enhancement based on real-world usage.

- Ongoing Tasks: Implement automated alerts for query performance degradation and budget anomalies. Fine-tune cloud spend based on usage patterns. Evolve data models to support new business requirements. This ongoing iteration is the core of effective data engineering project management.

How to Select and Manage a Data Engineering Consultancy

Selecting a consulting partner is the highest-leverage decision an engineering leader makes on a data project. The right firm accelerates your roadmap by years; the wrong one consumes budget and delivers technical debt. The objective is to see past sales pitches and identify partners with proven, relevant expertise.

The evaluation process starts with a technically rigorous Request for Proposal (RFP). A well-structured RFP functions as a technical screen. Based on our analysis of 86 data engineering firms, the top-tier partners differentiate themselves not on price, but on their ability to present a clear, milestone-driven plan that directly addresses the business problem with a credible technical solution.

Structuring a Technically Rigorous RFP

Your RFP is a technical interview for an entire company. It must force prospective partners to demonstrate expertise, not just claim it.

- Technology-Specific Scenarios: Do not ask, “Do you have experience with Airflow?” Instead, provide a scenario: “Describe how you would design an idempotent and backfill-capable data pipeline using Airflow to process 1TB of daily event data from S3. What specific operators would you use and how would you manage state?”

- Architectural Trade-offs: Force a defensible position. For example: “Given our requirement for sub-second query latency on 10TB of nested JSON data, argue for either Snowflake or Databricks with Delta Lake. Your justification must include a TCO comparison for a 12-month period, factoring in compute, storage, and egress costs.”

- dbt Best Practices: Test their modeling discipline. Ask: “Provide a sample dbt_project.yml and directory structure for a multi-layered data model (staging, intermediate, marts). How do you enforce data quality tests and documentation standards within this framework?”
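To calibrate what a strong answer to the Airflow scenario should contain, the core idea of an idempotent, backfill-capable pipeline can be shown without Airflow at all: each run owns exactly one date partition and overwrites it, so re-running or backfilling a date never duplicates data. This is a plain-Python sketch; the in-memory `warehouse` dict and the `extract_events` stub are hypothetical stand-ins, and no Airflow APIs are used.

```python
from datetime import date, timedelta

# Hypothetical target table: partition date -> rows for that date.
warehouse: dict[date, list[dict]] = {}


def extract_events(partition: date) -> list[dict]:
    # Stand-in for reading one day's event files (e.g., from S3).
    return [{"event_date": partition.isoformat(), "event_id": i} for i in range(3)]


def run_partition(partition: date) -> None:
    """Process one logical date; overwriting the partition makes reruns idempotent."""
    warehouse[partition] = extract_events(partition)  # replace, never append


def backfill(start: date, end: date) -> None:
    # Each day is an independent unit of work, so any range can be replayed safely.
    day = start
    while day <= end:
        run_partition(day)
        day += timedelta(days=1)


backfill(date(2024, 1, 1), date(2024, 1, 3))
backfill(date(2024, 1, 2), date(2024, 1, 3))  # rerun: no duplicate rows
print(sum(len(rows) for rows in warehouse.values()))  # 9
```

In Airflow terms, the partition would typically be derived from the run's logical date, and the overwrite would be a partition-level replace or MERGE in the warehouse. A candidate who explains the equivalent of both pieces has understood the question.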

From Contract to Kickoff: Establishing Governance

Once a partner is selected, the focus must shift immediately to governance. An ambiguous Statement of Work (SOW) is an invitation for scope creep. The SOW must be airtight, with explicit deliverables, timelines, and quantitative acceptance criteria for each project phase.

From day one, establish a firm communication rhythm:

- Weekly Technical Syncs: For the core project team to resolve technical blockers and review progress at a granular level.

- Bi-Weekly Steering Committee: For executive sponsors and consultancy leadership to review budget vs. actuals, track major milestones, and ensure continued alignment with business goals.

This dual-track governance model maintains both tactical momentum and strategic alignment, ensuring the selected partner delivers on their promises.

Measuring Success: KPIs for Data Engineering Projects

Vanity metrics like “tasks completed” are insufficient for managing data engineering projects. Leaders require unambiguous signals on project velocity, financial health, and business impact. A multi-faceted measurement framework is essential.

Engineering Velocity and Predictability

These metrics measure the engineering team’s throughput and process efficiency.

- Sprint Velocity (Predictability over Volume): The goal is not the highest number of story points, but a stable, predictable velocity. Consistency indicates the team has hit a sustainable pace, allowing for reliable forecasting of delivery dates.

- Cycle Time: This measures the wall-clock time from when a task begins to when it is deployed to production. A decreasing cycle time is a strong indicator of improving process efficiency and bottleneck removal.
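Both metrics can be computed from data most ticketing tools export. The sketch below assumes hypothetical inputs: completed story points per sprint, and (work started, deployed to production) timestamps per task. It uses the coefficient of variation (standard deviation divided by mean) as one reasonable stability measure for velocity; it is not a standard definition of "predictable".

```python
from datetime import datetime
from statistics import mean, median, pstdev


def velocity_cv(points_per_sprint: list[float]) -> float:
    """Coefficient of variation of sprint velocity; lower means more predictable."""
    return pstdev(points_per_sprint) / mean(points_per_sprint)


def median_cycle_time_hours(tasks: list[tuple[datetime, datetime]]) -> float:
    """Median wall-clock hours from work start to production deployment."""
    durations = [(deployed - started).total_seconds() / 3600 for started, deployed in tasks]
    return median(durations)


steady = [30, 32, 29, 31, 30]
erratic = [10, 55, 20, 48, 17]
print(velocity_cv(steady) < velocity_cv(erratic))  # True

tasks = [
    (datetime(2024, 3, 1, 9), datetime(2024, 3, 2, 9)),   # 24 h
    (datetime(2024, 3, 4, 9), datetime(2024, 3, 4, 21)),  # 12 h
    (datetime(2024, 3, 5, 9), datetime(2024, 3, 7, 9)),   # 48 h
]
print(median_cycle_time_hours(tasks))  # 24.0
```

The median is used for cycle time because a few long-running tasks would otherwise dominate a mean and hide a genuine trend.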

Financial Discipline and Cloud Cost Control

Financial oversight is non-negotiable.

- Budget vs. Actual: This must be tracked monthly, comparing planned spend for both consulting services and cloud platforms (AWS, GCP, Azure) against actual consumption.

- Cloud Cost per Environment: Total cloud spend is an incomplete metric. Break down costs by environment (dev, test, prod) to identify waste, such as oversized or idle development resources.
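Budget vs. actual and cost per environment are two views of the same aggregation. The sketch below assumes cost line items have already been exported with an `environment` tag; the record shape and amounts are hypothetical, and real billing exports differ by cloud provider.

```python
from collections import defaultdict


def cost_by_environment(line_items: list[dict]) -> dict[str, float]:
    """Sum cost per environment tag; untagged spend is grouped as 'untagged'."""
    totals: dict[str, float] = defaultdict(float)
    for item in line_items:
        totals[item.get("environment", "untagged")] += item["cost"]
    return dict(totals)


def budget_variance(planned: float, actual: float) -> float:
    """Percent over (+) or under (-) the planned budget."""
    return (actual - planned) / planned * 100


# Hypothetical monthly line items (USD).
items = [
    {"service": "warehouse", "environment": "prod", "cost": 9_000.0},
    {"service": "warehouse", "environment": "dev", "cost": 4_500.0},
    {"service": "storage", "environment": "prod", "cost": 1_000.0},
    {"service": "notebooks", "cost": 500.0},  # missing environment tag
]

by_env = cost_by_environment(items)
print(by_env)  # {'prod': 10000.0, 'dev': 4500.0, 'untagged': 500.0}
print(f"{budget_variance(planned=12_000, actual=sum(by_env.values())):+.1f}%")  # +25.0%
```

Surfacing untagged spend as its own bucket keeps gaps in the tagging policy visible instead of silently folding them into a total.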

Business Value and ROI

This is the ultimate measure of project success and justifies future investment.

- Data Uptime & SLA Adherence: Track the percentage of time critical datasets are available and meet quality standards. If you promise the finance team 99.95% uptime for their end-of-quarter reporting data, this metric proves you are delivering.

- Query Performance Improvement: This provides a tangible metric of success. Example: “The new data model reduced the P95 query time for the executive sales dashboard from 90 seconds to 5 seconds.” This is a clear win to report to stakeholders.
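Both business-facing metrics reduce to short calculations. The sketch below assumes hypothetical inputs: a count of monitoring checks in which the dataset was available and met its quality thresholds, and a list of dashboard query latencies. It uses the nearest-rank method for P95; other percentile definitions give slightly different values.

```python
import math


def uptime_pct(passed_checks: int, total_checks: int) -> float:
    """Share of checks in which the dataset was available and met quality standards."""
    return passed_checks / total_checks * 100


def p95(latencies_s: list[float]) -> float:
    """95th-percentile latency using the nearest-rank method."""
    ordered = sorted(latencies_s)
    rank = math.ceil(95 * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# One check per minute over a 30-day month = 43,200 checks.
total = 30 * 24 * 60
print(f"{uptime_pct(total - 20, total):.3f}%")  # 99.954%
print(uptime_pct(total - 20, total) >= 99.95)   # True

latencies = [float(s) for s in range(1, 101)]  # 1..100 seconds
print(p95(latencies))  # 95.0
```

At one check per minute, a 99.95% monthly target leaves room for only about 21 failed checks, which is why the measurement method should be agreed with the business team up front.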

According to DataEngineeringCompanies.com’s analysis of 86 firms, projects that implement proactive cloud cost controls from the initial design phase are 30% less likely to exceed their budget than those that attempt to optimize costs post-launch.

A Framework for Cloud Cost Control

Runaway cloud spend can derail a project. An effective cost control strategy is multi-layered.

First, implement a mandatory resource tagging policy. Every resource must be tagged with project, environment, and owner. This is non-negotiable and provides immediate visibility for cost allocation. Couple this with automated budget alerts that notify stakeholders when spending crosses 75% of the monthly forecast.
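Both controls in this step are simple rules that can run in CI or on a schedule. The sketch below assumes resources are available as dictionaries with a `tags` mapping (a hypothetical shape; in practice this would come from the cloud provider's inventory or billing export) and that the monthly forecast and month-to-date spend are already known.

```python
REQUIRED_TAGS = {"project", "environment", "owner"}
ALERT_THRESHOLD = 0.75  # alert when spend crosses 75% of the monthly forecast


def missing_tags(resources: list[dict]) -> dict[str, set[str]]:
    """Map resource id -> required tags it lacks; an empty result means compliant."""
    violations = {}
    for res in resources:
        missing = REQUIRED_TAGS - set(res.get("tags", {}))
        if missing:
            violations[res["id"]] = missing
    return violations


def should_alert(month_to_date_spend: float, monthly_forecast: float) -> bool:
    return month_to_date_spend >= ALERT_THRESHOLD * monthly_forecast


resources = [
    {"id": "etl-cluster", "tags": {"project": "dp", "environment": "prod", "owner": "data-eng"}},
    {"id": "scratch-bucket", "tags": {"project": "dp"}},
]
print({rid: sorted(tags) for rid, tags in missing_tags(resources).items()})
# {'scratch-bucket': ['environment', 'owner']}
print(should_alert(7_600, 10_000))  # True
print(should_alert(7_000, 10_000))  # False
```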

Second, integrate cost control into the architecture. When using a platform like Snowflake, this means selecting the correct virtual warehouse size for each workload (do not default to Large) and setting aggressive auto-suspend policies (e.g., 60 seconds). These are not suggestions; they are fundamental budget controls. For a detailed guide, review our proven techniques for Snowflake cost optimization. Proactive management prevents budget-breaking invoices.
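Warehouse settings can be checked the same way. In Snowflake, size and auto-suspend are set with `ALTER WAREHOUSE ... SET WAREHOUSE_SIZE = ... AUTO_SUSPEND = ...`, where AUTO_SUSPEND is in seconds and 0 or NULL means the warehouse never suspends. The sketch below validates a hypothetical list of warehouse configurations against one way of encoding the two rules above: Large or bigger requires an explicit justification, and auto-suspend must be enabled and at most 60 seconds. The config shape and the `justified` flag are assumptions.

```python
# Warehouse sizes in ascending order (a subset; larger sizes exist).
SIZE_ORDER = ["XSMALL", "SMALL", "MEDIUM", "LARGE", "XLARGE", "XXLARGE"]
MAX_AUTO_SUSPEND_S = 60


def warehouse_violations(wh: dict) -> list[str]:
    """Return policy violations for one hypothetical warehouse config."""
    problems = []
    # SIZE_ORDER.index raises ValueError for sizes not in the list above.
    if SIZE_ORDER.index(wh["size"]) >= SIZE_ORDER.index("LARGE") and not wh.get("justified", False):
        problems.append(f"{wh['name']}: size {wh['size']} needs a documented justification")
    suspend = wh.get("auto_suspend_s")
    if not suspend:  # 0 or None: the warehouse never auto-suspends
        problems.append(f"{wh['name']}: auto-suspend is disabled")
    elif suspend > MAX_AUTO_SUSPEND_S:
        problems.append(f"{wh['name']}: auto-suspend {suspend}s exceeds {MAX_AUTO_SUSPEND_S}s")
    return problems


warehouses = [
    {"name": "TRANSFORM_WH", "size": "MEDIUM", "auto_suspend_s": 60},
    {"name": "ADHOC_WH", "size": "LARGE", "auto_suspend_s": 600},
]
for wh in warehouses:
    for problem in warehouse_violations(wh):
        print(problem)
# ADHOC_WH: size LARGE needs a documented justification
# ADHOC_WH: auto-suspend 600s exceeds 60s
```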

Integrating Risk Management and Governance

Data pipelines handle a company’s most critical assets. Treating risk management as a post-launch activity is a significant liability. Effective data engineering project management involves identifying threats before they materialize and establishing clear ownership from day one.

Begin with a living risk register—a practical document tracking real-world threats. The most common risks for data platform projects are predictable:

- Data Quality Degradation: The “garbage in, garbage out” problem, amplified at scale.

- Security Vulnerabilities: Misconfigured IAM roles, unencrypted data in transit, or insecure API endpoints.

- Vendor Lock-In: Over-reliance on a single proprietary tool, limiting future architectural flexibility.

- Scope Creep: The accumulation of “small” stakeholder requests that derail timelines and inflate budgets.
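A risk register only stays "living" if each entry has an owner, a score, and a mitigation that can be reviewed. A minimal sketch, assuming likelihood × impact scoring on 1-5 scales (a common convention chosen here for illustration); the scores assigned below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    """One entry in a living risk register (hypothetical fields)."""
    title: str
    likelihood: int  # 1 (rare) .. 5 (almost certain)
    impact: int      # 1 (minor) .. 5 (severe)
    owner: str
    mitigation: str = ""

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


register = [
    Risk("Data quality degradation", likelihood=4, impact=4, owner="data-eng",
         mitigation="dbt tests on every pipeline run"),
    Risk("Security vulnerabilities", likelihood=2, impact=5, owner="platform"),
    Risk("Vendor lock-in", likelihood=3, impact=3, owner="architecture"),
    Risk("Scope creep", likelihood=4, impact=3, owner="project-lead"),
]

# Review highest-scoring risks first; flag any entry without a mitigation plan.
for risk in sorted(register, key=lambda r: r.score, reverse=True):
    flag = "" if risk.mitigation else "  <-- needs mitigation"
    print(f"{risk.score:>2}  {risk.title}{flag}")
```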

From Risk Identification to Mitigation

For each identified risk, a specific mitigation strategy is required. This involves implementing technical and procedural guardrails.

| Risk | Mitigation Strategy |
|---|---|
| Data Quality Degradation | Implement data contracts at ingestion points. Use dbt to run automated data quality tests (freshness, uniqueness, referential integrity) on every pipeline run. |
| Security Vulnerabilities | Mandate peer review for all Infrastructure-as-Code (IaC) changes. Run automated security scans (e.g., Trivy, tfsec) within the CI/CD pipeline. Enforce principle of least privilege for all database roles. |
| Vendor Lock-In | Prioritize tools with open standards and APIs (e.g., SQL, Parquet). Isolate vendor-specific logic behind an abstraction layer to simplify future migration. |
| Scope Creep | Institute a formal change control process. All new requests must be evaluated for business value, cost, and timeline impact before being approved by the steering committee. |
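dbt expresses these checks declaratively, through built-in generic tests such as `unique`, `not_null`, and `relationships`, plus source freshness checks. To show what those checks actually assert, here is a plain-Python sketch over in-memory rows. The table shapes and thresholds are hypothetical, and this is not how dbt runs tests (dbt compiles them into SQL queries that return failing rows).

```python
from datetime import datetime, timedelta, timezone


def unique_failures(rows: list[dict], column: str) -> list:
    """Values that appear more than once (the idea behind a uniqueness test)."""
    seen, dupes = set(), set()
    for row in rows:
        value = row[column]
        if value in seen:
            dupes.add(value)
        seen.add(value)
    return sorted(dupes)


def orphan_keys(child: list[dict], fk: str, parent: list[dict], pk: str) -> list:
    """Child foreign keys with no matching parent (referential integrity)."""
    parent_keys = {row[pk] for row in parent}
    return sorted({row[fk] for row in child if row[fk] not in parent_keys})


def is_fresh(last_loaded_at: datetime, max_age: timedelta, now: datetime) -> bool:
    """True if the newest load is within the allowed age (freshness)."""
    return now - last_loaded_at <= max_age


customers = [{"customer_id": 1}, {"customer_id": 2}]
orders = [
    {"order_id": 10, "customer_id": 1},
    {"order_id": 11, "customer_id": 3},  # orphan: customer 3 does not exist
    {"order_id": 11, "customer_id": 2},  # duplicate order_id
]
now = datetime(2024, 5, 1, 12, tzinfo=timezone.utc)

print(unique_failures(orders, "order_id"))                          # [11]
print(orphan_keys(orders, "customer_id", customers, "customer_id"))  # [3]
print(is_fresh(now - timedelta(hours=2), timedelta(hours=6), now))   # True
```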

Our analysis of 86 data engineering firms shows that projects with a documented risk register and pre-defined mitigation plans are 40% less likely to experience a critical production incident in the first six months post-launch.

A Lightweight, Effective Governance Model

Governance should provide clarity, not bureaucracy. An effective framework defines accountability.

- Data Owner: A business leader (e.g., VP of Marketing) who is accountable for a specific data domain (e.g., customer data). They have final authority on access and usage policies.

- Data Steward: A domain expert, often from the business unit, responsible for the day-to-day management of data quality, definitions, and metadata for their domain.

- Data Custodian: The technical team (data engineers) responsible for the secure transport, storage, and processing of the data, implementing the policies defined by owners and stewards.

This structure clarifies decision-making. To enforce it, a robust change management plan is essential, ensuring that platform modifications are introduced in a controlled, predictable manner that protects the integrity of the data ecosystem.

Key Questions for Engineering Leaders

These are the most common questions from leaders managing data platform projects, with direct, evidence-based answers.

What is a realistic timeline for a data platform MVP?

For a mid-sized enterprise building a core data platform MVP on Snowflake or Databricks, a realistic timeline is four to six months. Any proposal promising a fully governed platform in under three months is either drastically under-scoped or being presented by an inexperienced team.

This four-to-six-month timeline allows for the delivery of tangible value, typically scoped to:

- Ingestion of 3-5 critical data sources.

- Development of a foundational data model.

- Delivery of 1-2 high-impact business intelligence dashboards or data marts.

A typical project plan is structured as follows:

- Months 1-1.5 (Discovery & Design): Lock down requirements, finalize architecture, and develop a detailed, milestone-based project plan.

- Months 1.5-6 (Iterative Build & Deploy): Execute in an agile rhythm, building data pipelines and models, and delivering functionality to stakeholders for continuous feedback.

How much should we budget for a data engineering consulting engagement?

According to our analysis of 86 data engineering firms, the blended hourly rate for a US-based team of experienced consultants ranges from $150 to $250.

For a standard four-month (16-week) MVP project with a lean team of three engineers, the consulting budget will fall between $288,000 and $480,000 (3 engineers × 16 weeks × 40 hours = 1,920 billable hours at $150 to $250 per hour). This figure is for consulting services only and does not include cloud consumption costs, which must be budgeted separately.
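A sketch for rerunning the estimate with your own team size, duration, or rates, assuming 40 billable hours per engineer per week:

```python
def consulting_budget(engineers: int, weeks: int, hourly_rate: float,
                      hours_per_week: int = 40) -> float:
    """Consulting cost only; cloud consumption must be budgeted separately."""
    return engineers * weeks * hours_per_week * hourly_rate


low = consulting_budget(engineers=3, weeks=16, hourly_rate=150)
high = consulting_budget(engineers=3, weeks=16, hourly_rate=250)
print(f"${low:,.0f} - ${high:,.0f}")  # $288,000 - $480,000
```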

Insist on a detailed Statement of Work (SOW) that ties payments to the delivery of specific milestones. This provides superior budget control compared to an open-ended time-and-materials contract.

Key Takeaway: Be highly skeptical of proposals significantly below this cost benchmark. In data engineering, rock-bottom rates are a direct indicator of junior talent. This creates short-term savings but incurs substantial long-term costs from architectural errors, technical debt, and extensive rework.


Data-driven market researcher with 20+ years in market research and 10+ years helping software agencies and IT organizations make evidence-based decisions. Former market research analyst at Aviva Investors and Credit Suisse.

Previously: Aviva Investors · Credit Suisse · Brainhub · 100Signals
