Data Catalog Tools Comparison for Engineering Leaders

Selecting a data catalog is not about finding the ‘best’ tool; it’s about selecting the right one for your data platform, governance model, and engineering team. This decision is foundational, directly impacting developer productivity, compliance adherence, and the speed at which your organization can leverage data. The core problem is choosing a tool that aligns with your primary business driver: accelerating developer velocity, enforcing strict compliance, or enabling broad self-service analytics.

A Decision Framework for Data Catalog Selection

The data catalog market is segmented into three distinct philosophies, each built around a primary business objective. A data catalog tools comparison reveals these divergent approaches, and understanding your primary objective is the first step toward a successful vendor evaluation.

- Modern Self-Service Catalogs: Tools like Atlan are engineered for the modern data stack—Snowflake, Databricks, and dbt. They prioritize developer experience, API-first integration, and agile collaboration.

- Traditional Governance Platforms: Solutions such as Collibra are designed for highly regulated environments. Their architecture is built for top-down control, featuring robust stewardship workflows and rigid policy enforcement for auditability.

- Active Metadata Platforms: Platforms like Alation use AI to analyze data usage patterns and automate curation. Their objective is to bridge the gap between technical data producers and business data consumers.

The most common failure pattern is selecting a tool based on a feature matrix. This leads to deploying a governance-first platform that throttles an agile data team, or a developer-centric tool that fails basic compliance audits.

Core Decision Drivers: An Evaluation Checklist

Frame your evaluation around a primary objective. Are you optimizing for engineering velocity, securing sensitive data, or empowering business analysts with self-service capabilities? Each goal dictates a different type of catalog.

| Primary Driver | Description | Best-Fit Catalog Type | Key Functionality |

|---|---|---|---|

| Developer Productivity | Accelerate data discovery and reduce time-to-insight for engineers and analysts on a modern data stack. | Modern Self-Service | Deep dbt/Airflow integration, column-level lineage, API-first architecture. |

| Strict Compliance | Enforce auditable data policies and manage risk in regulated industries like finance or healthcare. | Traditional Governance | Formal stewardship workflows, role-based access controls (RBAC), automated policy enforcement. |

| Broad User Adoption | Provide non-technical users with trusted, self-service access to data for analytics and reporting. | Active Metadata | AI-driven search, automated documentation, embedded collaboration tools. |

This framework provides the criteria for a direct, head-to-head comparison of leading vendors based on their ability to solve specific, high-value business problems.

The Data Catalog Market Landscape: Cloud-Native vs. Legacy

Selecting the wrong data catalog today ties your data strategy to a depreciating asset. A poor choice wastes budget and anchors your platform to obsolete technology as the market advances. The market is being reshaped by cloud-native architecture, active metadata, and AI-driven automation, creating a clear performance gap between modern and legacy solutions.

The primary force driving this transformation is the exponential growth of enterprise data, which is projected to reach 181 zettabytes by 2025. Manual data management is no longer feasible. In response, the global data catalog market is projected to expand from USD 1.15 billion in 2025 to USD 6.18 billion by 2033, a compound annual growth rate (CAGR) of 23.4%. This growth underscores the urgency of conducting a rigorous data catalog tools comparison. For a detailed market breakdown, you can explore the full market analysis and its drivers.

The Cloud-Native Imperative

Legacy, on-premise catalog solutions are obsolete. The market has decisively shifted to the cloud. Any vendor whose roadmap is not deeply integrated with Snowflake, Databricks, dbt, and major cloud providers (AWS, Azure, GCP) is a high-risk, long-term investment.

According to DataEngineeringCompanies.com’s analysis of 86 data engineering firms, projects using cloud-native data catalogs achieved initial rollout 35% faster than those implementing traditional tools. This is because cloud-native platforms are architected to integrate seamlessly with the modern data stack, eliminating costly and time-consuming custom integration work.

The greatest risk in vendor selection is choosing a “cloud-agnostic” tool that is a monolithic on-premise application ported to a cloud server. A true cloud-native catalog leverages the elasticity and native services of its host platform to deliver scale and performance that legacy architectures cannot match.

The Rise of Active Metadata and AI

The market has bifurcated into passive, static catalogs and active, intelligent platforms. Passive catalogs function as a simple inventory system for data assets—they document what exists and where.

Active catalogs are fundamentally different. They use AI and machine learning to automate metadata discovery, recommend data quality improvements, and orchestrate governance workflows. The competitive frontier is now defined by AI-driven features like automated column-level lineage, PII detection, and semantic search. These capabilities directly address the primary barrier to catalog adoption: the high cost and manual effort of curation. Selecting a tool without this intelligence is an investment in future technical debt.

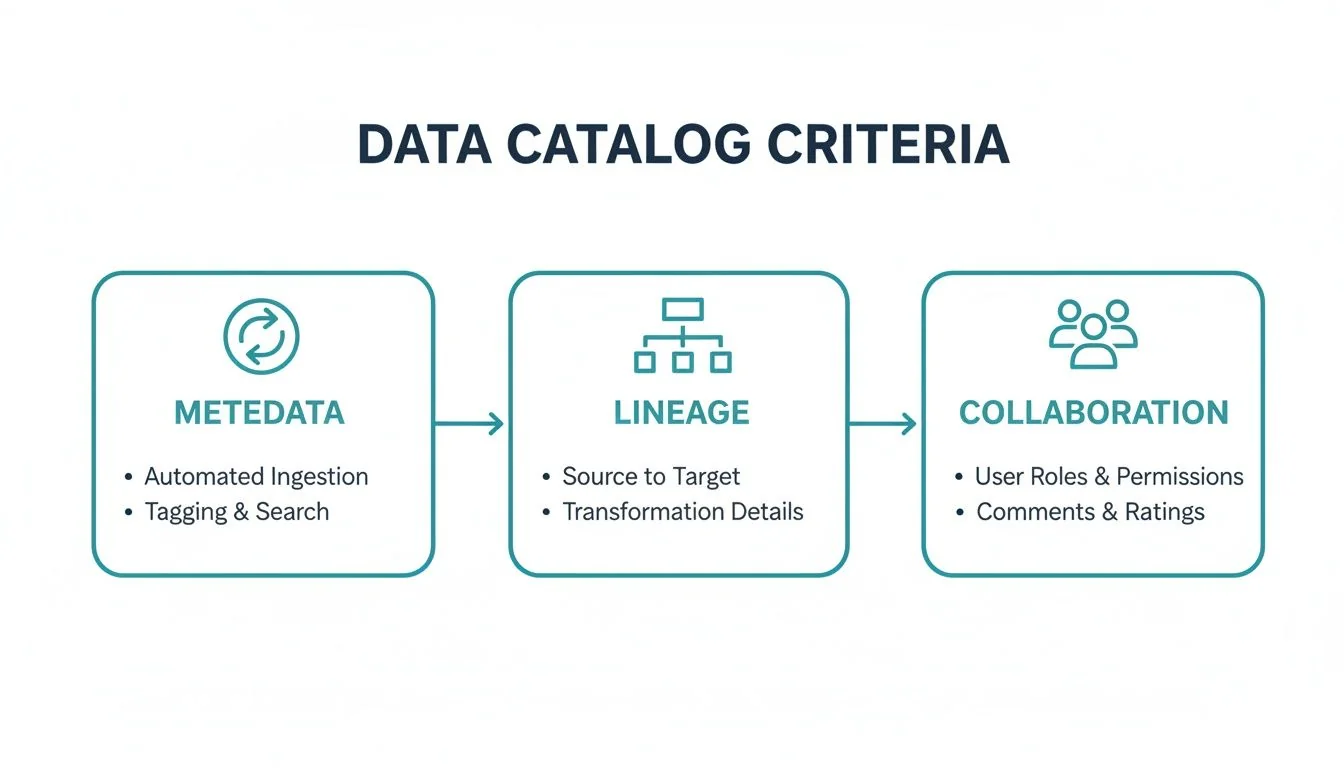

Core Evaluation Criteria For Enterprise Data Catalogs

Evaluating enterprise data catalogs requires moving beyond feature lists to assess each tool against the real-world operational challenges your data engineering team faces. This requires a consistent evaluation framework based on five non-negotiable technical pillars.

Metadata Management and Automation

A catalog’s core function is metadata management, but the key differentiator is automation. The platform must support automated, bi-directional metadata synchronization. This means it not only ingests metadata from your data stack but also pushes curated context, such as business definitions and quality scores, back into the tools your team uses daily.

Data Lineage and Impact Analysis

Visual lineage graphs are table stakes. The critical capability is column-level lineage tracking across complex transformations and disparate systems—from a source database, through dbt models, to a BI dashboard. For a more detailed breakdown, learn more about data lineage tools in our comparison guide.

A critical test for any data catalog is its impact analysis capability. If a data pipeline fails or a column is deprecated, the tool must instantly identify every downstream dashboard, report, and data product affected. A “no” answer indicates it fails a fundamental enterprise requirement.

Collaboration and Stewardship

A data catalog that functions as a read-only library is a failed investment. It must be an active hub for your data culture. The platform must enable data stewards to curate assets, certify datasets, and resolve issues directly within the tool. Look for persona-based workflows, integrated ticketing, and discussion threads tied to specific data assets.

Integration and Extensibility

Deep, native connectors are non-negotiable. Your catalog must integrate flawlessly with your core data stack, including platforms like Snowflake, Databricks, dbt, and Airflow. These integration points are crucial, especially for teams engaged in a Snowflake partner collaboration. A powerful and well-documented open API is essential for custom integrations and automating unique governance workflows.

Security and Governance Enforcement

A modern data catalog must actively enforce governance policies, not just document them. It must be an integrated component of your security posture.

Key capabilities include:

- Automated PII/sensitive data classification that can trigger specific access policies.

- Role-based access controls (RBAC) that propagate permissions from the catalog to the underlying data sources.

- Detailed audit logs that provide an immutable record of data access.

A Head-To-Head Data Catalog Tools Comparison

This section provides a direct comparison of Alation, Collibra, Atlan, and Informatica based on criteria relevant to engineering leaders. We analyze the core architectural philosophy of each platform, from Collibra’s top-down governance model to Atlan’s active metadata approach.

An effective data catalog must deliver on three fronts: automated metadata management, clear data lineage, and collaborative features that enhance productivity.

The optimal tool balances technical capabilities (metadata sync, lineage tracking) with the human element (empowering analysts and data stewards).

Architectural Philosophies and Core Strengths

The fundamental difference between these platforms is their architectural DNA, which dictates their ideal use case and target user.

-

Collibra & Informatica: These are governance platforms built for regulated industries like finance and healthcare, where top-down control and auditability are paramount. Their strength lies in managing complex stewardship workflows and enforcing data policies.

-

Alation: This tool pioneered the concept of “active” metadata, using AI to understand data usage patterns and automate curation. It is designed to bridge the gap between technical and business users, making it a strong choice for organizations focused on driving self-service analytics.

-

Atlan: Engineered for the modern data stack, Atlan is a developer-first, API-driven platform. It employs a bottom-up, collaborative model that aligns with the workflows of modern data teams. Deep integrations with tools like dbt, Snowflake, and Slack make it a leading contender for agile organizations prioritizing engineering velocity.

Informatica holds an estimated 18-22% global market share, while Alation follows at 14-18%, particularly in the self-service intelligence segment. While established vendors like IBM and Microsoft are significant players, modern platforms like Atlan—recognized as a leader in recent Gartner MQ and Forrester Wave reports—are gaining market share with rapid, weeks-long deployments.

Data Catalog Tool Comparison Matrix

This matrix provides a side-by-side evaluation of Alation, Collibra, Atlan, and Informatica across key criteria for enterprise adoption.

| Evaluation Criterion | Alation | Collibra | Atlan | Informatica |

|---|---|---|---|---|

| Metadata Management | AI-driven automation, strong behavioral intelligence | Governance-centric, manual curation focus | Active metadata, API-first, automated bots | Strong but part of a larger, monolithic platform |

| Data Lineage | Good cross-system lineage | Strong on governance lineage, less on BI tools | Excellent column-level lineage, deep BI/ETL integration | Excellent end-to-end, part of broader MDM suite |

| Collaboration & Search | Strong natural language search and wiki-like articles | Formalized stewardship workflows | Embedded in Slack/Jira, contextual discussions | Search is functional but less intuitive |

| Modern Stack Integration | Good, improving connectors | Fair, often requires custom development for new tools | Excellent, deep integrations with dbt, Fivetran, etc. | Lagging on modern tools, strong on traditional ETL |

| Deployment & Scalability | SaaS or self-hosted, scales well with usage | Primarily self-hosted/VPC, complex to deploy | SaaS-native, fast deployment, scales horizontally | Can be complex, often requires professional services |

| User Experience (UX) | Business-user friendly, intuitive | Governance-focused, can be complex for analysts | Developer-centric, designed for modern workflows | Traditional enterprise UI, functional but dated |

| Primary Use Case | Self-Service Analytics & Data Democratization | Enterprise Data Governance & Compliance | Agile Data Teams & Modern Data Stack | Master Data Management & Large-Scale Governance |

This matrix shows that the “best” tool is contingent on your organization’s primary objective—whether strict governance, agile development, or broad business adoption. No single tool excels in every category.

Atlan’s deep integration with tools like Slack, Jira, and dbt is a deliberate strategy to embed the data catalog into the daily workflow of a modern data team. This contrasts sharply with the siloed, governance-centric interfaces of traditional platforms.

To contextualize this evaluation within the broader data ecosystem, similar frameworks can be found in related tool comparisons. For example, guides like the 12 Best Data Pipeline Tools for Analytics offer a comparable assessment methodology for a different part of the data stack.

Use Case Deep Dive: Matching The Tool To The Job

A feature-by-feature comparison is insufficient. A successful evaluation matches a tool’s core architecture to your primary use case. The optimal tool is the one that aligns with your company’s operational reality. The key is to assess solutions against specific, real-world engineering challenges.

The following three scenarios represent common challenges for engineering leaders. For each, we recommend a tool based on its architecture, integrations, and governance model.

The Modern Data Stack Scale-Up

This scenario involves an agile team operating on the modern data stack: Snowflake, dbt, and modern BI tools. The primary objective is developer velocity. Any solution requiring heavy manual curation or rigid, top-down workflows is unacceptable.

- Top Recommendation: Atlan

- Rationale: Atlan is architected for this environment. Its API-first design and deep dbt integration (capturing both metadata and lineage) are native to the modern stack. It embeds collaboration within Slack and Jira, accelerating workflows for fast-moving teams. The platform’s active metadata capabilities automate discovery and documentation, reducing the manual effort that impedes momentum.

The Enterprise Governance Overhaul

This scenario involves a large enterprise in a regulated industry like finance or healthcare. The mission is auditable, top-down governance. The focus is on compliance, data quality assurance, and strict access controls.

- Top Recommendation: Collibra

- Rationale: Enterprise governance is Collibra’s core competency. The platform is built around formal stewardship workflows, complex policy enforcement, and establishing an authoritative business glossary. While less agile, it delivers the centralized control and detailed audit trails that regulators demand. Its primary strength is modeling and managing the complex governance structures of a large, mature organization.

For teams on Databricks, the evaluation is more nuanced. A Databricks Unity Catalog implementation is a critical consideration, as its native capabilities for core governance and lineage can significantly alter the business case for third-party tools.

The Federated Data Mesh Implementation

In this scenario, a large enterprise is decentralizing data ownership to individual business domains. The challenges are enabling data discovery across domains, ensuring interoperability, and maintaining consistent quality standards for “data products” without a central bottleneck.

- Top Recommendation: Alation

- Rationale: Alation excels in federated environments. It uses AI to analyze data usage, connecting disparate data sources and bridging knowledge gaps between domains. Its strong search and discovery capabilities, combined with a user-friendly interface, create a central “marketplace” for data products. This empowers domain teams to own their data while providing a unified discovery layer for the entire organization.

Red Flags and Next Steps in Your Evaluation

Selecting a data catalog is a significant long-term commitment. A wrong choice results in months or years of wasted effort. During the RFP and demo process, it is critical to identify vendor red flags. Vague answers on data lineage for your specific stack, intentionally confusing pricing models, or a closed-ecosystem approach with poor API documentation are all serious warnings of future operational friction.

If a vendor cannot demonstrate a live connection to your primary data sources, it is a deal-breaker. The same applies to pricing. If calculating the total cost of ownership requires multiple calls and a complex spreadsheet, the vendor is likely obscuring costs. Be wary of any vendor that resists providing a sandbox environment for hands-on evaluation by your team.

Your Procurement Checklist

Equip your procurement team with direct questions to move past the sales pitch and assess the vendor as a potential long-term partner.

Key Questions for Vendors:

- Lineage Specificity: “Demonstrate column-level lineage from our source system X, through our transformation tool Y, and into our BI tool Z. We need to see this working with our actual stack.”

- Pricing Transparency: “Provide the three-year, total cost of ownership for our projected usage. This must include all connector fees, support tiers, and user licenses. What is excluded from this quote?”

- Implementation Reality: “We require a reference call with a customer of similar size and tech stack. How long was their implementation timeline from contract signing to first value delivered?”

- API and Extensibility: “Provide the documentation for your public APIs. Show us examples of how other customers have extended your platform to handle custom metadata or build custom governance workflows.”

A common sales tactic is to make vague promises about “future roadmap support” for your core tools. If the vendor cannot demo a connector today, assume it does not exist for your evaluation.

Structuring Your Next Steps

The next step is to execute a focused proof of concept (POC) with your top two vendors. Select a single, high-value business problem to solve, such as tracing a critical KPI from its source to a C-level dashboard. This creates a clear, apples-to-apples benchmark for your final decision and provides a faster path to a definitive answer.

Frequently Asked Questions

Here are direct answers to common questions from engineering leaders evaluating data catalogs.

How Long Does It Take To Implement A Data Catalog?

Implementation timelines depend entirely on the tool and the scope of the initial project. Modern, SaaS-first catalogs like Atlan can be operational in weeks, especially when focused on high-impact sources like Snowflake or dbt. The goal is iterative value delivery.

In contrast, traditional, governance-first platforms like Collibra typically require a 6-12 month implementation cycle involving extensive workflow configuration and significant organizational change management.

The primary driver is scope. A pilot project targeting a single business-critical data source can deliver value quickly. Attempting to catalog an entire enterprise data lake from day one is a multi-quarter initiative.

What Is The Difference Between An Active And Passive Data Catalog?

A passive data catalog is a read-only inventory of data assets. It collects metadata to provide a static reference but does not interact with that information.

An active data catalog uses open APIs to create bi-directional metadata flow. It not only collects context but also pushes it back into the tools your team uses, such as BI dashboards and SQL editors. This enables features like displaying business definitions or data quality warnings directly within a user’s workflow. An active catalog also automates governance by using metadata to trigger actions, making it an operational hub within a modern data stack.

How Should We Measure The ROI Of A Data Catalog?

ROI measurement requires a combination of quantitative and qualitative metrics.

- Quantitative Metrics: The most direct metric is time saved in data discovery. A successful implementation will also reduce data-related support tickets and accelerate the onboarding of new data team members.

- Qualitative Metrics: Increased data trust, measured through internal surveys, is a key indicator. You will also see higher usage of certified and governed data assets. Ultimately, these factors lead to faster delivery of new data products and analytics projects.

A well-executed data catalog implementation should reduce data discovery time by 30-40% within the first year. Failure to achieve this indicates a need to re-evaluate your adoption strategy.

Navigating the complex landscape of data engineering vendors is a critical challenge. DataEngineeringCompanies.com provides transparent, data-driven rankings of top firms, complete with rate benchmarks, verified capabilities, and RFP tools to help you select the right partner with confidence. Find your ideal data engineering partner today.

Data-driven market researcher with 20+ years in market research and 10+ years helping software agencies and IT organizations make evidence-based decisions. Former market research analyst at Aviva Investors and Credit Suisse.

Previously: Aviva Investors · Credit Suisse · Brainhub · 100Signals

Top Data Engineering Partners

Vetted experts who can help you implement what you just read.

Related Analysis

A CTO's Guide to Databricks Unity Catalog Implementation

A proven guide for CTOs on Databricks Unity Catalog implementation. Get actionable frameworks for architecture, governance, migration, and CI/CD.

The CIO's Guide to Master Data Management Consulting

Discover how master data management consulting can unlock value, evaluate firms, and structure engagements for measurable data results.

A Pragmatic Guide to Data Engineering Project Management

Master data engineering project management with this playbook. Learn proven strategies for on-time delivery of complex data pipelines and cloud platforms.