Data Reliability Engineering A Guide for CTOs

If your data team spends its week babysitting broken pipelines, late tables, and executive dashboards that don’t reconcile, you don’t have a tooling problem. You have a reliability problem.

That distinction matters when you’re hiring a consulting partner. Plenty of firms can stand up Snowflake, Databricks, dbt, Airflow, BigQuery, or a lakehouse on AWS and call it a success. Far fewer can build a platform that stays trustworthy after the implementation team leaves.

I’ve seen both outcomes. The first implementation looked polished in demos and collapsed under production pressure because nobody owned freshness, incident response, or failure patterns. The second worked because we treated data reliability engineering like an operating model, not a feature. That’s the bar you should use when evaluating consultants for enterprise data engineering, cloud migration, and data pipeline architecture.

Your Data Team Is Drowning in Downtime

Data scientists and data engineers spend 30% or more of their time firefighting data downtime incidents instead of creating stakeholder value, according to Monte Carlo’s write-up on data reliability. That’s the number that should reset this conversation.

Most leadership teams still frame broken data as a quality issue. They’re wrong. A one-time data check doesn’t solve a recurring failure mode. If your Airflow DAG succeeds but the upstream schema changed, your dbt models still build garbage. If your Snowflake load finishes but your executive KPI arrives late, the business still experienced downtime.

What DRE actually fixes

Data reliability engineering is the discipline of making data systems predictably trustworthy over time. Not once. Every run.

That means a real DRE practice focuses on questions like these:

- Freshness: Is the critical table updated when the business needs it?

- Completeness: Did all expected records land?

- Schema stability: Did upstream producers break the contract?

- Incident handling: Who gets paged, how fast, and what happens next?

- Recovery: Can the team isolate and fix the issue without a war room?

Bad consulting quickly reveals its flaws. Weak firms implement dashboards and call them observability. They scatter ad hoc tests in dbt and call it governance. They hand you a runbook no one will use and call it operational readiness.

Practical rule: If the partner can’t define what “data downtime” means for your highest-value pipelines, they’re selling data quality theater.

Stop accepting reactive operations

The right posture is proactive. You instrument critical assets, set reliability expectations, and use monitoring that catches drift before a CFO catches it in a board deck.

For teams that want a practical view of how proactive monitoring works, this overview of automated anomaly detection is useful because it shows the shift from manual checking to system-driven detection.

The buying implication is simple. If you’re selecting a consultancy for Snowflake, Databricks, AWS, Azure, or BigQuery modernization, DRE belongs in the scope from day one. Not after migration. Not after the first outage. Day one.

DRE vs SRE vs Data Engineering Clarifying Roles

Teams often struggle with data reliability engineering because accountability is muddy. The data engineers think platform reliability belongs to SRE. The SRE team thinks data validity belongs to analytics engineering. Everyone is partly right, which means nobody is fully responsible.

The cleanest way to split responsibility

Altimetrik’s DRE overview puts the distinction clearly: Data Reliability Engineering is a specialized subset of Data Operations, while DataOps covers broader concerns such as cost management and access controls. DRE owns test automation, SLO creation, incident management, and root cause analysis.

Here’s the practical breakdown.

| Function | Primary concern | Typical ownership | Failure they care about |

|---|---|---|---|

| Data Engineering | Build and maintain pipelines, models, and platform integrations | Data engineers, analytics engineers | Jobs fail, transformations break, dependencies drift |

| SRE | Keep services and infrastructure available and performant | Platform engineering, SRE | Compute outage, orchestration instability, service latency |

| DRE | Keep data products reliable over time | DRE lead, senior data/platform team, or embedded reliability owners | Stale data, silent bad data, broken contracts, recurring incidents |

What this means in real delivery work

In a modern stack, the boundaries are straightforward:

- Data engineering builds the path. That includes ingestion, transformation, orchestration, and warehouse design.

- SRE keeps the runtime healthy. Think cluster stability, cloud resource posture, service uptime, and platform alerts.

- DRE protects the data promise. Freshness, completeness, quality over time, and repeatable recovery.

If your consultant says “our engineers do all of that,” press harder. Generalists can launch fast, but reliability failures usually hide in the handoffs.

The anti-pattern to avoid

The most common failure pattern looks like this:

- A consultancy builds ingestion into Snowflake or Databricks.

- They add a few dbt tests.

- They deploy Airflow or managed orchestration.

- They leave without reliability ownership, escalation rules, or data SLOs.

That isn’t a DRE practice. That’s a project handoff with optimism.

Data reliability engineering starts where implementation-only consulting usually stops.

Ask every partner where DRE lives after go-live. If they can’t point to named responsibilities across platform, pipelines, and incident response, expect the burden to fall back on your internal team.

The Core Principles of a DRE Practice

A real DRE practice is built on a small set of operating principles. None of them are glamorous. All of them matter.

Start with SLOs that the business actually feels

If your main revenue dashboard must be ready before executives start the day, state it as an explicit reliability target. Example: critical ETL jobs complete before the reporting window, or freshness stays under the threshold the business needs.

That’s what separates DRE from vague quality goals. Engineers need a target they can monitor and defend.

Test pipelines before production embarrasses you

Most firms over-index on production monitoring and under-invest in testing. That’s backwards.

Your partner should build:

- Schema tests in dbt or equivalent transformation layers

- Pipeline tests around ingestion and orchestration logic

- Contract checks between source systems and downstream consumers

- Deployment gates in CI/CD before changes hit production

If they only talk about dashboard validation, they’re inspecting outputs too late. Useful references on data integration best practices help here because reliable movement between systems starts with contracts, validation, and repeatable orchestration.

Observability needs lineage and context

Alerts without context are noise. Good DRE instrumentation tells you what broke, what changed, which downstream models are affected, and who owns the fix.

That’s why observability should cover:

- freshness

- volume anomalies

- schema drift

- lineage impact

- incident routing

If you want the shortest explanation of where this fits, this overview of https://dataengineeringcompanies.com/insights/what-is-data-observability/ is a solid companion.

The first useful alert answers two questions immediately. What failed, and who has to act?

Incident response has to be boring

That’s a compliment. Mature reliability work is procedural.

For every critical pipeline, your consultancy should define:

| Element | What good looks like |

|---|---|

| Trigger | Alert tied to a specific failure condition |

| Owner | Named responder, not a shared inbox |

| Triage | Clear first checks and dependency map |

| RCA | Root cause documented and reviewed |

| Prevention | Test, monitor, or design change added after the incident |

Use statistics where they improve operations

Reliability engineering isn’t guesswork. The Weibull distribution is a key tool for modeling failure rates with shape and scale parameters. In practice, that makes it useful for predicting pipeline failures and setting statistically grounded uptime targets such as 99.9%.

You don’t need a consultant who throws equations into slides. You need one who can use failure history to set sensible thresholds, distinguish noisy anomalies from real risk, and tune alerts so the team trusts them.

Assessing Your DRE Maturity Level

Most organizations think they’re further along than they are. They’ve got dbt tests, some Datadog dashboards, maybe a Slack alert for failed jobs. That usually puts them in the reactive middle, not in a mature state.

Use this model to judge your current posture before you hire anyone.

DRE maturity model

| Dimension | Level 0 Ad-Hoc | Level 1 Reactive | Level 2 Proactive | Level 3 Automated |

|---|---|---|---|---|

| Testing | Manual spot checks after issues surface | Basic tests on selected models | Standardized tests across critical pipelines | Test coverage embedded in CI/CD with enforced gates |

| Observability | Teams notice issues from users or dashboards | Alerts on failed jobs only | Monitoring covers freshness, volume, schema, and lineage on priority assets | Detection, triage context, and routing are automated |

| Incident response | No runbooks, heroics drive recovery | Tickets and chats coordinate response | Defined runbooks and RCA process for major failures | Incident workflows trigger ownership, escalation, and post-incident fixes automatically |

| Ownership | Responsibility is shared and unclear | Platform or data team informally handles incidents | Named owners exist for core datasets and pipelines | Ownership is mapped by asset, dependency, and business criticality |

| Governance | Policies exist on paper | Some documentation and access controls | Reliability standards align with governance processes | Governance, lineage, and operational controls are connected in one operating model |

| Consulting partner fit | Partner talks tools | Partner offers monitoring setup | Partner can define SLOs and incident process | Partner delivers reliability as an embedded operating capability |

How to use the model honestly

Don’t average yourself upward. A team with solid dashboards and weak incident ownership isn’t “advanced.” It’s uneven.

Score each domain separately and look for the bottleneck. In most organizations, one of these is the primary blocker:

- No named owner for critical datasets

- No service level expectations for freshness or completeness

- No repeatable RCA discipline

- No production-aware testing before releases

- No distinction between platform uptime and data reliability

What buyers get wrong

Leaders often choose a consultancy based on platform logos and migration references. That tells you whether they can build. It doesn’t tell you whether they can stabilize.

Buy for your weakest operational muscle, not for the prettiest architecture diagram.

If your team is Level 0 or Level 1 in incident response and ownership, you don’t need more generic implementation capacity. You need a partner that can establish operating discipline around Snowflake tasks, Databricks jobs, Airflow DAGs, dbt deployment workflows, and governance controls that survive change.

A Phased Roadmap for DRE Implementation

Don’t try to boil the ocean. The fastest way to kill data reliability engineering is to announce an enterprise program, buy a new observability tool, and leave ownership unresolved.

A phased rollout works because it forces hard prioritization.

Phase 1 Stabilize the critical path

Start with the assets that hurt the business when they fail. Usually that means revenue reporting, finance, customer operations, or model inputs for production systems.

Focus on these moves first:

- Pick critical pipelines: Name the jobs, tables, and dashboards that matter most.

- Set basic reliability expectations: Define what “on time” and “usable” mean for each one.

- Create runbooks: Give responders a first-step checklist for common failures.

- Instrument core alerts: Watch freshness, job failure, and schema changes on those assets.

This phase is where consulting partners earn trust. If they insist on broad platform rollout before identifying your critical path, they’re optimizing for billable scope.

Phase 2 Build repeatability into delivery

Once the main pain is visible, move upstream into engineering workflow.

That means:

| Area | What to implement |

|---|---|

| CI/CD | Tests and deployment gates for dbt, SQL, and pipeline changes |

| Contracts | Clear source-to-target expectations for schemas and critical fields |

| Ownership | Dataset and pipeline owners documented in the platform or catalog |

| RCA | Standard post-incident reviews tied to preventive actions |

This is the point where DRE stops being an operations patch and becomes part of how your data platform ships changes.

Phase 3 Add predictive and adaptive controls

Only after the basics are stable should you add advanced capabilities.

That includes anomaly detection, richer lineage-driven triage, and selective automation that can isolate or quarantine bad data before it spreads. In Databricks environments, that often means tightening reliability around streaming or model-serving dependencies. In Snowflake-centric stacks, it often means hardening orchestration and downstream consumption patterns.

What to demand from a consultancy in each phase

A good partner should produce tangible artifacts, not vague progress reports:

- Phase 1: asset inventory, alert map, runbooks, ownership matrix

- Phase 2: test strategy, CI/CD controls, SLO definitions, RCA template

- Phase 3: anomaly policy, lineage-based escalation, selective self-healing design

If they can’t tell you what they’ll leave behind after each phase, you’re buying activity, not capability.

Calculating the ROI of Data Reliability

Most DRE business cases are weak because they rely on trust language. Trust matters. It doesn’t secure budget.

The strongest case is operational. Faster detection and faster resolution free engineering time, reduce executive disruption, and cut the downstream rework that follows bad data.

Use a simple ROI frame

A 2025 Forrester report highlighted in Metaplane’s DRE discussion says firms adopting DRE observability see 25% to 40% faster issue resolution, yet only 15% of mid-market data teams report having ROI calculators to justify the investment.

That gap is your opening. Teams often feel the pain and still fail to quantify it.

Build the case from four buckets:

- Engineering time recovered: hours currently spent on triage, reruns, root cause hunts, and manual reconciliation

- Business interruption avoided: delayed reporting, broken downstream processes, executive escalations

- Rework reduced: repeated fixes for recurring incidents

- Consulting and tooling investment: implementation, enablement, and operating overhead

What to measure before the project starts

If you don’t baseline these, your consultancy can claim success without proving it.

Track:

| Metric | Why it matters |

|---|---|

| Time to detect | Shows whether monitoring is working |

| Time to resolve | Captures operational drag |

| Incident recurrence | Reveals whether RCA changes are sticking |

| Critical asset downtime | Ties reliability to business-facing systems |

| Engineer effort on firefighting | Shows reclaimed capacity |

One practical explainer on the economics of reliability is below.

The procurement question that matters

Ask every consulting firm this: How will you prove that reliability improved in operational terms, not just tool adoption?

Good answers include baseline measurement, post-implementation comparisons, and a short list of target outcomes tied to your most important pipelines. Bad answers focus on feature rollout, dashboard counts, or “best practice alignment.”

If the partner can’t model ROI with your current incident load, your CFO won’t trust the proposal and your engineering team shouldn’t either.

DRE Patterns for AI/ML and Modernization Projects

AI and modernization projects expose weak data reliability engineering faster than classic BI ever did. Batch reporting can limp along with manual checks. Feature pipelines, near-real-time scoring, and GenAI retrieval flows can’t.

The pressure point is usually change. Source contracts move, schemas evolve, and model inputs drift until the team sees degraded outcomes.

Pattern one Schema contracts for moving sources

Bigeye’s write-up on the DRE role notes that 40% of data incidents in AI projects stem from undetected schema drifts. That’s not a niche problem. It’s the default failure mode in fast-moving product environments.

For modernization projects, enforce schema contracts at ingestion boundaries. Especially when you’re migrating from legacy ETL into dbt, Airflow, Snowflake, Databricks, or BigQuery.

What to require:

- versioned schemas for producer teams

- explicit handling of new, missing, or type-changed fields

- quarantine paths for invalid records

- alerts tied to contract breaches, not just failed jobs

Pattern two Focus monitoring on high-impact assets

The same source argues for 80/20 monitoring on high-impact tables, and reports that this can reduce ML retraining costs by 35%. That matches what experienced teams learn the hard way. Monitoring everything equally is lazy architecture disguised as completeness.

For AI/ML workloads, your high-impact assets are usually:

- feature tables feeding production models

- labels and training sets

- event streams used for online inference

- governance-sensitive joins and enrichment data

Watch the assets that change business outcomes, not the assets that are easiest to instrument.

Pattern three Reliability gates in modernization programs

Cloud migrations often fail after cutover because firms treat reliability as a post-migration optimization. It isn’t.

If you’re moving from on-prem ETL or fragmented warehouses into a modern stack, put these gates into the program plan:

- Critical pipeline reliability criteria before go-live

- Source and target reconciliation rules

- Runbooks for rollback, replay, and incident routing

- Ownership mapping across engineering, analytics, and platform teams

That’s especially important in regulated sectors, where the technical migration is only half the job. The other half is proving that the new platform behaves consistently under production change.

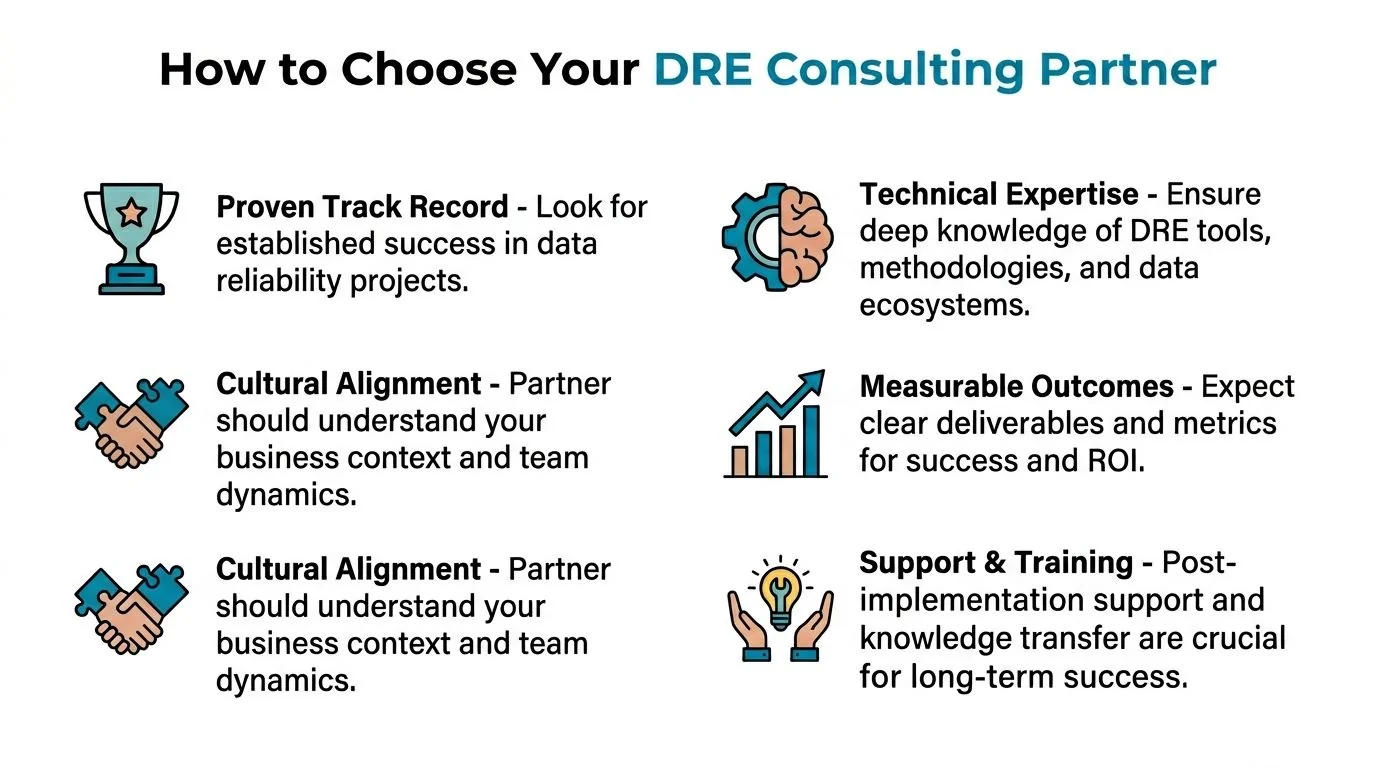

How to Vet a DRE-Capable Consulting Partner

Most consultancies now claim they do observability, governance, and reliability. Fine. Don’t ask whether they do DRE. Ask them to prove it.

The questions that expose substance fast

Use this checklist in your RFP or technical diligence sessions.

Delivery model

- Who owns data reliability after launch

- Which roles cover platform uptime, pipeline health, and data correctness

- What artifacts do you deliver besides code

Reliability design

- Show us a sample data SLO you’ve implemented

- How do you define and measure data downtime

- How do you distinguish job success from data success

Tooling and implementation

- How do you implement testing in dbt, orchestration, and CI/CD

- Which observability signals do you monitor on critical assets

- How do you map lineage from source breakage to downstream impact

Incident operations

- Show a sample runbook for a critical pipeline failure

- What does your RCA process look like

- How do you prevent repeat incidents after the fix

Knowledge transfer

- What training do you provide to internal owners

- What operating cadence do you recommend after handoff

- How do you avoid creating consultant dependency

The red flags

You should lower a firm’s score immediately if you hear any of these:

- “We’ll add tests later.” Reliability that starts after implementation usually never catches up.

- “Our platform partner handles that.” Tool vendors don’t own your operating model.

- “We monitor everything.” That usually means they haven’t prioritized business-critical assets.

- “We’ve done lots of migrations.” Migration experience isn’t proof of reliability engineering.

- “Your data engineers can absorb incident response.” They can, but then they stop building.

The right consulting partner leaves you with a stronger operating system, not just a working stack.

For teams formalizing vendor diligence, this checklist is worth using alongside your RFP process: https://dataengineeringcompanies.com/insights/data-engineering-due-diligence-checklist/

The next step is simple. Shortlist firms that can show DRE artifacts, not just platform certifications. If you’re comparing partners for Snowflake, Databricks, AWS, Azure, BigQuery, governance, or data pipeline architecture work, use DataEngineeringCompanies.com to screen providers by capability, delivery fit, and consulting scope before you start demos.

Researched & written by

Data-driven market researcher with 20+ years in market research and 10+ years helping software agencies and IT organizations make evidence-based decisions. Former market research analyst at Aviva Investors and Credit Suisse.

Previously: Aviva Investors · Credit Suisse · Brainhub · 100Signals

Vetted partners

Top Data Pipeline Partners

Vetted firms whose specialty matches this article.

More in Data Pipeline Architecture

Data Contracts in Data Engineering: A Guide for Engineering Leaders

Explore data contracts in data engineering to enforce agreements, prevent pipeline failures, and boost data reliability across Snowflake and Databricks.

What Is Data Fabric? A Practical Guide to Modern Data Architecture

Confused about what is data fabric? This guide explains its architecture, compares it to data mesh, and shows how it solves today's complex data challenges.

What Is Data Observability? A Practical Guide

Understand what is data observability and why it's crucial for reliable AI and analytics. This guide covers core pillars, KPIs, and implementation.