BigQuery vs Snowflake: An Engineering Leader's Decision Framework

The choice between BigQuery and Snowflake is not about feature lists; it’s a strategic decision rooted in platform architecture and its alignment with your core business workloads.

Snowflake is architected for performance isolation and multi-cloud deployment. This makes it the default choice for enterprises running predictable, high-concurrency business intelligence (BI) workloads where consistent query performance is non-negotiable.

BigQuery is built for serverless simplicity and deep integration within the Google Cloud Platform (GCP). It excels in environments focused on ad-hoc analytics, event-driven data processing, and machine learning, where operational overhead must be minimized.

The decision boils down to a core architectural trade-off: Do you require the granular control and performance isolation of dedicated compute clusters, or does the hands-off, auto-scaling nature of a serverless model better fit your operational goals and workload patterns? This guide provides the data points and frameworks to make that call.

Executive Decision Framework

This decision directly impacts total cost of ownership (TCO), operational load, and the productivity of your data teams. This is not a feature-by-feature bake-off but an alignment of platform architecture with your company’s cloud strategy, primary workloads, and financial model.

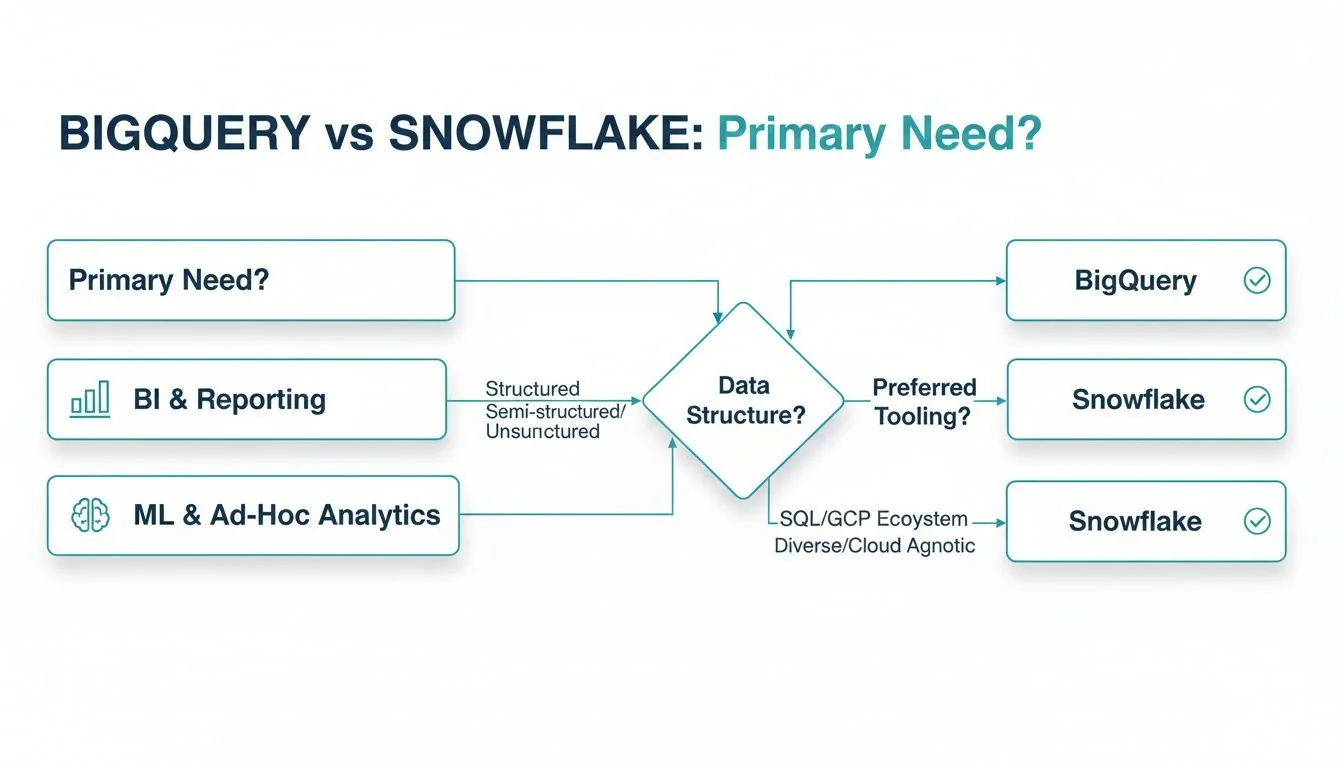

This decision tree outlines the two primary paths, guided by whether your priority is traditional BI or more exploratory, ad-hoc analytics and ML.

The flowchart shows a clear divergence. Organizations needing predictable BI performance and the flexibility of a multi-cloud or hybrid strategy will find Snowflake is the logical fit. Those standardized on GCP, with a heavy emphasis on ML and a need for powerful, simple ad-hoc querying, will gravitate toward BigQuery.

The following matrix maps specific organizational priorities to the platform that best serves them, acting as an evaluation checklist for leadership.

Executive Decision Matrix: BigQuery vs Snowflake

Use this matrix to align your organization’s strategic objectives with the correct data platform architecture.

| Decision Factor | Choose Snowflake If… | Choose BigQuery If… |
|---|---|---|
| Primary Cloud Strategy | You operate in a multi-cloud (AWS, Azure, GCP) or hybrid environment and require a platform that avoids vendor lock-in. | Your organization is standardized on Google Cloud Platform (GCP) and you require deep, native integration with its services (e.g., Vertex AI, Looker). |
| Key Workload | Your focus is high-concurrency enterprise BI and reporting, requiring consistent and predictable query performance for hundreds or thousands of users. | Your workloads are ad-hoc, exploratory, or ML-centric, benefiting from serverless auto-scaling and zero infrastructure management. |
| Cost Management Model | You prefer predictable costs tied to performance, using dedicated virtual warehouses that can be controlled and monitored against specific budgets. | You favor a pay-per-query (on-demand) model for unpredictable workloads, or you need capacity-based pricing (reserved slots under BigQuery editions, which replaced the legacy flat-rate plans) for predictable high volume. |
| Team Expertise & Ops | Your data engineering team is skilled in infrastructure management and requires fine-grained control over compute resources for performance tuning. | Your team’s priority is to eliminate infrastructure management to focus purely on analytics and data science outcomes. |
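To make the matrix easier to apply in a review meeting, it can be encoded as a simple checklist. The sketch below is hypothetical: the class and function names are illustrative, each row becomes a yes/no question where "yes" means the Snowflake column describes you, and all four rows are weighted equally, which a real evaluation probably would not do.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrgProfile:
    """Answers to the four matrix rows (True = the Snowflake column applies)."""
    multi_cloud: bool            # Primary Cloud Strategy: multi-cloud or hybrid?
    high_concurrency_bi: bool    # Key Workload: high-concurrency enterprise BI?
    warehouse_budgeting: bool    # Cost Management Model: budget via dedicated warehouses?
    wants_compute_control: bool  # Team Expertise & Ops: want fine-grained compute control?


def score_platforms(profile: OrgProfile) -> dict[str, int]:
    """Count how many matrix rows favor each platform, weighting every row equally."""
    answers = [
        profile.multi_cloud,
        profile.high_concurrency_bi,
        profile.warehouse_budgeting,
        profile.wants_compute_control,
    ]
    snowflake_votes = sum(answers)  # each True counts as 1
    return {"Snowflake": snowflake_votes, "BigQuery": len(answers) - snowflake_votes}


def recommend(profile: OrgProfile) -> str:
    """Return the platform with more matching rows, or flag a tie."""
    scores = score_platforms(profile)
    if scores["Snowflake"] == scores["BigQuery"]:
        return "Tie: benchmark both with a proof of concept"
    return max(scores, key=scores.get)


# Example: a GCP-standardized team focused on ad-hoc analytics and ML.
gcp_ml_team = OrgProfile(False, False, False, False)
print(score_platforms(gcp_ml_team), recommend(gcp_ml_team))
```

A tie is reported explicitly rather than broken arbitrarily, since a split result usually means the workload mix deserves a hands-on benchmark.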

This framework provides a solid foundation for a decision that transcends a feature comparison. It focuses on selecting the platform that aligns with your team’s operational model and your business’s strategic goals.

Comparing Core Architecture and Performance

The fundamental architectural difference between BigQuery and Snowflake dictates their performance, concurrency, and operational models. The decision is a direct trade-off: the operational simplicity of a serverless approach versus the granular control of dedicated compute resources.

Snowflake is built on a multi-cluster, shared-data architecture. Storage and compute are fully decoupled, allowing independent scaling. You provision compute resources, called virtual warehouses, for specific teams or workloads. This isolation is critical for enterprise BI, as it prevents a massive data science query from impacting the performance of executive dashboards.

BigQuery is a serverless, multi-tenant architecture derived from Google’s internal Dremel technology. In its default on-demand model it abstracts away resource management and scaling: there are no virtual warehouses to configure or manage. You execute your query, and BigQuery allocates the necessary compute (slots) from its massive, shared resource pool.

Performance and Concurrency Implications

This architectural divergence results in distinct performance profiles. With Snowflake, dedicated virtual warehouses deliver predictable performance and high concurrency. Because each workload runs on its own warehouse, BI queries do not compete with other teams’ workloads for compute, and multi-cluster warehouses can add clusters as the number of concurrent users grows, keeping response times consistent even for thousands of users. This reliability is a key reason Snowflake is a dominant choice for enterprise-wide BI deployments.

BigQuery’s serverless architecture excels with bursty, unpredictable workloads. Data science and ad-hoc analytics teams benefit from its auto-scaling without any infrastructure provisioning. The trade-off is that on-demand queries draw from a shared resource pool, subject to per-project slot limits, so query times are less predictable under heavy load than they are on a dedicated warehouse.

Key Takeaway: Snowflake offers performance isolation via manageable compute clusters, making it ideal for predictable enterprise BI. BigQuery provides effortless, hands-off scaling from a shared resource pool, which is perfect for unpredictable, exploratory analytics.
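The isolation argument can be illustrated with a deliberately simplified fair-share model, in which a pool’s capacity is split evenly among the queries running in it. This is not how either scheduler actually works: the function name, the slot-second unit, and the even split are all assumptions, and the model ignores the elastic scaling that both platforms provide.

```python
def bi_query_latency_s(bi_work: float, capacity: float, bi_queries: int,
                       ds_queries: int, isolated: bool) -> float:
    """Seconds for one BI query under a toy fair-share scheduler.

    bi_work: compute one BI query needs, in slot-seconds (hypothetical unit)
    capacity: slots available to the pool the BI queries run in
    bi_queries: concurrent BI (dashboard) queries
    ds_queries: concurrent data-science queries
    isolated: True if BI runs on its own warehouse, so ds_queries never share it
    """
    competing = bi_queries if isolated else bi_queries + ds_queries
    # Each query gets capacity / competing slots, so latency = work * competing / capacity.
    return bi_work * competing / capacity


for ds in (0, 20, 40):
    shared = bi_query_latency_s(10, 100, 10, ds, isolated=False)
    dedicated = bi_query_latency_s(10, 100, 10, ds, isolated=True)
    print(f"{ds:>2} data-science queries: shared pool {shared:.1f}s, dedicated {dedicated:.1f}s")
```

With isolation, the BI query stays at 1.0 s regardless of data-science load; in the shared pool it rises to 3.0 s and then 5.0 s as competing queries are added.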

Regardless of platform, a deep understanding of data warehouse architecture is essential. For BigQuery specifically, schema design, particularly partitioning and clustering, is among the most important levers for controlling both performance and cost under on-demand pricing, because it determines how many bytes each query scans.
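Because on-demand queries are billed by bytes scanned, the effect of schema design can be estimated with simple arithmetic. The sketch assumes a hypothetical events table with 365 daily partitions, made-up per-column sizes, and an assumed price of $6.25 per TiB; it models only columnar reads and partition pruning, not clustering. For real queries, a BigQuery dry run reports the estimated bytes processed before anything is billed.

```python
GIB = 2**30
TIB = 2**40  # on-demand queries are billed per TiB scanned


def bytes_scanned(column_gib_per_partition: dict[str, int], total_partitions: int,
                  selected_columns: list[str], partitions_matched: int,
                  partitioned: bool) -> int:
    """Estimate the bytes a query reads from a columnar, date-partitioned table.

    Columnar storage: only the referenced columns are read.
    Partition pruning: if the table is partitioned and the query filters on the
    partitioning column, only the matching partitions are read.
    """
    per_partition_gib = sum(column_gib_per_partition[c] for c in selected_columns)
    partitions_read = partitions_matched if partitioned else total_partitions
    return per_partition_gib * partitions_read * GIB


def on_demand_usd(scanned: int, usd_per_tib: float) -> float:
    """On-demand compute cost for a given number of scanned bytes."""
    return scanned / TIB * usd_per_tib


# Hypothetical table: 365 daily partitions; sizes are GiB per partition per column.
events = {"user_id": 2, "event_type": 1, "payload": 20}
USD_PER_TIB = 6.25  # assumed list price; check current pricing for your region

last_week = bytes_scanned(events, 365, ["user_id", "event_type"], 7, partitioned=True)
full_scan = bytes_scanned(events, 365, ["user_id", "event_type"], 7, partitioned=False)
select_star = bytes_scanned(events, 365, list(events), 7, partitioned=False)

for label, scanned in [("pruned", last_week), ("unpartitioned", full_scan),
                       ("SELECT *", select_star)]:
    print(f"{label:>13}: {scanned / GIB:,.0f} GiB, ${on_demand_usd(scanned, USD_PER_TIB):,.2f}")
```

In this example, selecting two columns and filtering to seven partitions reads 21 GiB instead of 1,095 GiB, and avoiding `SELECT *` matters even more on wide tables.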

A Practical Breakdown of Total Cost of Ownership

A realistic Total Cost of Ownership (TCO) analysis is critical. The primary difference in the BigQuery vs. Snowflake TCO debate stems from their pricing models for compute resources.

Snowflake uses a credit-based system for compute, billed separately from storage. Google BigQuery offers two compute models: on-demand pricing (pay per terabyte scanned) or capacity-based pricing, where you reserve compute capacity measured in “slots” through BigQuery editions (which replaced the legacy flat-rate plans). Let’s analyze two common business scenarios to see how these models perform.
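The three compute billing models can be written as small cost functions. This is a minimal sketch under stated assumptions: the credit, per-TiB, and slot-hour prices are caller-supplied because they vary by edition, region, and contract; the warehouse-size table covers standard warehouses only; and the on-demand rounding follows our reading of BigQuery’s billing rules (bytes rounded up to the MB, with a 10 MB minimum per table referenced), treating MB as MiB.

```python
# Snowflake standard warehouses: credits per hour double with each size step.
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8, "XL": 16, "2XL": 32, "3XL": 64, "4XL": 128}
MIB = 2**20
TIB = 2**40


def snowflake_run_usd(size: str, seconds_running: float, usd_per_credit: float) -> float:
    """One warehouse run from resume to suspend: per-second billing, 60-second minimum."""
    billed_seconds = max(seconds_running, 60)
    return CREDITS_PER_HOUR[size] * billed_seconds / 3600 * usd_per_credit


def bigquery_on_demand_usd(bytes_per_table: list[int], usd_per_tib: float) -> float:
    """One on-demand query: bytes rounded up to the MiB, 10 MiB minimum per table (assumed)."""
    billed = sum(max(-(-b // MIB), 10) * MIB for b in bytes_per_table)  # ceil division
    return billed / TIB * usd_per_tib


def bigquery_capacity_usd(slots: int, hours: float, usd_per_slot_hour: float) -> float:
    """Capacity pricing: reserved slots billed per slot-hour, independent of bytes scanned."""
    return slots * hours * usd_per_slot_hour


# Hypothetical prices; substitute your contracted rates.
print(snowflake_run_usd("M", 90, usd_per_credit=3.0))
print(bigquery_on_demand_usd([500 * 2**30, 4 * 2**20], usd_per_tib=6.25))
print(bigquery_capacity_usd(100, 24, usd_per_slot_hour=0.06))
```

The second call shows the per-table minimum at work: in this sketch, the 4 MiB lookup table is billed as 10 MiB.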

For a mid-sized company with unpredictable, bursty data science workloads, BigQuery’s on-demand model is often more cost-effective. These teams may run compute-intensive queries, but not continuously. A pay-per-query approach aligns perfectly with this usage pattern.

In contrast, a large enterprise supporting consistent, high-concurrency BI dashboarding will find Snowflake’s model delivers a superior cost-to-performance ratio. By provisioning a dedicated virtual warehouse, you secure predictable performance at a predictable hourly cost, avoiding charges that climb with every query’s data volume.

Modeling Real-World Workloads

Let’s apply concrete data points to these scenarios.

Scenario 1: Bursty Ad-Hoc Analytics

- Workload Profile: A data science team runs 10-15 complex, exploratory queries daily. These queries scan multiple terabytes of data in short, intense bursts.

- Why BigQuery Wins Here: The on-demand pricing directly mirrors this pattern. You pay only for queries executed, eliminating costs for idle compute. This is highly efficient for exploratory work.

- The Snowflake Challenge: To handle peak demand, you would need to provision a large virtual warehouse that would otherwise sit idle for most of the day, incurring costs. Auto-suspend and auto-resume reduce idle time, but the settings must be tuned for each workload, and each resume is billed for at least 60 seconds, which adds operational overhead.

For bursty, ad-hoc query workloads, Google BigQuery delivers superior cost-efficiency because its on-demand pricing charges only for the queries that actually run. In cost models of lighter, intermittent workloads, BigQuery can be 20-30% more cost-effective than a continuously running Snowflake warehouse.
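Scenario 1 can be modeled with a short function. The inputs in the example (15 queries a day, 2 TiB scanned per query, three minutes of runtime on a Large warehouse, $6.25 per TiB, $3 per credit) are hypothetical placeholders, not benchmarks. The function reports the always-on warehouse the scenario describes alongside an auto-suspend configuration, because the Snowflake figure depends heavily on how the warehouse is configured.

```python
def bursty_daily_usd(queries_per_day: int, tib_per_query: float, runtime_s: float,
                     credits_per_hour: float, usd_per_tib: float, usd_per_credit: float,
                     auto_suspend_s: float = 60) -> dict[str, float]:
    """Daily compute cost of a bursty workload under three hypothetical setups.

    Storage, result caching, and discounts are ignored; all prices are caller-supplied.
    """
    bigquery = queries_per_day * tib_per_query * usd_per_tib
    # A warehouse left running all day bills whether or not queries arrive.
    always_on = 24 * credits_per_hour * usd_per_credit
    # Assume queries are far enough apart that each one resumes the warehouse, runs,
    # then idles for auto_suspend_s before suspending (60 s minimum per resume).
    billed_s = max(runtime_s + auto_suspend_s, 60)
    auto_suspend = queries_per_day * billed_s / 3600 * credits_per_hour * usd_per_credit
    return {"bigquery_on_demand": bigquery,
            "snowflake_always_on": always_on,
            "snowflake_auto_suspend": auto_suspend}


# Hypothetical inputs: 15 queries/day, 2 TiB each, 3 minutes on a Large (8 credits/hour).
print(bursty_daily_usd(15, 2.0, 180, 8, usd_per_tib=6.25, usd_per_credit=3.0))
```

Replace the placeholders with figures from your own query history; where the costs cross over depends mainly on bytes scanned per query versus warehouse runtime.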

Scenario 2: Consistent Enterprise BI

- Workload Profile: A large corporation with thousands of users accessing BI dashboards 24/7. These dashboards are refreshed constantly, powered by hundreds of concurrent queries.

- Why Snowflake Wins Here: A properly sized virtual warehouse provides predictable costs and consistent performance. You pay a fixed number of credits per hour for a known quantity of compute, which suits this stable, high-volume workload.

- The BigQuery Challenge: On-demand pricing would become prohibitively expensive at this query volume. BigQuery’s capacity-based pricing (reserved slots under BigQuery editions) is the correct solution here, but reserving enough slots for this load is a significant investment that requires careful capacity planning.
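The Scenario 2 economics follow from how each model scales with query count. In this hypothetical sketch, on-demand cost grows with every dashboard refresh, while reserved slots and a 24/7 warehouse cost the same regardless of refresh volume. The dashboard count, scan size, slot count, and all prices are placeholders, and the function does not check whether the reserved capacity can actually keep up with the load.

```python
def dashboard_monthly_usd(dashboards: int, refresh_minutes: int, tib_per_refresh: float,
                          usd_per_tib: float, reserved_slots: int, usd_per_slot_hour: float,
                          credits_per_hour: float, usd_per_credit: float,
                          days: int = 30) -> dict[str, float]:
    """Monthly compute cost of always-on dashboards under three hypothetical setups.

    Assumes reserved slots and the warehouse are large enough for every refresh
    (not checked) and ignores BigQuery result caching, which avoids charges for
    repeated queries when the underlying data has not changed.
    """
    refreshes = dashboards * (24 * 60 // refresh_minutes) * days
    hours = 24 * days
    return {
        "refreshes": refreshes,
        # Grows linearly with refresh count: every refresh is a billed scan.
        "bigquery_on_demand": refreshes * tib_per_refresh * usd_per_tib,
        # Flat: depends on reserved capacity and hours, not on query count.
        "bigquery_capacity": reserved_slots * hours * usd_per_slot_hour,
        "snowflake_24x7": credits_per_hour * hours * usd_per_credit,
    }


# Hypothetical: 100 dashboards refreshing every 5 minutes, 0.01 TiB scanned per refresh.
costs = dashboard_monthly_usd(100, 5, 0.01, usd_per_tib=6.25,
                              reserved_slots=500, usd_per_slot_hour=0.06,
                              credits_per_hour=8, usd_per_credit=3.0)
for name, value in costs.items():
    print(f"{name:>20}: {value:,.0f}")
```

Halving the refresh interval doubles the on-demand line and leaves the other two unchanged, which is the core of the argument for capacity-based or warehouse pricing at steady, high volume.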

Operational costs are also a factor. With Snowflake, active cost management is required; for a detailed guide, see our analysis on effective Snowflake cost optimization. BigQuery’s main “hidden” costs are data egress fees when moving data outside GCP and the size of the slot reservations or commitments that capacity-based pricing requires.

Ecosystem & Vendor Lock-In: A Strategic Crossroads

Choosing a data platform is an investment in its entire ecosystem. For Enterprise Architects, the BigQuery vs. Snowflake decision is a strategic choice about operational flexibility versus the long-term risk of vendor lock-in.

Snowflake’s primary strategic advantage is its multi-cloud native architecture. It runs consistently across AWS, Azure, and GCP, providing the freedom to choose your cloud provider or implement a multi-cloud strategy without being tied to a single vendor’s stack. This flexibility is a powerful risk mitigation tool.

Best-of-Breed Freedom vs. All-in-One Efficiency

The decision hinges on whether your organization prioritizes a best-of-breed, multi-cloud approach or the tight integration of a single-vendor ecosystem.

- Snowflake: The Multi-Cloud Champion: Snowflake’s partner network is extensive and cloud-agnostic. It has mature, deep integrations with leading BI tools like Tableau and Power BI, regardless of the underlying cloud. According to DataEngineeringCompanies.com’s analysis of 86 data engineering firms, specialists in multi-cloud deployments consistently recommend Snowflake for its interoperability.

- BigQuery: The GCP Powerhouse: Google BigQuery is the core of the Google Cloud data stack. Its native, out-of-the-box integration with services like Vertex AI, Looker, and Google Cloud Storage creates a highly efficient, low-friction environment. If your organization is standardized on GCP, this integrated ecosystem provides performance and productivity gains that are difficult to replicate.

Key Insight: The choice is strategic. Snowflake provides an insurance policy against vendor lock-in via its multi-cloud design. BigQuery offers unmatched efficiency and integration for organizations committed to the Google Cloud Platform. This trade-off between flexibility and a streamlined stack is a critical factor in the BigQuery vs. Snowflake decision.

Your existing cloud strategy and tolerance for vendor dependency are the deciding factors. An enterprise with a multi-cloud strategy will value Snowflake’s flexibility. A GCP-centric organization will realize far greater value from the deep, native integrations that BigQuery offers.

Enterprise Data Governance and Security

In regulated industries like finance and healthcare, data governance and security are not features; they are foundational requirements. The BigQuery vs. Snowflake decision here hinges on which platform’s security model aligns with your compliance framework and operational capacity. Both platforms offer enterprise-grade security, but through different implementation models.

Snowflake is known for its mature, built-in governance features. It provides granular, object-level control managed directly within the data warehouse.

- Role-Based Access Control (RBAC): Snowflake provides a sophisticated hierarchy for user permissions, essential for managing large, complex teams.

- Column-Level Security: You can restrict access to specific columns within a table, for instance, to prevent analysts from viewing personally identifiable information (PII).

- Dynamic Data Masking: This feature redacts sensitive data on-the-fly based on user roles, eliminating the need to create and manage separate, masked data copies.

For many organizations, these self-contained features are more intuitive and faster to implement out-of-the-box.
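The two Snowflake ideas above, inherited role privileges and role-dependent masking, can be illustrated in a few lines of Python. This only mimics the logic: in Snowflake both are defined in SQL (roles granted to other roles, and masking policies attached to columns that check the querying role at run time). The role names and the `UNMASK_PII` privilege are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Role:
    """A role with direct privileges plus roles granted to it (hypothetical model)."""
    name: str
    privileges: set[str] = field(default_factory=set)
    granted_roles: list["Role"] = field(default_factory=list)

    def effective_privileges(self) -> set[str]:
        """Own privileges plus everything inherited through granted roles (assumes no cycles)."""
        privs = set(self.privileges)
        for role in self.granted_roles:
            privs |= role.effective_privileges()
        return privs


def mask_email(value: str, role: Role) -> str:
    """Masking-policy logic: roles without UNMASK_PII see the local part redacted."""
    if "UNMASK_PII" in role.effective_privileges():
        return value
    local, at, domain = value.partition("@")
    return "*" * len(local) + at + domain


analyst = Role("ANALYST", {"SELECT:CUSTOMERS"})
pii_reader = Role("PII_READER", {"UNMASK_PII"})
compliance = Role("COMPLIANCE", granted_roles=[analyst, pii_reader])

print(mask_email("ana@example.com", analyst))     # ***@example.com
print(mask_email("ana@example.com", compliance))  # ana@example.com
```

The same stored value is returned masked or unmasked depending only on the querying role, which is why no separate masked copy of the data is needed.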

Implementation and Ecosystem Differences

BigQuery anchors its security in the unified Google Cloud Identity and Access Management (IAM) framework. This is a significant advantage for organizations standardized on GCP, as it provides a single, consistent model for managing permissions across all services.

BigQuery achieves its comprehensive security posture through deep integration with other GCP services.

- VPC Service Controls: This feature creates a secure perimeter around projects to prevent data exfiltration, a primary concern for any CISO.

- Google Cloud Dataplex: This intelligent data fabric centralizes data discovery, metadata management, and policy enforcement across data in BigQuery, Google Cloud Storage, and other sources.

Key Insight: The difference is the implementation model. Snowflake offers a self-contained, data-centric governance toolkit that is often faster to deploy. BigQuery leverages the broader, deeply integrated GCP ecosystem. This is extremely powerful but requires configuring multiple services to achieve the same fine-grained control that Snowflake provides natively.

Adherence to modern data security concepts is non-negotiable. Your decision will depend on whether you prefer Snowflake’s all-in-one governance toolbox or BigQuery’s integrated, ecosystem-wide security architecture.

Frequently Asked Questions

When engineering leaders compare BigQuery and Snowflake, several key questions consistently arise. Here are direct, evidence-based answers.

Is Snowflake Always More Expensive Than BigQuery?

No. Total cost of ownership (TCO) is entirely dependent on your specific workloads.

Snowflake is often more cost-effective for predictable, high-concurrency BI workloads. A fine-tuned virtual warehouse provides a fixed cost to serve many users efficiently. Conversely, BigQuery’s on-demand model is frequently cheaper for sporadic, heavy ad-hoc queries, as you only pay for the data processed at the moment of the query.

A dashboard that refreshes every five minutes for thousands of users will be more economical on a correctly sized Snowflake warehouse than it would be running thousands of individual on-demand BigQuery scans.

How Difficult Is It to Migrate Between Platforms?

Migrating between BigQuery and Snowflake is a major engineering project, not a simple “lift and shift.” The process is complex and requires specialized expertise.

Key challenges include:

- SQL Dialect Translation: While both support ANSI-standard SQL, each has proprietary functions and syntax, so existing queries and scripts must be translated, much of it by hand.

- Proprietary Features: Code built on platform-specific features (e.g., Snowflake Streams, BigQuery ML) requires complete re-architecture.

- Data Egress Costs: Moving petabytes of data out of a cloud provider incurs significant egress fees, which must be factored into the migration budget.
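To see why translation is rarely mechanical, consider `DATE_TRUNC`: BigQuery takes the expression first and the date part second, while Snowflake takes the date part first. The hypothetical helper below rewrites only the simplest form of this call with a regular expression; it is a sketch, not a migration tool, and it deliberately leaves anything more complex, such as nested calls or `WEEK(MONDAY)`, for manual review.

```python
import re

# BigQuery:  DATE_TRUNC(order_date, MONTH)
# Snowflake: DATE_TRUNC('MONTH', order_date)
_BQ_DATE_TRUNC = re.compile(
    r"DATE_TRUNC\(\s*([^,()]+?)\s*,\s*([A-Z]+)\s*\)", re.IGNORECASE
)


def translate_date_trunc(bigquery_sql: str) -> str:
    """Swap BigQuery DATE_TRUNC argument order into Snowflake's form.

    Only handles a simple first argument (no commas or parentheses) and a bare
    date part; anything else is left unchanged. Apply once, to BigQuery SQL only.
    """
    return _BQ_DATE_TRUNC.sub(
        lambda m: f"DATE_TRUNC('{m.group(2).upper()}', {m.group(1)})", bigquery_sql
    )


print(translate_date_trunc("SELECT DATE_TRUNC(order_date, MONTH) AS m FROM orders"))
print(translate_date_trunc("SELECT DATE_TRUNC(DATE(ts), MONTH) FROM events"))  # unchanged
```

Even this one function needs special cases, and a real codebase contains hundreds of such differences, which is why dialect translation dominates many migration plans.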

Given this complexity, engaging a data engineering consulting partner is standard practice to manage the migration and mitigate project risk.

Which Platform Is Better for Real-Time Analytics?

For true real-time use cases, BigQuery has a native advantage in simplicity and capability. It supports native streaming ingestion, including the BigQuery Storage Write API, which makes data available for query with very low latency without requiring micro-batching.

Key Insight: While Snowflake’s Snowpipe and Dynamic Tables deliver near-real-time performance, BigQuery’s serverless, fully managed streaming API provides a more direct and lower-latency path for building real-time analytics pipelines.
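The latency difference between streaming and micro-batching comes down to how long a row waits before ingestion starts. Under the simplifying assumption that events arrive uniformly over time, the sketch below computes average and worst-case freshness lag; the function name and the ingest latencies in the example are hypothetical, not measured figures for BigQuery or Snowflake.

```python
def freshness_lag_s(batch_interval_s: float, ingest_latency_s: float) -> tuple[float, float]:
    """Average and worst-case seconds from an event's arrival until it is queryable.

    batch_interval_s = 0 models row-level streaming; > 0 models micro-batching,
    where a row waits for the next batch before ingestion starts. With uniform
    arrivals, the average wait is half an interval and the worst case a full one.
    """
    average = batch_interval_s / 2 + ingest_latency_s
    worst_case = batch_interval_s + ingest_latency_s
    return average, worst_case


# Hypothetical figures, not measured latencies for either product.
print("streaming:     ", freshness_lag_s(0, 2))
print("1-min batches: ", freshness_lag_s(60, 20))
```

The batch interval adds directly to the worst-case lag, so shrinking it helps, but only removing the batch wait entirely brings freshness down to the ingest latency alone.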

Next Steps: Platform Selection and Implementation

The choice between BigQuery and Snowflake is a strategic decision based on your workload patterns, cloud strategy, and team skills.

Use this final checklist to guide your decision:

- Workloads and Concurrency:

  - Lean towards Snowflake if: Your primary use case is enterprise BI with high concurrency, requiring predictable query performance and strict workload isolation.

  - Lean towards BigQuery if: Your workloads are unpredictable, event-driven, or involve heavy ML tasks where a serverless, auto-scaling model provides a significant operational advantage.

- Cloud Strategy and Cost Model:

  - Lean towards Snowflake if: You are implementing a multi-cloud strategy and need to avoid vendor lock-in. Your financial model benefits from predictable costs tied directly to compute performance.

  - Lean towards BigQuery if: You are a GCP-native organization. The operational simplicity and pay-per-query model of a serverless platform align with your goals, especially for sporadic, large-scale queries.

Selecting the platform is the first step. Execution is the challenge. According to our analysis of 86 data engineering firms, organizations that engage an expert implementation partner accelerate project timelines by 30-40% and avoid the costly rework that plagues many data platform migrations.

A qualified partner validates your architecture, models your costs accurately, and builds the governance foundation to ensure your data warehouse delivers long-term value. They de-risk the migration, manage the implementation, and upskill your team, turning a complex technical decision into a clear business win.

Finding the right implementation partner is just as critical as choosing the right platform. DataEngineeringCompanies.com offers independent, data-driven rankings of top data engineering consultancies to help you select a partner with confidence. Use our free tools to build your vendor shortlist and accelerate your data platform modernization.

Data-driven market researcher with 20+ years in market research and 10+ years helping software agencies and IT organizations make evidence-based decisions. Former market research analyst at Aviva Investors and Credit Suisse.

Previously: Aviva Investors · Credit Suisse · Brainhub · 100Signals
