A CTO's Guide to Ecommerce Data Engineering

As an engineering leader, your job is to turn the flood of raw data from platforms like Shopify, Google Analytics, and your ERP into a cohesive system that drives revenue. A successful ecommerce data engineering strategy powers true personalization, nails inventory forecasting, and predicts customer behavior. It is the technical foundation for competitive advantage.

Architecting Your Ecommerce Data Platform

Your data architecture is the framework that dictates how you collect, store, and use data. The choices made here directly enable or block capabilities like dynamic pricing, real-time fraud detection, and supply chain optimization. Ecommerce companies invest heavily in this area for a reason: merging clickstream data with point-of-sale records and customer support chats to build sophisticated recommendation engines requires sub-second query performance and a modern data stack.

Core Architectural Decisions: Warehouse vs. Lakehouse

Your first decision is the architectural pattern for your central data platform. This choice defines your team’s workflows and analytical capabilities.

- Data Warehouse: A traditional, highly structured repository ideal for predictable data like sales figures and customer lists. It is a powerful and familiar environment for analytics teams reliant on SQL for BI and reporting.

- Data Lakehouse: A hybrid model that combines the flexibility of a data lake with the management features of a warehouse. It handles structured, semi-structured, and unstructured data, making it the clear choice for advanced AI and machine learning tasks like analyzing customer review sentiment or processing product images.

For most growing ecommerce businesses, a Lakehouse architecture (built on Databricks or Snowflake) is the most forward-looking choice. It handles all standard BI needs while providing the foundation for future machine learning applications. A coherent first-party data strategy is a prerequisite for success.

A strong technical design must be mapped to core business domains—marketing, fulfillment, customer service—to ensure the platform directly serves critical business needs. Our guide to retail data engineering covers this domain-driven approach in detail.

Mastering Core Data Source Integrations

An ecommerce data platform is only as good as its data integrations. According to DataEngineeringCompanies.com’s analysis of 86 data engineering firms, wrangling disparate data sources is the single biggest hurdle for mid-market retailers. The modern ecommerce stack is a sprawling collection of tools, and the constant pain of API changes (schema drift) and legacy system quirks forces teams into costly cycles of fixing broken pipelines.

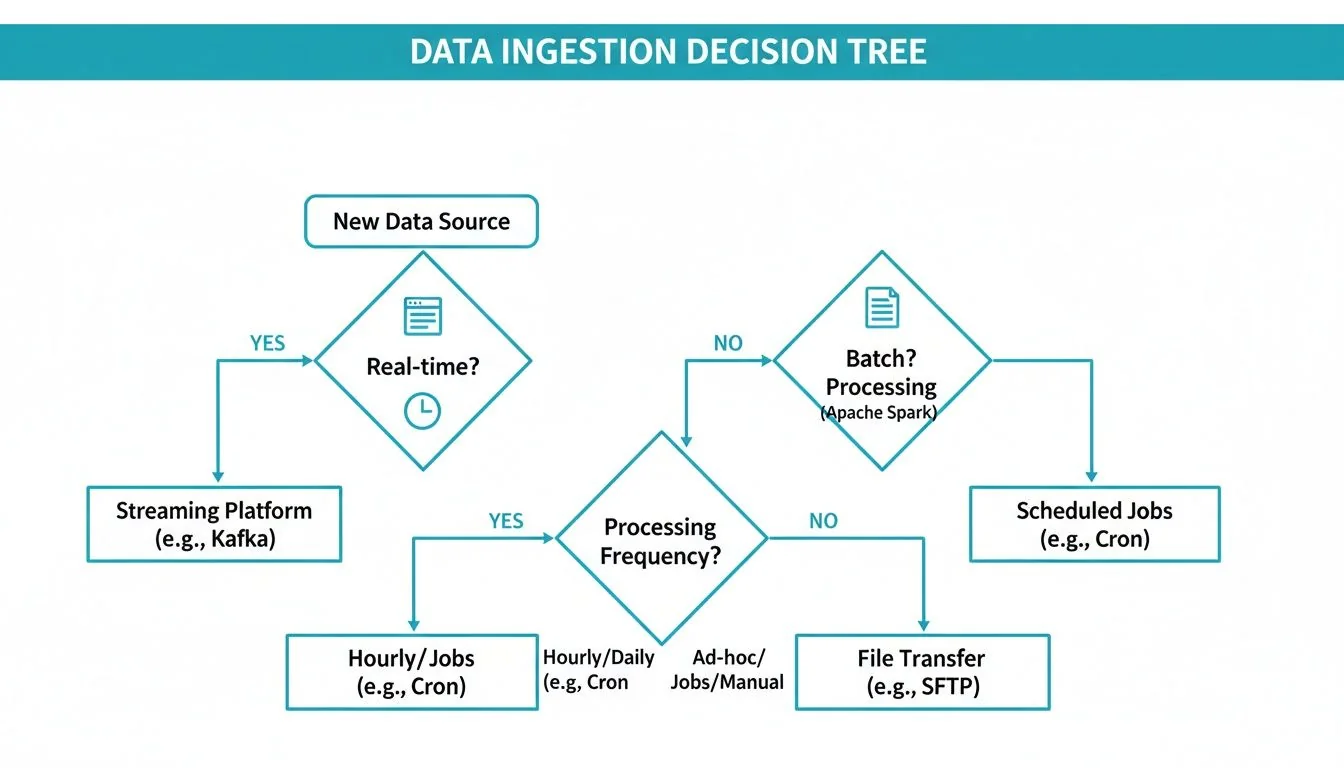

Ingestion Patterns: Batch vs. Streaming

The choice between batch and streaming ingestion depends on how quickly the business needs to act on the information.

- Batch Ingestion: Data is collected and moved in scheduled intervals (e.g., hourly, daily). It is reliable and cost-effective for information that does not require immediate action. Tools like Fivetran and Airbyte excel here.

- Use Cases: Daily sales summaries, ERP inventory syncs, pulling order histories from Shopify or Magento (Adobe Commerce).

- Streaming Ingestion: Data flows event-by-event in near real-time. This approach is more complex to implement but essential for use cases requiring instant reaction. This is the domain of tools like Apache Kafka and Amazon Kinesis.

- Use Cases: Real-time fraud detection, personalizing on-site user experience, analyzing clickstream events from Segment.

Preventing Pipeline Failures with Data Contracts

The most common cause of data pipeline failure in ecommerce is schema drift—when a source system’s API changes its data structure without warning. This leads to silent failures or, worse, corrupted data that erodes trust in analytics.

The solution is to establish data contracts: a formal, programmatic agreement between a data source and its destination that defines the expected structure, semantics, and quality of the data. By building schema validation directly into the ingestion process, any data that violates the contract is automatically flagged or quarantined instead of corrupting the system. This proactive approach distinguishes a resilient data ecosystem from a brittle, high-maintenance one. For a deeper dive, read our guide on cloud data integration strategies.

The Central Data Platform: Snowflake vs. Databricks

Choosing your central data platform is a 5-10 year strategic bet on your company’s analytics and AI direction. The decision frequently comes down to two platforms: Snowflake and Databricks. While their capabilities increasingly overlap, their core designs make them better suited for different ecommerce priorities.

Your use case dictates the technical path. Urgent needs like fraud detection require a streaming pipeline, while end-of-day sales reporting is a perfect fit for more economical batch jobs.

Snowflake for Structured Analytics

If your world revolves around SQL and delivering clean, structured data to business analysts, Snowflake is purpose-built for the task. Its architecture, which separates storage from compute, is a perfect match for the spiky query patterns of retail—quiet periods followed by massive query volumes from a marketing campaign launch.

Snowflake excels in these scenarios:

- Empowering BI and Reporting: Providing analysts with fast, reliable access to sales, marketing, and inventory data for dashboards in tools like Tableau or Power BI.

- Seamless Data Sharing: Securely sharing live inventory levels with a supplier or sales data with a marketing partner without building complex pipelines.

- Predictable BI Cost Control: For analytics-heavy workloads, paying for compute by the second is more cost-effective than running clusters 24/7.

Databricks for Advanced AI and Real-Time Data

When your roadmap includes ambitious AI projects and real-time customer experiences, Databricks is the front-runner. Built by the creators of Apache Spark, it is a powerhouse for large-scale data processing and machine learning on messy, unstructured data like product images, customer reviews, and support chats.

Databricks is the platform of choice when you need to:

- Power Real-Time Personalization: Ingesting and acting on clickstream data to update a recommendation engine on the fly requires the low-latency streaming native to the Databricks platform.

- Build Complex AI Models: Developing sophisticated fraud detection algorithms or demand forecasting models that learn from massive, varied datasets is precisely what Databricks was designed for.

- Work with Unstructured Data: Analyzing product photos for defects or sifting through support transcripts for customer sentiment are tasks where Databricks’ toolset has a distinct advantage.

Platform Comparison for Ecommerce Workloads: Snowflake vs. Databricks

This table breaks down how each platform stacks up against the criteria that matter most to ecommerce engineering leaders.

| Criterion | Snowflake | Databricks | Recommendation for Ecommerce CTOs |

|---|---|---|---|

| Primary Use Case | Business Intelligence, reporting, and analytics on structured/semi-structured data. The “single source of truth.” | Machine Learning and AI at scale, real-time data processing, and advanced analytics on all data types. | If your priority is democratizing data for business analysts, start with Snowflake. If you’re building a future on AI, lean toward Databricks. |

| Core Architecture | SQL-native Data Cloud with decoupled storage and compute. Highly optimized for SQL queries. | Lakehouse platform built on open-source Apache Spark. Unifies data warehousing and data science. | Snowflake’s architecture offers simplicity for BI. Databricks’ offers flexibility for complex data science and engineering workflows. |

| Data Types | Excels with structured (SQL tables) and semi-structured (JSON, Avro) data. Unstructured support is improving but not native. | Natively handles structured, semi-structured, and unstructured (images, text, video) data with ease. | If your roadmap includes analyzing product images, reviews, or chat logs, Databricks has a significant advantage. |

| Real-Time Capabilities | Strong batch processing and improving near-real-time with Snowpipe Streaming. | Best-in-class real-time streaming with Delta Live Tables and Structured Streaming. Built for low-latency. | For true real-time personalization or fraud detection, Databricks is the more mature and capable choice today. |

| Ease of Use for Analysts | Extremely intuitive for anyone who knows SQL. The UI is clean and focused on querying data. | Steeper learning curve. Requires knowledge of Spark, Python/Scala, and notebooks. SQL is supported but not the only focus. | Snowflake is far easier to adopt for traditional BI teams, leading to faster time-to-value for reporting. |

| Ecosystem & Interoperability | Massive partner ecosystem for BI and ETL tools. Excellent for data sharing across organizations. | Deep integration with the ML ecosystem (MLflow, Hugging Face). Open standards (Delta Lake) promote flexibility. | Your choice depends on whether your key partners are in the BI world (Tableau, Fivetran) or the AI world (PyTorch, TensorFlow). |

There is no single “best” platform—only the best platform for your strategy. Snowflake provides a faster path to value for robust business intelligence, while Databricks offers a higher ceiling for innovation in AI and real-time applications.

Implementing Data Governance and Observability

In ecommerce, bad data directly impacts revenue. A pricing error, an out-of-stock item showing as available, or a GDPR violation causes immediate financial damage and erodes customer trust. Data governance and observability are not optional; they are the foundation of a serious data practice.

Effective governance is about creating automated guardrails to ensure data is reliable, secure, and understandable. Without this trust, your analytics team will spend their time questioning numbers instead of uncovering insights.

Establishing a Foundation of Trust

The first step is establishing a single source of truth for metadata with a data catalog. Tools like Atlan or Alation automatically trace data lineage, showing exactly where a metric like “Average Order Value” originates and every transformation it undergoes. This transparency is a game-changer for debugging and ensuring stakeholder buy-in.

The next step is to automate data quality as part of the development process by baking tests directly into data transformation logic. Integrating data quality tests into your dbt models stops you from cleaning up messes and starts preventing them. A simple test can automatically flag an unusual spike in “orders per hour” or a sudden drop in product prices, preventing bad data from contaminating BI dashboards.

Proactive Monitoring with Data Observability

While quality tests catch known problems, observability platforms spot the “unknown unknowns.” As you build your governance framework, evaluate data quality monitoring tools to find the right fit for your critical ecommerce datasets.

Platforms like Monte Carlo or Metaplane monitor the health of your data ecosystem by tracking key metrics:

- Freshness: Is inventory data updating every 15 minutes as expected?

- Volume: Did the customer event stream from our website suddenly drop to zero?

- Schema: Did a Shopify API update add a new field and break a downstream model?

Automated alerts for these indicators allow engineers to fix issues before business teams are even aware of a problem. This moves the data practice from a reactive state of firefighting to a disciplined, automated operation.

How To Evaluate a Data Engineering Partner

Choosing a data engineering consulting partner is a high-stakes decision. A wrong choice leads to blown budgets, project delays, and a brittle data stack that creates technical debt for years. Vetting must be rigorous, cutting through sales presentations to get to verifiable proof of expertise. Does the firm have hands-on experience with the Shopify API or real-time event streams from Segment? You need to see evidence.

Beyond the Technical Checklist

Technical skill is table stakes. The best partners act as strategic advisors, challenging assumptions and bringing battle-tested delivery processes. A major red flag is the “bait-and-switch,” where you meet senior architects during the sales process, but the proposal is staffed with junior talent. This signals their A-team will not be building your project.

To avoid these traps, use a structured scorecard to compare potential partners objectively.

Data Engineering Partner Evaluation Checklist

This checklist, based on the proprietary methodology used at DataEngineeringCompanies.com to rank consulting firms, provides a starting point for your own evaluation scorecard. Use it to structure your RFP and guide vendor conversations. Assign a weight from 1 (nice-to-have) to 5 (mission-critical) to each criterion to generate a quantitative score for a true side-by-side comparison.

| Category | Evaluation Criterion | Weight (1-5) | Assessment Notes |

|---|---|---|---|

| Ecommerce Expertise | Proven projects with Shopify, Magento, or BigCommerce APIs. | 5 | Ask for specific, referenceable case studies. |

| Ecommerce Expertise | Experience building marketing attribution & LTV models. | 5 | Do they understand CAC, ROAS, and multi-touch attribution? |

| Ecommerce Expertise | Deep understanding of ecommerce data sources (orders, events, catalog). | 4 | Can they map out a typical ecommerce data schema from memory? |

| Technical Depth | Certified experts in your chosen platform (Snowflake/Databricks). | 5 | Verify current certifications for the proposed team. |

| Technical Depth | Demonstrable experience with modern transformation tools like dbt. | 4 | A modern data practice without dbt is a major concern. |

| Technical Depth | Expertise in both batch and real-time ingestion patterns. | 4 | How would they handle a nightly product sync and a live clickstream? |

| Delivery Methodology | Clear, agile project management and communication plan. | 4 | How will they handle scope changes and report progress? |

| Delivery Methodology | Documented best practices for code, testing, and deployment. | 4 | Ask to see their internal standards or a sanitized example. |

| Team Composition | Senior architect and engineers named and guaranteed on the project. | 5 | Get resumes and insist on interviewing the key team members. |

| Team Composition | Evidence of low team turnover. | 3 | High turnover can derail a long-term project. |

| Strategic Fit | Acts as a strategic advisor, not just an order-taker. | 5 | Did they challenge your assumptions or suggest better approaches? |

| Strategic Fit | Transparent knowledge transfer and training plan for your team. | 4 | How will they ensure your team can own the platform long-term? |

| Cost & Transparency | Transparent, role-based rate benchmarks (e.g., Architect: $225/hr, Lead: $190/hr). | 4 | Compare their rates to market data from objective sources. |

| References & Reputation | Positive, relevant, and recent client references you can speak with. | 5 | Ask to speak with a reference from a project similar to yours. |

| Security & Compliance | Documented security policies and data handling procedures. | 4 | How do they ensure the security of your sensitive customer data? |

This checklist is about finding a partner, not just a vendor. The right firm delivers a solid platform and makes your internal team more capable in the process.

Frequently Asked Questions

These are the most common questions from engineering leaders building an ecommerce data capability, with direct, evidence-based answers.

What Are the First Three Data Pipelines an Ecommerce Business Should Build?

Focus on pipelines with the biggest and fastest impact. Get these three right to build a solid foundation.

- Single Source of Truth for Revenue: Your first pipeline must unify order data from platforms like Shopify with payment details from gateways like Stripe. This becomes the undisputed source for all sales reporting, LTV models, and core financial analysis.

- Marketing Attribution: Next, pull in data from ad platforms (Google Ads, Facebook Ads) and web analytics tools like Google Analytics. This pipeline builds a real attribution model to answer the critical question: where are our best customers coming from, and what is our true customer acquisition cost?

- Inventory and Catalog Sync: Finally, build a pipeline that syncs your product catalog with real-time inventory levels from your ERP or warehouse management system (WMS). This prevents overselling, a mistake that erodes customer trust and creates operational headaches.

How Do I Justify the Cost of a Modern Data Stack to My CFO?

Frame the investment around three concrete business outcomes, not tech jargon.

- Revenue Lift: A modern data stack is a revenue-generation engine. Real-time personalization and recommendation features consistently boost average order value (AOV) by 5-15%. Model what that lift means for your top-line revenue.

- Operational Efficiency: Calculate the hours your marketing and finance teams spend manually compiling reports. A modern stack automates this work. The saved hours, multiplied by average salary, represent a clear operational cost saving. A typical project timeline to achieve this is 4-6 months.

- Risk Mitigation: Advanced analytics can detect and prevent the 1-2% of revenue that online retailers typically lose to fraud. A robust data governance framework is also insurance against the significant fines associated with regulations like GDPR and CCPA.

Should I Build Our Data Platform Internally or Hire a Consultant?

The decision is a classic build-vs-buy dilemma that hinges on your team’s skills and the business’s need for speed.

- Hire a consultant if: speed is the top priority. An experienced partner can deliver a production-ready platform on Snowflake or Databricks in 3-6 months. An internal team learning and building simultaneously could take over a year.

- Build internally if: you have time and the primary goal is to develop deep technical expertise in-house. This approach is slower but builds a long-term strategic asset.

A hybrid model is often the best approach: hire a consultant to design the architecture and train your team, then transition ownership to your internal engineers for ongoing development.

What Are the Most Common Pitfalls in an Ecommerce Data Migration?

The biggest and most painful data migration mistakes are almost always the same and are completely avoidable.

- Underestimating Data History: Teams focus on the new system and forget the complexity of the old one. They fail to plan for years of schema changes, messy manual data entry, and evolving business rules. This “data archaeology” work always takes longer than estimated.

- Ignoring Business Users: It’s easy to get lost in technical details. If you launch a new platform without rebuilding the key dashboards that finance and marketing depend on daily, you will disrupt the business and destroy stakeholder trust.

- Hiring a Generalist for a Specialist’s Job: Do not hire a data firm that doesn’t live and breathe ecommerce. If they do not understand the specific complexities of clickstream event data, order status changes, or multi-touch attribution, they will build a flawed system that requires a complete rebuild.

At DataEngineeringCompanies.com, we provide independent rankings and tools to help you evaluate and choose a firm with confidence. Find your ideal data engineering partner today.

Data-driven market researcher with 20+ years in market research and 10+ years helping software agencies and IT organizations make evidence-based decisions. Former market research analyst at Aviva Investors and Credit Suisse.

Previously: Aviva Investors · Credit Suisse · Brainhub · 100Signals

Top Data Engineering Partners

Vetted experts who can help you implement what you just read.

Related Analysis

How to Hire an Apache Kafka Consulting Firm That Delivers

A guide for engineering leaders on scoping, vetting, and hiring the right Apache Kafka consulting partner. Learn to avoid pitfalls and maximize your ROI.

A CTO's Guide To Real-Time Data Pipeline Architecture

Build a winning real-time data pipeline architecture. This guide helps engineering leaders compare patterns, choose components, and select the right partner.

A Practical Guide to Modern Data Pipeline Architecture

Discover how a modern data pipeline architecture can transform your business. This practical guide covers key patterns, components, and vendor selection.