How to Hire an Apache Kafka Consulting Firm That Delivers

Hiring an Apache Kafka consulting firm is a high-stakes decision. You do it when a DIY implementation is failing, a critical AI initiative demands flawless real-time data, or a migration from a legacy messaging system cannot fail. The right partner de-risks these complex projects and accelerates your time to market. The wrong one burns budget and leaves you with a fragile, unscalable platform.

This guide provides an evidence-based framework for engineering leaders to select, scope, and manage an Apache Kafka consulting engagement that delivers measurable business value, not just a deployed cluster.

When to Engage an Apache Kafka Consulting Partner

Engaging a Kafka expert is a strategic decision, not a tactical one. The decision is similar to bringing in specialized consulting services for complex technologies like Kubernetes. Pinpointing the right moment is critical. The need becomes undeniable when specific technical or business challenges emerge.

Key Triggers for Seeking Expert Help

Failed or Struggling DIY Implementation: This is the most common trigger. A talented team builds a proof-of-concept, but underestimates Kafka’s operational complexity at scale. The initial success in a development environment crumbles under production load.

The symptoms are consistent:

- Increasingly frequent outages as data volume and velocity grow.

- Persistent consumer lag that delays or breaks real-time data processes.

- Throughput bottlenecks that the internal team cannot diagnose or resolve.
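To make the consumer lag symptom concrete, here is a minimal sketch of how lag is usually defined: for each partition, the log end offset minus the consumer group's last committed offset. The offset snapshots below are hypothetical values, and treating a partition with no committed offset as starting from 0 is a simplifying assumption.

```python
from typing import Dict


def consumer_lag(end_offsets: Dict[int, int],
                 committed_offsets: Dict[int, int]) -> Dict[int, int]:
    """Return per-partition lag: log end offset minus last committed offset."""
    lag = {}
    for partition, end in end_offsets.items():
        # Assumption: a partition with no committed offset is read from 0.
        committed = committed_offsets.get(partition, 0)
        lag[partition] = max(end - committed, 0)
    return lag


# Hypothetical snapshot of a three-partition topic.
end_offsets = {0: 1_500, 1: 1_200, 2: 900}
committed_offsets = {0: 1_450, 1: 700, 2: 900}

lag = consumer_lag(end_offsets, committed_offsets)
total_lag = sum(lag.values())
print(lag, total_lag)
```

Lag concentrated on a single partition, as with partition 1 here, often points to key skew or one slow consumer instance rather than a cluster-wide capacity problem.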

New AI and Machine Learning Initiatives: These projects require reliable, low-latency data streams for model training, feature engineering, and real-time inference. If your existing data infrastructure cannot guarantee the required data quality and speed, an expert can architect a platform that meets these stringent demands.

The breaking point for many organizations is when their best senior engineers spend more time firefighting Kafka than building revenue-generating features. This indicates the true cost of self-management has spiraled out of control.

Migration from Legacy Systems: Moving from aging platforms like RabbitMQ or ActiveMQ is a high-risk project perfectly suited for expert guidance. Consultants possess proven playbooks for planning and executing these migrations, ensuring data integrity and minimizing downtime.

The Business Case for Consulting

The ROI extends beyond a stable cluster. A well-designed Kafka platform unlocks significant business value. Audacy, for example, reported up to 40% faster feature development after optimizing its Kafka environment.

An expert engagement transforms Kafka from a technical liability into a strategic asset that underpins critical business functions. For more context on the strategic decision, our guide on choosing a streaming data platform provides a valuable framework.

Defining Project Scope to Ensure Accountability

Ambiguous project scope is the primary cause of failed consulting engagements. Vague goals like “build a Kafka cluster” lead to platforms that are technically functional but fail to solve the underlying business problem. To hold an Apache Kafka consulting partner accountable, you must define success with business-oriented metrics, not just technical uptime.

For example, instead of “improve performance,” a precise goal is “reduce end-to-end data pipeline latency by 50% for the real-time fraud detection service.” This is a measurable outcome.

Key Scoping Dimensions

A robust project brief must quantify the following dimensions. Ambiguity here introduces unacceptable risk to your timeline and budget.

- Data Volume and Velocity: Specify peak messages per second, average message size, and projected data growth over the next 18-24 months. A clear target like “support a 2x increase in event volume without re-architecting” provides a concrete scalability challenge.

- Latency Requirements: Be specific. Is sub-100ms latency a non-negotiable requirement for a customer-facing feature, or can an internal analytics pipeline tolerate a multi-second delay? This decision dramatically impacts architecture, hardware selection, and cost.

- Consumer and Producer Complexity: Document every application that will interact with Kafka. The number and nature of these clients, from simple microservices to complex event processing engines, directly inform cluster design and configuration.

- Critical Integration Points: Map out how Kafka will connect to the broader ecosystem. This includes data warehouses like Snowflake, databases like MongoDB, and processing platforms like Databricks. This is especially critical for complex projects like a Kafka migration from VMs to Kubernetes.
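The volume and velocity figures above translate directly into sizing numbers you can put in a project brief. The following is a rough sketch only: every input (message rate, message size, retention, replication factor) is a hypothetical placeholder, and it deliberately assumes the peak rate is sustained all day, which gives a conservative upper bound on storage.

```python
def sizing_estimate(peak_msgs_per_sec: int,
                    avg_msg_bytes: int,
                    growth_factor: float,
                    retention_days: int,
                    replication_factor: int) -> dict:
    """Estimate ingress bandwidth and retained storage for a target workload."""
    peak_bytes_per_sec = peak_msgs_per_sec * avg_msg_bytes * growth_factor
    ingress_mb_per_sec = peak_bytes_per_sec / 1_000_000
    # Upper bound: assumes the peak rate holds for the whole retention window.
    storage_tb = (peak_bytes_per_sec * 86_400 * retention_days
                  * replication_factor) / 1e12
    return {"ingress_mb_per_sec": ingress_mb_per_sec, "storage_tb": storage_tb}


# Hypothetical brief: 50k msgs/s at 1 KB, planning for a 2x increase,
# 7 days of retention, replication factor 3.
estimate = sizing_estimate(50_000, 1_000, 2.0, 7, 3)
print(estimate)
```

Numbers like these give a consultant something specific to challenge or confirm, which is the point of quantifying the brief.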

A strong Statement of Work (SOW) is your primary defense against scope creep and budget overruns. It must detail not just what the consultant will deliver, but also what specific business capabilities it will enable.

By defining these parameters, you are not buying a technical task; you are defining a strategic investment. This forces the consulting partner to engineer a solution for your business, not deliver a generic deployment. You are buying an outcome, not just a technology.

Vetting a Kafka Consultant’s Technical Depth

Once scope is defined, you must validate a potential partner’s technical expertise. This due diligence process separates true Apache Kafka specialists from generalists. It involves probing their real-world experience with the complex, non-obvious problems that arise in any significant Kafka deployment.

According to DataEngineeringCompanies.com’s analysis of 86 data engineering firms, the ability to cite specific, high-throughput project metrics is the single greatest differentiator. Vague answers are a major red flag.

Gauging Expertise on Cluster Design and Performance

Anyone can stand up a default Kafka cluster. An expert architects and tunes it for a specific workload. You are looking for an understanding of trade-offs, not textbook answers.

Pose these questions and analyze the nuance in their response:

- How do you determine the partition count for a high-volume topic with spiky, unevenly distributed data?

- Walk me through troubleshooting a persistent consumer lag problem. What specific tools and metrics do you use to find the root cause?

- What is your framework for allocating broker resources (CPU, memory, I/O) to prevent bottlenecks in a multi-tenant cluster?

Listen for discussions of partition strategy, keying, client-side batching, and compression before they suggest adding hardware.
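As a reference point for the partition count question, one commonly cited starting heuristic is to divide the target throughput by the measured per-partition throughput on the producer side and on the consumer side, then take the larger result. The sketch below assumes that heuristic; the throughput figures and the `headroom` multiplier for spiky traffic are hypothetical, and a strong answer will go well beyond it (key skew, ordering needs, rebalance cost).

```python
import math


def partition_count(target_mb_s: float,
                    producer_mb_s_per_partition: float,
                    consumer_mb_s_per_partition: float,
                    headroom: float = 1.0) -> int:
    """Starting-point partition count from measured per-partition throughput."""
    needed_for_producers = target_mb_s / producer_mb_s_per_partition
    needed_for_consumers = target_mb_s / consumer_mb_s_per_partition
    # The slower side dictates the count; headroom absorbs spikes and skew.
    return math.ceil(max(needed_for_producers, needed_for_consumers) * headroom)


# Hypothetical measurements: 100 MB/s target, 10 MB/s per partition when
# producing, 5 MB/s per partition when consuming, 50% headroom.
partitions = partition_count(100, 10, 5, headroom=1.5)
print(partitions)
```

A consultant who can explain why the real answer differs from this formula for your workload is demonstrating exactly the trade-off thinking you are looking for.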

A classic sign of inexperience is when a consultant’s first solution to a performance issue is “add more brokers.” A seasoned expert diagnoses the underlying configuration and workload patterns first, viewing hardware as a last resort.

Probing Knowledge of Security and Resilience

A high-performance cluster is worthless if it is not secure and resilient. A partner’s approach to disaster recovery (DR) and security reflects their operational maturity.

Push on these critical areas:

- Disaster Recovery: Ask them to compare multi-datacenter replication strategies, such as MirrorMaker 2 versus a commercial tool like Confluent Replicator. What are the specific pros and cons they have encountered?

- Schema Governance: How do they leverage a tool like Confluent Schema Registry to manage schema evolution? Present a scenario: a developer attempts to push a backward-incompatible schema change. How do they prevent this from breaking downstream consumers?

- Hardening the Cluster: Ask for their methodology for implementing end-to-end encryption (TLS), authentication (SASL), and authorization (ACLs). A strong follow-up is how they balance tight security controls with performance overhead.
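For the schema governance scenario above, it helps to know what a backward-compatibility check looks like in principle. This is a simplified illustration, not Schema Registry's actual implementation: schemas are modeled as plain dictionaries, the field names are hypothetical, and any type change is treated as incompatible even though Avro permits some type promotions.

```python
from typing import Dict, List

# Field name -> {"type": ..., optional "default": ...}
Schema = Dict[str, dict]


def backward_incompatibilities(old: Schema, new: Schema) -> List[str]:
    """List reasons the new schema could not read data written with the old one."""
    problems = []
    for name, spec in new.items():
        if name not in old:
            # An added field needs a default so old records can still be read.
            if "default" not in spec:
                problems.append(f"new field '{name}' has no default")
        elif spec["type"] != old[name]["type"]:
            # Stricter than Avro: no type promotions are allowed here.
            problems.append(
                f"field '{name}' changed type "
                f"{old[name]['type']} -> {spec['type']}"
            )
    return problems


old = {"order_id": {"type": "string"}, "amount": {"type": "double"}}
safe_change = {**old, "currency": {"type": "string", "default": "USD"}}
breaking_change = {
    "order_id": {"type": "string"},
    "amount": {"type": "string"},
    "region": {"type": "string"},
}

print(backward_incompatibilities(old, safe_change))
print(backward_incompatibilities(old, breaking_change))
```

In practice the consultant should describe enforcing a check like this at registration time and in CI, so the incompatible change is rejected before it reaches a producer.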

Kafka Consulting Partner Evaluation Checklist

Use this checklist to structure your technical due diligence and ensure you cover all critical evaluation criteria before making a hiring decision.

| Evaluation Category | Key Criteria | Evidence to Request |
|---|---|---|
| Architectural Depth | Understands trade-offs in cluster topology, partitioning, and replication for different use cases (e.g., logging vs. transactions). | Whiteboard a proposed architecture for one of your key use cases. Ask for a past project architecture diagram (anonymized). |
| Performance Tuning | Proven experience diagnosing and resolving bottlenecks related to brokers, clients, and network. Knows key metrics inside and out. | A detailed story of a past performance issue they solved, including the metrics used and configs changed. |
| Operational Maturity | Can design and implement robust monitoring, alerting, and disaster recovery strategies. Experience with Infrastructure as Code (IaC). | Sample monitoring dashboard screenshots (anonymized). Their philosophy on alerting (signal vs. noise). |
| Security Expertise | Practical knowledge of implementing Kafka’s security features (TLS, SASL, ACLs) in a production environment without killing performance. | Explain how they would secure a multi-tenant cluster. Ask about their experience with tools like HashiCorp Vault for secrets management. |
| Ecosystem Knowledge | Familiarity with tools beyond core Kafka, such as Kafka Connect, ksqlDB, Schema Registry, and various client libraries. | Ask which Kafka Connect connectors they have the most experience with and what common “gotchas” to watch for. |

This checklist provides a solid foundation for your technical interviews. For a more comprehensive framework, see our guide on how to evaluate data engineering vendors. This upfront diligence is the best way to secure a partner who can build a Kafka platform that is powerful, secure, scalable, and resilient.

Analyzing Kafka Consulting Costs and Engagement Models

Understanding the financial aspects of a Kafka consulting engagement is as critical as the technical vetting. The total cost is a function of engagement structure, project complexity, and consultant seniority.

Demand for this expertise is immense. Market research projects the software consulting market to reach USD 1,143.30 billion by 2034, with a significant portion driven by real-time data needs. With over 150,000 organizations running Kafka, expert guidance is in high demand.

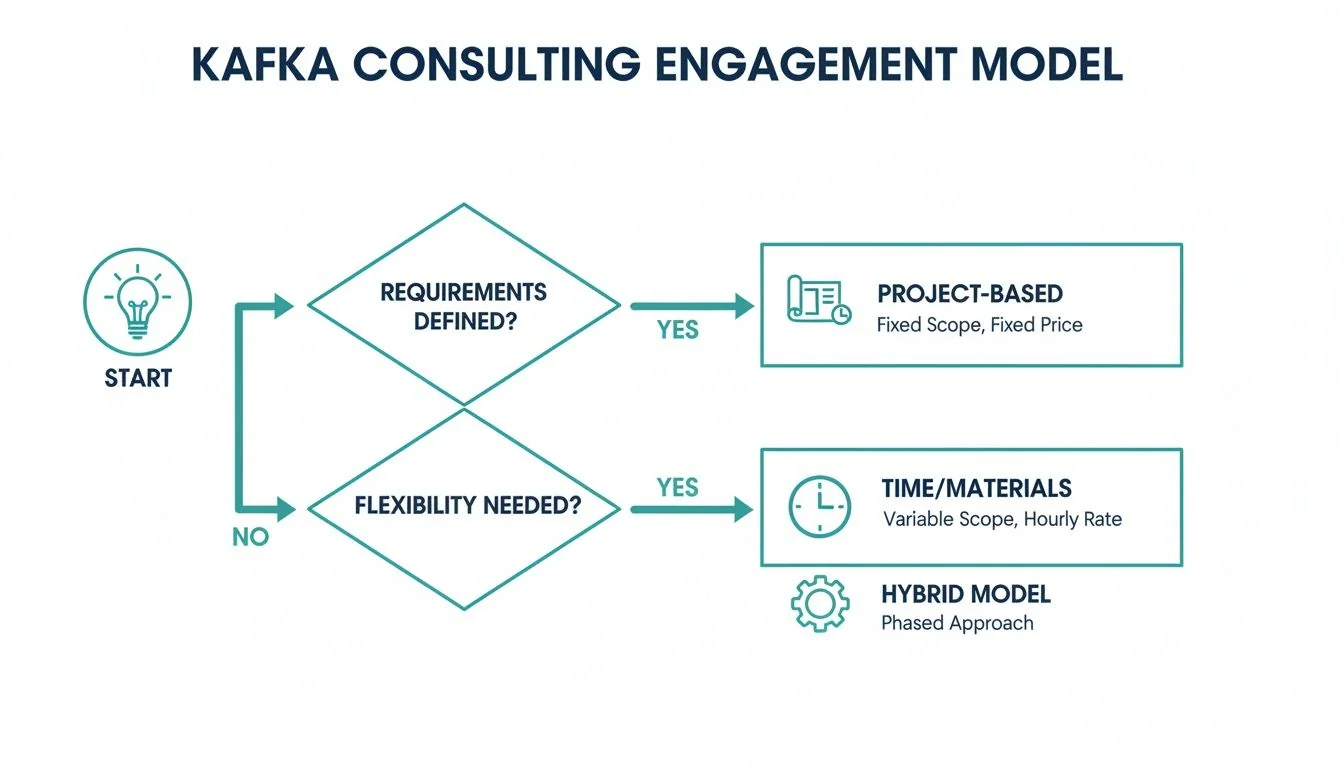

Common Engagement Models

The structure of the engagement dictates budget, risk profile, and management overhead. Most fall into three categories:

- Project-Based (Fixed-Bid): A fixed price for a well-defined scope and set of deliverables. This model is ideal for greenfield projects or migrations where the outcome is unambiguous. The primary advantage is that it shifts delivery risk to the consulting firm. It requires an iron-clad SOW to prevent disputes.

- Time & Materials (T&M): You pay for consultant time at an hourly or daily rate. This model provides maximum flexibility, making it suitable for advisory roles, complex performance tuning, or projects with evolving requirements. It offers more control but places budget risk on the client if scope is not managed tightly.

- Managed Services: A long-term retainer where the consulting firm assumes full operational responsibility for running, maintaining, and optimizing your Kafka platform. This is for organizations that want their internal teams focused on core product development, not data infrastructure management.

Our analysis of 86 data engineering firms at DataEngineeringCompanies.com shows that a blended T&M rate is the starting point for over 60% of Kafka contracts. A common pattern is to begin with T&M for discovery and architecture before transitioning to a fixed-bid contract for the implementation phase.

Rate Bands and Cost Drivers

The final price is driven by cluster scale, integration complexity, and consultant seniority. A seasoned Kafka Architect with multi-datacenter replication and security expertise commands a higher rate than a junior engineer implementing standard connectors.

Expect a wide range of rates. Here are realistic benchmarks for US-based talent:

- Kafka Architects: $200 – $350 per hour

- Senior Kafka Engineers: $150 – $225 per hour

Offshore resources can reduce these rates by 40-60%, but this must be weighed against the real costs of communication overhead and time-zone friction.
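To turn the rate bands above into a budget range for a T&M engagement, a back-of-the-envelope calculation is usually enough. The hourly rates below come from the benchmarks in this section; the staffing mix (one half-time architect, two full-time senior engineers) and the 16-week duration are hypothetical assumptions for illustration.

```python
# Hourly rate bands (USD) from the benchmarks above: (low, high).
RATE_BANDS = {
    "architect": (200, 350),
    "senior_engineer": (150, 225),
}


def tm_cost_range(staffing: dict, weeks: int) -> tuple:
    """Return (low, high) total cost for a staffing plan over a number of weeks."""
    low = high = 0
    for role, (headcount, hours_per_week) in staffing.items():
        rate_low, rate_high = RATE_BANDS[role]
        hours = headcount * hours_per_week * weeks
        low += rate_low * hours
        high += rate_high * hours
    return low, high


# Hypothetical plan: role -> (headcount, hours per week).
staffing = {"architect": (1, 20), "senior_engineer": (2, 40)}
low, high = tm_cost_range(staffing, weeks=16)
print(f"${low:,} to ${high:,}")
```

Running the same calculation against each proposal's staffing plan makes it easier to compare bids on a like-for-like basis.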

Red Flags to Spot When Hiring Kafka Consultants

Hiring the wrong Apache Kafka consultant is worse than hiring no one. A bad engagement wastes budget, consumes internal team resources, and creates technical debt that can take years to unwind. Recognizing warning signs during the evaluation process is your best defense.

A consultant who provides vague, high-level answers is the first major red flag. When discussing performance tuning or disaster recovery, an expert will speak in terms of specific trade-offs and scenarios. If their default response is simply “we’ll use the cloud provider’s managed service” without analyzing its limitations for your specific workload, they lack hands-on, battle-tested expertise.

Unproven Experience and Vague Promises

A portfolio of case studies that do not approach your project’s scale is another clear warning. A firm that has only built small-scale clusters with a few brokers and low-volume topics is not equipped for the complexities of a high-throughput, mission-critical system.

Demand hard numbers from their past projects:

- What was the peak message throughput?

- What were the p99 latency figures they achieved?

- How large were the clusters they managed (broker and node count)?
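When a firm quotes latency figures, it is worth being clear about what p99 means and why it matters more than an average. The sketch below uses the nearest-rank percentile method, which is one of several common definitions, on a hypothetical set of latency samples.

```python
import math


def percentile(samples: list, pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Hypothetical end-to-end latencies in milliseconds:
# 98 fast requests and 2 slow outliers.
latencies_ms = [20] * 98 + [900] * 2

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)
print(mean_ms, p50_ms, p99_ms)
```

In this sample the mean and median look healthy while the p99 exposes the slow tail, which is why an average-only answer to the latency question should prompt a follow-up.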

Pay close attention to the proposed team composition. A proposal staffed primarily with junior engineers, with a senior architect providing only “nominal oversight,” is a recipe for failure. You are paying for expert guidance, which requires deep, hands-on involvement from the architect in the design and key implementation phases.

A consultant’s inability to articulate a clear, opinionated strategy for schema management is a dealbreaker. If they do not have a strong point of view on using tools like Schema Registry to enforce data contracts and prevent data chaos, they are not prepared for an enterprise-level engagement.

This flowchart maps the decision points for structuring an engagement, aligning the model with your project’s specific needs.

As shown, projects with a clearly defined scope are well-suited for a fixed-bid model. In contrast, advisory roles or projects with evolving requirements are better suited to a T&M approach.

The exploding demand for Kafka skills, highlighted in recent Confluent earnings reports, means more firms are entering the market. This makes rigorous vetting more critical than ever. Do not partner with a firm that cannot substantiate its claims with hard data and concrete architectural patterns.

Frequently Asked Questions

As an engineering leader, you must ask tough questions to de-risk a significant investment in Apache Kafka consulting. Here are direct answers to the most common ones.

When should we use an open-source Kafka consultant vs. a Confluent partner?

This decision depends on your team’s existing capabilities and strategic goals.

Choose a pure open-source Kafka consultant if your team has strong DevOps expertise, you want to avoid vendor lock-in, and you have highly specific customization needs that managed platforms cannot meet. This path is for mature engineering organizations tackling unique architectural challenges.

Choose a Confluent partner when your primary goal is to accelerate deployment with enterprise-grade tooling, guaranteed support, and reduced operational overhead. This is the optimal choice for teams that want to leverage powerful features like ksqlDB and Schema Registry without assuming the full operational burden, especially when robust security and governance are required from day one.

The trade-off is clear: total control and customization (open-source) versus accelerated deployment with an enterprise safety net (Confluent).

What is a realistic timeline for a Kafka consulting engagement?

Be skeptical of any consultant promising a full production deployment in under a month. Such projects almost always result in significant technical debt.

A realistic timeline for a moderately complex system includes distinct phases:

- Architecture & Design (4–6 weeks): This foundational phase involves deep discovery, load projection for cluster sizing, and drafting a detailed technical architecture. Rushing this step is the single most common and costly mistake.

- Implementation & MVP (12–16 weeks): This phase includes infrastructure deployment, developing the initial producer and consumer applications with your team, and establishing essential monitoring and alerting.

A full-scale enterprise migration from a legacy messaging system requires a longer timeline, typically six to nine months, depending on the number of data sources and sinks.

How do I measure the ROI of a Kafka consulting engagement?

Measuring success by “deployment complete” is insufficient. To calculate the true return on investment, you must track tangible business outcomes and operational improvements.

The most compelling ROI metric is the reduction in “firefighting” time for senior engineers. When your best engineers are freed from managing a fragile cluster and can return to building revenue-generating features, that is a clear and massive win.

Track these key metrics:

- Developer Productivity: Measure the reduction in time your team spends on Kafka infrastructure management. Audacy, for example, reported up to 40% faster feature development after optimizing its Kafka environment.

- Time-to-Market: Measure the development cycle time for new real-time features that depend on the new Kafka platform.

- Operational Costs: Calculate the total cost of ownership (TCO) of the new system versus the old, including license fees, downtime costs, and engineering hours.

- Performance Gains: Track specific improvements in end-to-end data latency, message throughput, and overall system uptime.

Ready to find the right Apache Kafka consulting partner for your project? DataEngineeringCompanies.com provides expert rankings, verified firm profiles, and practical tools to help you build a shortlist and make your selection with confidence. Start your 60-second vendor match quiz today.

Data-driven market researcher with 20+ years in market research and 10+ years helping software agencies and IT organizations make evidence-based decisions. Former market research analyst at Aviva Investors and Credit Suisse.

Previously: Aviva Investors · Credit Suisse · Brainhub · 100Signals
