Building a Scalable Manufacturing Data Engineering Solution

Manufacturing leaders are no longer debating if they should use data; they are deciding how to build the data engineering foundation to support it. Existing OT systems, MES, and ERPs generate terabytes of valuable data, but this data is fragmented across legacy systems. Without a unified, cloud-native data platform, this information remains untapped, preventing the implementation of high-ROI initiatives like predictive maintenance and AI-driven quality control.

The core challenge is not a lack of data, but a lack of robust manufacturing data engineering solutions to ingest, process, and analyze it at scale. This guide provides a direct, evidence-based framework for engineering leaders to design, budget for, and implement these solutions.

The Data Infrastructure Problem on the Factory Floor

Most manufacturers are constrained by the technical debt of their operational technology (OT) systems. SCADA, PLCs, and other shop-floor systems were engineered for operational uptime, not for data analytics. This architecture strands critical information on the factory floor, disconnected from the cloud platforms where modern analytics and AI/ML models operate.

This gap between ambition and reality forces engineering teams to maintain a web of brittle, point-to-point integrations that are impossible to scale. The solution is to replace these custom connections with a cohesive, cloud-native data platform designed for specific manufacturing business goals.

An effective architecture must support:

- Edge-to-Cloud Pipelines: Ingesting high-velocity data directly from IoT sensors and factory equipment.

- Unified Data Platforms: Consolidating OT data (from sensors, PLCs) with IT data (from ERP, CRM) into a single source of truth on a platform like Snowflake or Databricks.

- Advanced Analytics and MLOps: Building, deploying, and managing machine learning models for use cases like predictive quality control or demand forecasting.

This strategic shift treats data as a core asset, not a byproduct of production. To execute this, a data engineering foundation built for the volume, velocity, and complexity of manufacturing data is non-negotiable.

The Modern Manufacturing Data Stack

A modern data stack provides the digital backbone for data-driven manufacturing. It is a standardized framework to collect, process, and govern data across the enterprise, enabling a shift from reactive problem-solving to proactive operational optimization. Instead of diagnosing why a machine failed after an outage, this stack enables you to predict failures weeks in advance. This is the tangible value of a well-architected manufacturing data engineering solution.

Designing High-Performance Manufacturing Data Pipelines

Designing a data pipeline for a factory is not just about data movement; it’s about creating a digital nervous system for the shop floor. The architecture must handle data from a wide array of sources—from high-frequency sensor streams and PLC signals to scheduled batch files from ERP systems. The objective is to create a single, reliable flow that transforms raw, noisy data into clean, analysis-ready information.

An effective design serves two parallel functions: real-time operational needs and long-term strategic analysis.

-

Real-Time Stream Processing: This “fast lane” handles the constant torrent of data from IoT devices and machinery using tools like Apache Kafka or Apache Flink. Its purpose is to deliver immediate insights, such as triggering an alert when a machine part shows signs of overheating. Low latency is the primary requirement.

-

Scalable Batch Processing: This “wide-angle lens” processes large volumes of historical data on a set schedule. It is essential for training predictive maintenance models, running deep-dive BI queries, or analyzing quarterly production efficiency.

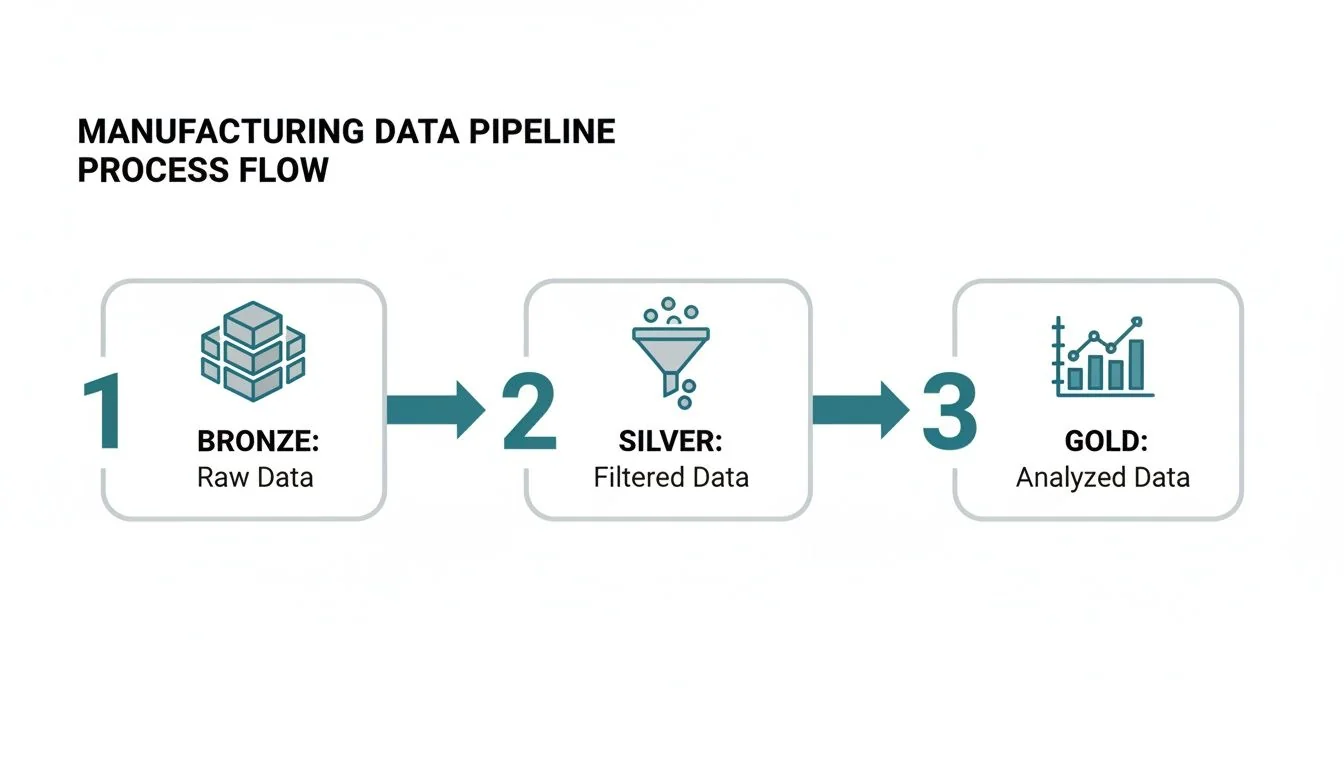

Applying the Medallion Architecture

The Medallion Architecture is a proven pattern for organizing this complex data flow. It methodically refines data through three distinct quality layers, creating a governed and trustworthy path from raw ingestion to analytical insight. It is particularly effective for building complex manufacturing data engineering solutions.

The Medallion Architecture acts as a refinery for factory data. It systematically cleans and structures raw inputs (Bronze), enriches them with business context (Silver), and aggregates them into high-value analytical assets (Gold).

This layered model imposes necessary order on factory data.

- Bronze Layer (Raw): This is the data receiving dock. All incoming data from sensors, MES, and SCADA systems lands here in its original, immutable format. The priority is to capture everything, creating a complete, auditable archive of what happened on the factory floor.

- Silver Layer (Cleansed & Conformed): Here, data from the Bronze layer is validated, scrubbed, and standardized. Outlier sensor readings are filtered, cryptic machine codes are mapped to standard equipment names, and all timestamps are converted to a uniform time zone.

- Gold Layer (Aggregated): This is the final, business-ready product for analysts and data scientists. Data is aggregated and shaped for specific use cases, such as tables for calculating Overall Equipment Effectiveness (OEE) or feature-rich datasets for training machine learning models.

Successfully moving data through these layers requires a solid grasp of core principles of data pipeline ETL. For a deeper dive into these patterns, explore our complete guide to modern data pipeline architecture. This structured approach ensures data quality and governance are embedded from the start, transforming raw sensor signals into strategic assets.

Choosing the Right Cloud Data Platform for Your Factory

Selecting a cloud data platform is the most critical technical decision in a data modernization initiative. This choice dictates the architecture, total cost of ownership (TCO), and ultimate capabilities of the system. For most manufacturing firms, the decision comes down to two platforms: Snowflake and Databricks.

This is not a feature-for-feature comparison but a strategic alignment of a platform’s design philosophy with specific business requirements. A mismatch results in high TCO, significant rework, and a system that fails to meet real-world factory floor demands.

Snowflake: The Powerhouse for BI and Governed Reporting

Snowflake is a cloud-native enterprise data warehouse architected for simplicity, fast query performance, and strong data governance. Its separation of storage and compute allows concurrent workloads without performance degradation—finance can run massive reports while engineers analyze production data.

This makes it the superior choice for enterprise-wide business intelligence (BI) and standardized reporting.

- Where It Shines: Snowflake excels at analyzing structured and semi-structured data at scale. For business analysts and managers who need fast, reliable dashboards for tracking OEE, analyzing inventory, or running financial reports, its SQL-first environment is unmatched.

- Typical Use Case: Consolidating data from disparate ERP, MES, and SCM systems into a central source of truth. Its secure data sharing capabilities are best-in-class for collaborating with suppliers and logistics partners without compromising data security.

Snowflake’s core value proposition is operational simplicity. It automates most of the backend tuning, scaling, and maintenance, freeing data teams to focus on generating insights rather than managing infrastructure.

Databricks: The Platform for Advanced Analytics and MLOps

Databricks, built by the creators of Apache Spark, is a data lakehouse platform designed for large-scale data science and machine learning. It unifies data, analytics, and AI models on a single platform, making it the go-to choice for manufacturers tackling complex, real-time challenges.

The Medallion Architecture is standard practice on Databricks, providing a framework to progressively refine raw data for analytics and machine learning.

This Bronze (raw), Silver (cleansed), and Gold (aggregated) flow is essential for converting messy operational data into a high-value asset ready for MLOps.

- Where It Shines: Databricks offers native support for streaming data and the end-to-end MLOps lifecycle. If the primary business drivers are predictive maintenance, AI-driven quality control with computer vision, or real-time production process optimization, this is the correct platform. It provides a seamless path from raw data ingestion to a deployed production model.

- Typical Use Case: Ingesting high-speed sensor data from thousands of IoT devices on an assembly line to detect anomalies in real time. Its collaborative notebooks and MLflow integration streamline the entire lifecycle of building, training, and managing predictive models.

Snowflake vs. Databricks for Manufacturing Workloads

This table breaks down the key differences to align your manufacturing use cases with the right platform.

| Criterion | Snowflake | Databricks | Best For |

|---|---|---|---|

| Primary Workload | Business Intelligence (BI), SQL Analytics, Reporting | Machine Learning, AI, Real-Time Data Streaming | Snowflake: Reporting-heavy organizations. Databricks: ML-driven factories. |

| Core Architecture | Cloud Data Warehouse (separate storage & compute) | Data Lakehouse (unifies data, analytics, and AI on a data lake) | Snowflake: Simplicity and performance for structured data. Databricks: Flexibility for all data types, especially unstructured. |

| Data Science & ML | Good support via integrations (Snowpark, partner tools) | Native, fully integrated MLOps lifecycle (MLflow) | Databricks has a clear advantage for teams building and deploying models at scale. |

| Data Ingestion | Excellent for batch loading (e.g., nightly ERP data) via Snowpipe | Superior for real-time streaming from IoT, sensors, and PLCs | Databricks is built for the high-velocity data common in modern manufacturing. |

| Ease of Use | Very easy for SQL users and BI analysts; low admin overhead | Steeper learning curve; requires Spark/Python knowledge | Snowflake is faster to adopt for traditional data teams. |

| Governance | Mature, role-based access control and data security | Robust governance with Unity Catalog, which is rapidly maturing | Snowflake has a longer track record of enterprise-grade governance. |

The decision hinges on your organization’s center of gravity. If the primary objective is to serve enterprise-wide BI and governed reporting, choose Snowflake. If the goal is to build a factory of the future driven by real-time analytics and predictive machine learning, Databricks is the definitive answer. Making this choice correctly is the most critical step toward building a successful manufacturing data engineering solution.

Driving ROI With Critical Manufacturing Use Cases

A sophisticated data architecture is an expense until it improves the bottom line. For manufacturing data engineering solutions, value is realized by targeting specific, high-impact problems on the factory floor. There are many proven Solutions for Manufacturing that deliver this tangible return.

Successful implementations begin with a few critical use cases where data can deliver an immediate financial impact. These wins serve as powerful proofs-of-concept, building the momentum and business case for wider rollouts.

Predictive Maintenance

This is often the highest-value starting point. By analyzing real-time sensor data—vibration, temperature, and acoustic patterns—you predict equipment failures before they happen. This shifts maintenance from a reactive, costly fire drill to a proactive, scheduled activity during planned downtime.

- Data Requirements: High-frequency time-series data from PLCs and IoT sensors.

- KPIs for Success: Reduced unplanned downtime, higher Overall Equipment Effectiveness (OEE), and a sharp drop in emergency maintenance costs.

AI-Powered Quality Control

Manual inspections are slow, expensive, and inconsistent. Computer vision models deployed on the production line spot product defects with a speed and accuracy that humans cannot match. This requires a data pipeline capable of handling high-resolution image or video streams in real time. We explore this further in our guide on analytics for manufacturers.

- Data Requirements: Image and video feeds from line-side cameras, combined with other sensor data.

- KPIs for Success: Lower scrap and rework rates, fewer customer returns, and faster root cause analysis of quality issues.

Supply Chain Optimization

A factory operates within a larger ecosystem. By integrating data from your ERP, Manufacturing Execution System (MES), and logistics partners, you build a complete, end-to-end view of your supply chain. This unified view enables better demand forecasting and smarter inventory management, helping you avoid both stockouts and costly overproduction.

By focusing on these tangible use cases, you anchor your data engineering project in clear business value. The goal isn’t just to build pipelines; it’s to reduce downtime, cut waste, and make smarter operational decisions.

Vetting and Selecting a Data Engineering Consulting Partner

Choosing a data engineering partner is as critical as selecting your cloud platform. The wrong choice leads to delays, cost overruns, and a brittle, unmanageable system that becomes a source of technical debt, stifling future innovation. You need a firm with proven expertise on the factory floor, not just a familiarity with cloud services.

Can They Handle the Shop Floor? Vetting Technical and Domain Expertise

Your first filter is direct: do they understand manufacturing? A generalist data firm may excel at e-commerce analytics but will fail when confronted with the complexities of operational technology (OT) data. Probe for specific, non-negotiable skills.

- Fluency in OT Protocols: Have they implemented projects using MQTT, OPC-UA, and Modbus? Ask for concrete examples of projects where they ingested data directly from PLCs, historians, or SCADA systems.

- Deep Platform Knowledge: A partner requires certified expertise in your chosen stack—whether AWS, Azure, or GCP, paired with Snowflake or Databricks. Vague claims of “cloud knowledge” are a red flag. They must understand the nuances of implementing these platforms for manufacturing workloads.

- Real-World Edge Experience: Can they design and deploy solutions at the network edge? This is crucial for real-time processing. Ask about their experience with tools like AWS Greengrass or Azure IoT Edge to process data closer to the source machinery.

According to DataEngineeringCompanies.com’s analysis of 86 data engineering firms, only 28% list OT protocol experience as a core competency. This highlights the rarity of this expertise and the importance of rigorous screening.

How They Build Matters as Much as What They Build

Technical skill is insufficient. A partner who cannot manage projects, communicate clearly, or implement proper security is a liability. Scrutinize their entire delivery process, including project management style, collaboration methods, and data governance frameworks. A strong partner acts as an extension of your team, augmenting your capabilities without creating long-term dependency.

Manufacturing Partner Evaluation Checklist

Use this checklist to structure your vendor conversations and score potential partners objectively. Demand specific, verifiable proof for each item.

| Category | Evaluation Criterion | What to Ask For |

|---|---|---|

| Technical Skills | Verifiable experience with IoT protocols (MQTT, OPC-UA). | ”Show us a project where you ingested OPC-UA data into Databricks.” |

| Deep expertise in your target cloud and data platform (Snowflake or Databricks). | ”Provide anonymized case studies of platform rollouts in a factory setting.” | |

| Experience with both streaming (Kafka, Flink) and batch architectures. | ”Walk us through your reference architecture for a predictive maintenance use case.” | |

| Project Delivery | A clear, agile project management methodology. | ”How do you manage sprints? Show us your planning and review process.” |

| Transparent communication and reporting protocols. | ”What tools do you use for project management to track budget and timelines?” | |

| Industry Focus | A portfolio of successful manufacturing projects. | ”We need to see at least three relevant case studies with hard metrics, such as OEE improvement or scrap reduction.” |

| Governance & Support | Experience implementing data governance frameworks (e.g., Unity Catalog). | ”How do you ensure data quality and lineage from sensor to dashboard?” |

| A solid plan for knowledge transfer and training. | ”What does your support model look like post-launch, and how do you enable our team?” |

Budgeting Your Data Modernization Initiative

Securing budget approval requires a business case framed in financial terms. A credible budget breaks down costs into clear, defensible line items, demonstrating thorough planning. Costs fall into three main categories: the data platform, underlying cloud services, and consulting expertise.

What You’re Actually Paying For

Platform costs are consumption-based. On Snowflake, you pay for compute time via Snowflake credits. On Databricks, the unit is Databricks Units (DBUs). As your teams query, transform, or model more data, consumption increases.

Cloud infrastructure costs are paid directly to your cloud provider (AWS, Azure, or GCP) for the resources your data platform runs on.

- Storage: The cost to store raw (Bronze), cleansed (Silver), and aggregated (Gold) data in cloud storage like Amazon S3 or Azure Blob Storage. This is typically the cheapest component but grows with data volume.

- Compute: The virtual machines that power data pipelines and execute queries for analysts and data scientists.

- Networking: Egress fees—charges for moving data out of a cloud or between regions—can become a significant and unexpected expense if not monitored closely.

Finally, consulting fees cover the specialized expertise to design, build, and deploy the solution correctly. Based on DataEngineeringCompanies.com’s analysis of 86 data engineering firms, a typical pilot project for a mid-sized manufacturer ranges from $75,000 to $200,000. A full-scale, enterprise-wide implementation can cost $500,000 or more.

Assembling the Right Implementation Team

Successful projects depend on a blended team that combines the institutional knowledge of your internal staff with the specialized skills of external experts. This model accelerates project delivery and ensures your team can operate and maintain the system post-engagement.

A well-structured blended team has clear roles:

Your In-House Experts:

- Domain Experts: Plant managers and process engineers who understand the real-world context behind the data.

- IT/OT Staff: The managers of existing factory systems and networks who hold the keys to data access.

Your External Partners:

- Data Architects: The designers of the end-to-end data architecture.

- Data & ML Engineers: The builders who construct data pipelines, write transformation logic, and deploy machine learning models.

- Platform Specialists: Subject matter experts in Snowflake or Databricks who optimize performance and control costs.

This model ensures the final solution is not just technically sound but a practical tool that solves real business problems, providing the financial clarity and operational confidence needed to secure executive buy-in.

Selecting the right partner to guide your initiative is a critical first step. At DataEngineeringCompanies.com, we provide data-driven rankings and tools to help you vet and select the ideal data engineering consultancy for your manufacturing needs. Start your search with confidence today.

Data-driven market researcher with 20+ years in market research and 10+ years helping software agencies and IT organizations make evidence-based decisions. Former market research analyst at Aviva Investors and Credit Suisse.

Previously: Aviva Investors · Credit Suisse · Brainhub · 100Signals

Top Data Engineering Partners

Vetted experts who can help you implement what you just read.