A C-Level Guide to Healthcare Data Engineering & HIPAA Compliance

Building healthcare data pipelines is no longer a game of speed. It’s a game of trust, security, and auditable compliance. For engineering leaders—CTOs, VPs of Engineering, and Heads of Data—ensuring that your data engineering practices are fully HIPAA compliant is non-negotiable. This is especially true when working with modern platforms like Snowflake or Databricks. Security cannot be an afterthought; it must be designed into the architecture from day one.

The Financial and Reputational Cost of Non-Compliance

The cost of failing to secure protected health information (PHI) is astronomical. A data breach now costs a healthcare organization an average of $10.93 million per incident. That is a number that commands the attention of every CTO and Enterprise Architect.

The problem is escalating. In 2023 alone, the healthcare sector saw a record number of breaches, exposing the PHI of over 133 million individuals. Healthcare providers were the primary target, accounting for the vast majority of these incidents. Figures on this scale indicate that many legacy data engineering pipelines carry critical vulnerabilities that must be addressed immediately.

This guide translates HIPAA’s core principles into concrete, actionable engineering requirements for modern cloud data platforms on AWS, Azure, and GCP. The goal is to provide a blueprint for building a data infrastructure that is both defensible by design and fully auditable, ensuring robust HIPAA compliance.

From Regulation to Architectural Mandate

HIPAA’s safeguards—Administrative, Physical, and Technical—are not abstract rules. They are direct mandates for how you design and operate your data architecture. This table maps these regulations to the specific controls you must implement in your data engineering environment.

HIPAA Safeguards Mapped to Data Engineering Controls

| HIPAA Safeguard | Core Requirement | Data Engineering Implementation |
|---|---|---|
| Administrative | Implement policies, procedures, and workforce management to protect PHI. | Require mandatory security training for the entire data team and execute Business Associate Agreements (BAAs) with all cloud and third-party data service vendors. |
| Physical | Secure physical locations and hardware where PHI is stored. | Configure only HIPAA-eligible services within your cloud provider’s secure data centers, effectively outsourcing physical security to a compliant partner. |
| Technical | Implement technology-based controls to protect and monitor data access. | Use granular role-based access control (RBAC) in Snowflake/Databricks, enforce end-to-end encryption (TLS, TDE), and configure immutable audit logs in AWS CloudTrail or Azure Monitor. |

Let’s dissect these implementations:

- Administrative Safeguards: This is your operational rulebook. It means conducting formal risk assessments on data pipelines, holding a signed Business Associate Agreement (BAA) with every single vendor that could potentially access PHI (including your cloud provider and SaaS tools), and ensuring your team undergoes regular security training.

- Physical Safeguards: In a cloud-native architecture, you are not managing servers. Your responsibility is selecting a cloud provider—like Amazon Web Services (AWS) or Microsoft Azure—that does. Your role is to architect your solution using only their HIPAA-eligible services and configure them according to security best practices.

- Technical Safeguards: This is the core of healthcare data engineering. It involves the hands-on implementation of strict access controls (IAM and RBAC), the encryption of all data in transit and at rest, and the maintenance of immutable audit logs that record every single instance of PHI access.

Successful HIPAA compliance is not achieved by checking boxes after a platform is built. It is achieved by embedding these safeguards into the architecture from the very first line of code.

Architecting a Defensible and Auditable Data Platform

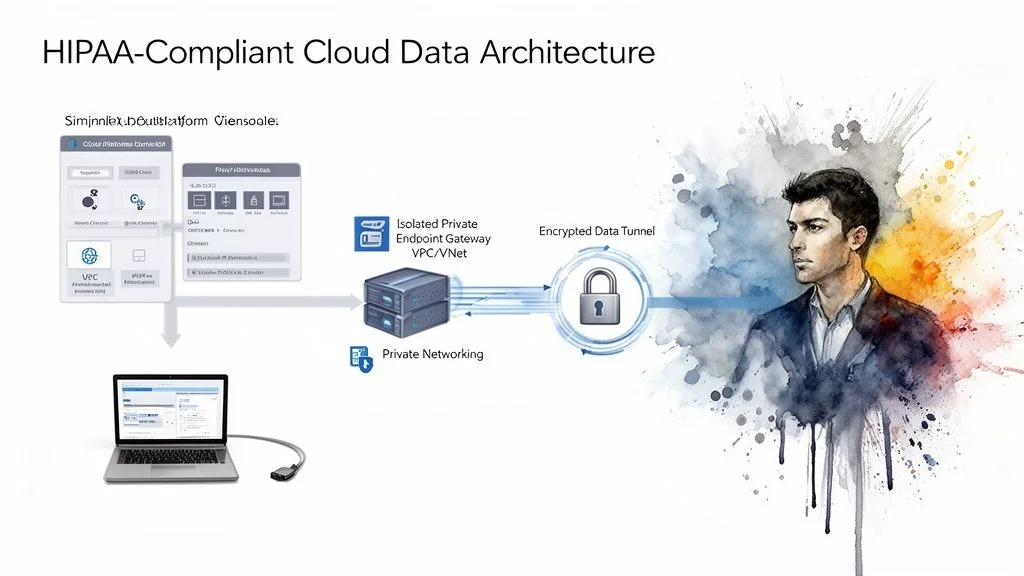

A HIPAA-compliant architecture is secure by design, beginning with absolute network isolation. Any system processing PHI must operate within a digital fortress, completely segregated from the public internet.

This starts with network segmentation. In AWS, this means architecting within a Virtual Private Cloud (VPC). On Azure, it’s a Virtual Network (VNet). The objective is identical: create a private, isolated segment of the cloud to run your data pipelines, platforms like Snowflake or Databricks, and analytics workloads, shielding them from external threats.

Isolate PHI Traffic with Private Endpoints

In a secure healthcare architecture, public IP addresses are a critical vulnerability. All service-to-service communication must route through private endpoints, such as AWS PrivateLink or Azure Private Link. These services create a secure, private tunnel for data traffic, ensuring it never traverses the public internet.

For example, if you use an ingestion tool like Fivetran or Airbyte, its connector must run inside your VPC or VNet and communicate with your data platform over these private channels. Containing the entire data flow within this private network drastically reduces the attack surface.

This is a non-negotiable architectural principle. If an attacker cannot reach your resources from the public internet, an entire class of threat vectors is eliminated. This is the foundation of a HIPAA-compliant data platform.
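A simple way to verify this principle is to check that every address your pipeline components resolve to sits in a private range. The sketch below uses Python's standard `ipaddress` module; the service names and addresses are hypothetical, and a real check would obtain them from DNS resolution inside your VPC or VNet.

```python
import ipaddress


def public_endpoints(resolved: dict[str, str]) -> list[str]:
    """Return the names of services whose resolved address is not private."""
    offenders = []
    for name, addr in resolved.items():
        # is_private is True for RFC 1918 ranges (and other non-routable blocks).
        if not ipaddress.ip_address(addr).is_private:
            offenders.append(name)
    return sorted(offenders)


# Hypothetical resolution results gathered from inside the private network.
resolved = {
    "warehouse": "10.0.12.34",
    "ingestion": "172.16.5.9",
    "legacy_sftp": "8.8.8.8",
}
print(public_endpoints(resolved))  # services that still resolve publicly
```

Any name this function returns is a candidate for migration to a private endpoint before it carries PHI.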

Enforce Security Policy with Infrastructure as Code

Manual configuration of complex network rules, firewalls, and permissions is a direct route to security gaps caused by human error. Infrastructure as Code (IaC), using tools like Terraform, is not merely a best practice; it is an essential control for maintaining HIPAA compliance.

With IaC, you define your entire secure environment—VPCs, subnets, security groups, IAM roles—in declarative code. This approach makes your security posture fundamentally more robust.

- Auditable: Every security configuration is codified and tracked in version control, providing a reviewable change history for a HIPAA audit.

- Consistent: IaC lets development, staging, and production environments share the same security configuration, sharply reducing “configuration drift.”

- Automated: You can build automated guardrails into your CI/CD pipeline to block any deployment that attempts to violate security policy, such as assigning a public IP address to a database containing PHI.
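The automated guardrail described above can be sketched as a small policy check over a Terraform plan. This is a minimal illustration, not a complete policy engine: it assumes the plan has been exported with `terraform show -json` and relies on the `resource_changes` and `change.after` fields of that format plus the `publicly_accessible` attribute of `aws_db_instance`. The `data_class` tag used to mark PHI resources is a hypothetical convention.

```python
def find_public_phi_databases(plan: dict) -> list[str]:
    """Return addresses of PHI-tagged RDS instances that would be publicly accessible."""
    violations = []
    for rc in plan.get("resource_changes", []):
        if rc.get("type") != "aws_db_instance":
            continue
        # "after" is None when the resource is being destroyed.
        after = (rc.get("change") or {}).get("after") or {}
        tags = after.get("tags") or {}
        # "data_class" is an assumed tagging convention, not a Terraform feature.
        if tags.get("data_class") == "phi" and after.get("publicly_accessible"):
            violations.append(rc["address"])
    return violations


# Hypothetical plan fragment; in CI this dict would be parsed from the JSON plan.
plan = {
    "resource_changes": [
        {
            "address": "aws_db_instance.claims",
            "type": "aws_db_instance",
            "change": {"after": {"publicly_accessible": True,
                                 "tags": {"data_class": "phi"}}},
        },
        {
            "address": "aws_db_instance.marketing",
            "type": "aws_db_instance",
            "change": {"after": {"publicly_accessible": True, "tags": {}}},
        },
    ]
}
print(find_public_phi_databases(plan))
```

In a pipeline, a non-empty result would fail the build before the change is applied.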

According to DataEngineeringCompanies.com’s analysis of 86 data engineering firms, teams that adopt IaC from project inception reduce security-related configuration errors by over 60%.

While both Snowflake and Databricks will sign a BAA, you must use their higher-tier editions (e.g., Business Critical for Snowflake) that support these essential private networking and advanced encryption features.

Applying the “Minimum Necessary” Rule: De-Identification and Classification

The HIPAA “minimum necessary” principle dictates that you should only use or expose the minimum amount of Protected Health Information (PHI) required to perform a specific task. For data engineering leaders, this translates to two critical actions: automatically classifying where sensitive data resides and systematically de-identifying it.

First, you must find the PHI. HIPAA defines 18 specific identifiers, from obvious ones like names and Social Security Numbers to more subtle data like device IDs and IP addresses. Manual discovery across terabytes of structured and unstructured data is impossible. You must use automated data classification tools—native to your cloud platform or from a third-party data governance vendor—to scan, identify, and tag sensitive data columns at scale.
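To make the classification step concrete, here is a deliberately simplified sketch of how a scanner might tag columns using column-name hints and value patterns. Commercial and cloud-native classifiers are far more sophisticated; the hint list, patterns, and column names below are illustrative assumptions only.

```python
import re

# Assumed name fragments that suggest an identifier (illustrative, not exhaustive).
NAME_HINTS = {
    "ssn": "ssn",
    "name": "name",
    "dob": "date_of_birth",
    "birth": "date_of_birth",
    "device": "device_id",
}

# Simple value patterns; real scanners use validation and context as well.
VALUE_PATTERNS = {
    "ssn": re.compile(r"^\d{3}-\d{2}-\d{4}$"),
    "email": re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$"),
    "ip_address": re.compile(r"^(?:\d{1,3}\.){3}\d{1,3}$"),
}


def classify_columns(samples: dict[str, list[str]]) -> dict[str, str]:
    """Map column name -> suspected identifier type, based on name or sampled values."""
    tags = {}
    for column, values in samples.items():
        lowered = column.lower()
        hint = next((tag for key, tag in NAME_HINTS.items() if key in lowered), None)
        if hint:
            tags[column] = hint
            continue
        for tag, pattern in VALUE_PATTERNS.items():
            # Tag only when every sampled value matches the pattern.
            if values and all(pattern.match(v) for v in values):
                tags[column] = tag
                break
    return tags


samples = {
    "patient_name": ["Jane Roe", "John Doe"],
    "contact": ["a@example.com", "b@example.org"],
    "last_login_from": ["10.1.2.3", "192.168.0.7"],
    "visit_count": ["3", "12"],
}
print(classify_columns(samples))
```

The resulting tags are what downstream masking and access policies would key on.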

Selecting a De-Identification Strategy

Once PHI is tagged, you must decide how to treat it for analytics, reporting, or machine learning workloads. HIPAA provides two approved methods for de-identification, each with distinct trade-offs.

| Method | Approach | Best For | Implementation |
|---|---|---|---|
| Safe Harbor | A prescriptive, rule-based method where all 18 specific identifiers are removed from the dataset. | General analytics and reporting where zero identifiers are needed. It offers a clear, easily auditable path to compliance. | Scripting the removal of tagged columns or using data masking functions within tools like dbt. The process is straightforward to document and defend. |
| Expert Determination | A qualified statistician formally analyzes the dataset and certifies that the risk of re-identifying an individual is “very small.” | Complex use cases like machine learning where quasi-identifiers (e.g., zip codes) are statistically valuable for model performance. | Requires engaging a statistical expert to conduct a formal analysis and produce a written report on re-identification risk. The process is more complex but unlocks more powerful analytical capabilities. |
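As a rough illustration of the Safe Harbor row above, the sketch below drops direct identifier columns and generalizes three fields the way the Safe Harbor rule describes: dates are reduced to the year, ZIP codes to their first three digits (or `000` for sparsely populated areas), and ages of 90 or more are grouped into a single category. The column names and the contents of `low_population_zip3` are hypothetical placeholders, and the code covers only a subset of the 18 identifiers.

```python
from datetime import date

# Assumed column names for direct identifiers; a real list comes from your PHI tags.
DIRECT_IDENTIFIERS = {"name", "ssn", "email", "phone", "mrn"}


def safe_harbor_row(row: dict, low_population_zip3: set[str]) -> dict:
    """Return a copy of the row with identifiers dropped and quasi-identifiers generalized."""
    out = {k: v for k, v in row.items() if k not in DIRECT_IDENTIFIERS}
    if "zip" in out:
        zip3 = str(out.pop("zip"))[:3]
        # ZIP3 areas with 20,000 or fewer people must be reported as 000.
        out["zip3"] = "000" if zip3 in low_population_zip3 else zip3
    if "admit_date" in out:
        # Keep only the year of any date tied to the individual.
        out["admit_year"] = out.pop("admit_date").year
    if "age" in out and out["age"] >= 90:
        out["age"] = "90+"
    return out


row = {
    "name": "Jane Roe",
    "ssn": "000-00-0000",
    "zip": "03601",
    "admit_date": date(2023, 5, 17),
    "age": 93,
    "diagnosis": "I10",
}
# Placeholder set; derive the real one from current Census population data.
print(safe_harbor_row(row, low_population_zip3={"036"}))
```

Keeping the transformation in one small, testable function also makes it easier to document and defend during an audit.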

A Limited Data Set (LDS) offers a middle ground for research or public health use cases. It allows for the retention of key geographic data (city, state, full zip code) and dates, but it is still PHI rather than de-identified data, and it requires a formal Data Use Agreement (DUA) between the involved parties.

The choice between Safe Harbor and Expert Determination is a strategic one, not merely technical. Safe Harbor provides faster, more straightforward compliance. Expert Determination unlocks the data’s full value for advanced applications. Document the rationale for your decision; auditors will demand it. These practices are the foundation of a robust data governance framework. Our guide on data governance best practices provides more detailed implementation frameworks.

Implementing Ironclad Access Controls and Auditing

HIPAA’s technical safeguards mandate strict access controls. As a data engineering leader, this requires deep implementation of Identity and Access Management (IAM) and the creation of an irrefutable audit trail. Together, these controls enforce the principle of least privilege: users access only the exact PHI necessary for their job function, and nothing more.

In a modern data stack, role-based access control (RBAC) is the primary tool for enforcement. Within platforms like Snowflake or Databricks, broad permissions like SELECT on an entire database are a direct path to a HIPAA violation. You must create granular roles—such as clinical_analyst, billing_auditor, or data_scientist—and grant them precise permissions on specific tables, views, or even at the column level.
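The idea of granular, column-level grants can be modeled in a few lines. This is a conceptual sketch only: in practice the warehouse itself enforces these permissions, and the table and column names here are hypothetical. The role names come from the examples above.

```python
# Role -> table -> set of columns the role may read (assumed example grants).
ROLE_GRANTS: dict[str, dict[str, set[str]]] = {
    "clinical_analyst": {
        "encounters": {"encounter_id", "diagnosis_code", "admit_year"},
    },
    "billing_auditor": {
        "claims": {"claim_id", "amount", "payer"},
    },
}


def authorize(role: str, table: str, columns: list[str]) -> bool:
    """Allow a read only if every requested column is explicitly granted."""
    allowed = ROLE_GRANTS.get(role, {}).get(table, set())
    # Deny by default: unknown roles, unknown tables, and empty requests all fail.
    return bool(columns) and set(columns) <= allowed


print(authorize("clinical_analyst", "encounters", ["diagnosis_code"]))
print(authorize("billing_auditor", "claims", ["claim_id", "patient_name"]))
```

The deny-by-default shape is the important part: access exists only where a grant names it.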

Building an Immutable Audit Trail

Strong access controls are meaningless without a complete, tamper-proof log of all activity. Your data platform must capture every interaction with PHI. This is a foundational requirement for compliance.

Your audit logs must definitively answer these questions for any given event:

- Who accessed the data? (User ID)

- What data was accessed? (Table, row, file)

- When did the access occur? (Timestamp)

- What action was performed? (Read, write, update, delete)

Tools like AWS CloudTrail and Azure Monitor, along with native logging in Snowflake and Databricks, are built for this purpose. The critical step is to ship these logs to a separate, immutable storage location, such as a locked-down S3 bucket with object versioning and S3 Object Lock in compliance mode enabled. With a retention period set, this configuration prevents anyone, including a malicious actor with admin credentials, from altering or erasing access records until that period expires.
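The four audit questions map naturally onto a log record. The sketch below shows one possible record shape and adds hash chaining, a complementary tamper-evidence technique that is not described in this guide and does not replace immutable storage. The field names and resource identifiers are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash for the first record in the chain


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_event(log: list[dict], user_id: str, resource: str,
                 action: str, when: datetime) -> dict:
    """Append a who/what/when/action record linked to the previous record's hash."""
    body = {
        "user_id": user_id,              # who
        "resource": resource,            # what
        "timestamp": when.isoformat(),   # when
        "action": action,                # read, write, update, delete
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    event = {**body, "hash": _digest(body)}
    log.append(event)
    return event


def verify_chain(log: list[dict]) -> bool:
    """Return False if any record was edited, removed, or reordered."""
    prev_hash = GENESIS
    for event in log:
        body = {k: v for k, v in event.items() if k != "hash"}
        if event["prev_hash"] != prev_hash or event["hash"] != _digest(body):
            return False
        prev_hash = event["hash"]
    return True


log: list[dict] = []
now = datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc)
append_event(log, "u123", "claims.patient_id", "read", now)
append_event(log, "u456", "encounters", "update", now)
print(verify_chain(log))
```

Editing any stored field after the fact changes its digest, so a later verification pass fails.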

The sheer volume of breaches highlights a crisis in accountability. From late 2009 to Q1 2024, the HHS OCR portal logged over 6,000 major healthcare data breaches, yet hundreds of investigations remain unresolved. This regulatory backlog is a clear warning to every CTO: you must build your own resilient, auditable platforms because you cannot rely on timely external oversight to identify vulnerabilities. These healthcare data breach trends underscore the scope of the problem.

The Mandatory Access Review Checklist

HIPAA requires periodic access reviews to ensure permissions remain appropriate. Setting permissions is only the first step; you must regularly verify that they are still correct and necessary as roles and responsibilities evolve.

Conduct these reviews quarterly. Here is an actionable checklist for that process:

- Generate a Comprehensive Access Report: Pull a complete list of every user and their assigned roles and permissions from Snowflake, Databricks, and your cloud provider’s IAM.

- Verify Active Employment: Cross-reference the user list with HR records. Immediately revoke access for all former employees. There are no exceptions.

- Review Role Assignments: With input from team managers, verify that each user’s assigned role still aligns with their current job duties.

- Audit Privileged Access: Scrutinize every account with administrative rights. Document the business justification for each privileged account.

- Document the Review: Record the date of the review, the participants, and a log of all changes made. This documentation is your proof of due diligence for an audit.
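Parts of this checklist lend themselves to automation. The following sketch cross-references an access report against HR records and flags privileged accounts that lack a documented justification. The record fields (`user_id`, `is_admin`, `justification`) are hypothetical; real reports from Snowflake, Databricks, or cloud IAM would need to be mapped into this shape first.

```python
def review_access(users: list[dict], active_employees: set[str]) -> dict[str, list[str]]:
    """Flag accounts to revoke and privileged accounts missing a justification."""
    findings: dict[str, list[str]] = {"revoke": [], "needs_justification": []}
    for user in users:
        if user["user_id"] not in active_employees:
            # No matching active HR record: access should be revoked.
            findings["revoke"].append(user["user_id"])
        elif user.get("is_admin") and not user.get("justification"):
            findings["needs_justification"].append(user["user_id"])
    return findings


# Hypothetical access report and HR roster.
users = [
    {"user_id": "alice", "is_admin": True, "justification": "platform owner"},
    {"user_id": "bob", "is_admin": True, "justification": ""},
    {"user_id": "carol", "is_admin": False},
    {"user_id": "dave", "is_admin": False},
]
print(review_access(users, active_employees={"alice", "bob", "carol"}))
```

Storing each run's findings alongside the review date gives you the documentation trail the last checklist item calls for.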

How to Select and Manage Compliant Data Engineering Consulting Partners

When you engage a data engineering consultancy, you are extending them access to your most sensitive asset. Their security posture becomes a direct extension of your own. Any vulnerability on their part is a direct threat to your organization’s HIPAA compliance. A superficial vetting process is a recipe for a security incident.

DataEngineeringCompanies.com’s analysis of 86 data engineering firms revealed that while many claim healthcare expertise, few can provide concrete evidence of their HIPAA-compliant practices. Your evaluation must cut through marketing claims to verify their actual security posture.

The first, non-negotiable requirement is their immediate willingness to sign a Business Associate Agreement (BAA). Any hesitation, attempt to dilute its terms, or refusal to sign is the clearest possible red flag. Terminate the discussion immediately.

The Vendor Vetting Checklist

When evaluating potential data engineering partners or providers of adjacent services, such as HIPAA-compliant transcription, your RFP and interview process must be rigorous. You must get clear, confident answers to these direct questions:

- BAA Execution: Do you have a standard BAA ready for review, and are your legal and security teams prepared to execute it promptly?

- HIPAA Training Records: Can you provide evidence of regular, mandatory HIPAA security training for every employee who could potentially access our data?

- Secure Development Lifecycle (SDLC): How is security integrated into your engineering process? Demand specifics on code reviews, vulnerability scanning, and secure deployment pipelines.

- Incident Response Protocol: What is your documented incident response plan? Walk us through how you would coordinate with our team in the event of a suspected breach.

- Proven Healthcare Projects: Can you provide anonymized but detailed case studies of HIPAA-compliant data engineering projects you have successfully delivered? What was the architecture?

A partner who answers these questions directly and confidently demonstrates a mature security culture. A partner who provides generic responses is a liability you cannot afford.

Your Action Plan for a Compliant Data Future

HIPAA compliance is not a project to be completed; it is an operational discipline that must be integrated into your daily engineering practices. This roadmap provides a structured path from planning to execution.

The stakes are enormous. Breaches are not just technical failures; they are financial catastrophes. The timeline is just as damaging: on average, it takes 277 days to identify and contain a breach. Healthcare cybersecurity statistics like these represent real-world operational disruptions that make robust, compliant data engineering non-negotiable.

This 30-60-90 day plan provides a structured approach for engineering leaders to build a defensible and scalable data infrastructure.

30-60-90 Day HIPAA Compliance Action Plan

| Phase | Key Actions | Primary Goal |
|---|---|---|
| Days 1-30: Foundation & Assessment | • Inventory all systems that store or process PHI. • Map all data flows from ingestion to analytics. • Conduct a formal risk assessment to identify vulnerabilities. | Achieve a complete and accurate understanding of your current risk posture. |
| Days 31-60: Prioritized Remediation | • Implement granular Role-Based Access Control (RBAC) across all platforms. • Enforce end-to-end encryption for data in transit and at rest. • Begin remediating the highest-priority risks identified in the assessment. | Close the most critical security gaps and establish foundational technical controls. |
| Days 61-90: Operationalize & Automate | • Implement automated, continuous logging and monitoring for all PHI access. • Formalize and conduct a tabletop exercise of your incident response plan. • Finalize all necessary documentation for policies, procedures, and architectural decisions. | Transition from a reactive to a proactive, sustainable, and auditable compliance program. |

This framework is designed to build momentum and demonstrate measurable progress, creating a solid foundation for a long-term, defensible compliance program.

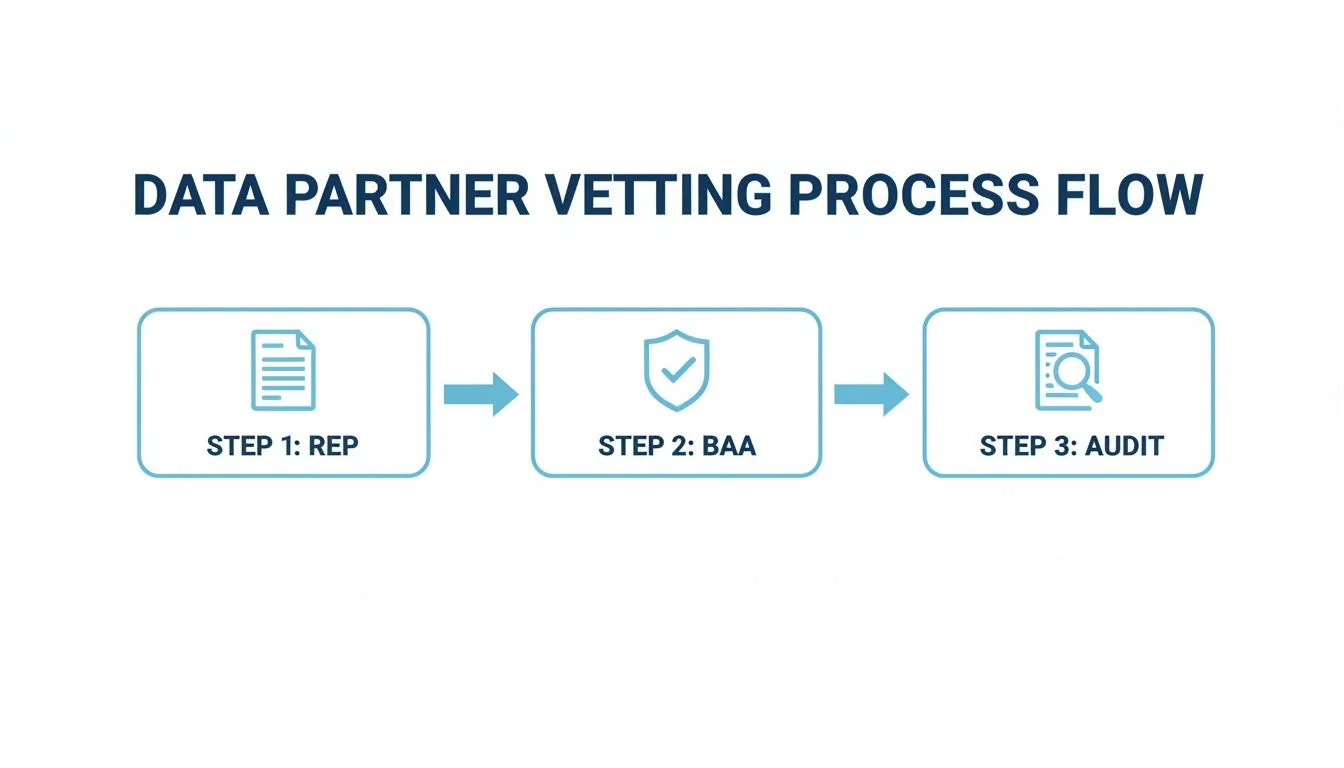

Vetting Your Data Partners

Your compliance strategy is incomplete without a rigorous process for managing third-party risk. Every vendor or partner handling PHI must adhere to the same security standards you do. A simple, repeatable vetting process is essential.

This process—moving from a Request for Proposal (RFP) to a signed Business Associate Agreement (BAA) and then to routine audits—is the foundation of secure vendor management. The BAA is a legal necessity, but it is the ongoing audits and diligent relationship management that provide true protection.

Answering Your Toughest HIPAA Data Questions

When building a healthcare data platform, several key questions consistently arise for engineering leaders. Here are direct answers to the most common ones.

Which Cloud Is Best for HIPAA Compliance: AWS, Azure, or GCP?

This question is a false choice. There is no such thing as a “HIPAA-compliant cloud.” Compliance is not determined by the provider but by your architecture and configuration of their services.

All three major cloud providers will sign a Business Associate Agreement (BAA) and offer the necessary building blocks for security, such as private networking, IAM, and robust encryption. The deciding factor is your team’s existing expertise and the specific managed services you intend to use. If your team has deep expertise in BigQuery, choosing GCP is the logical path. The critical action is to commit to using only their HIPAA-eligible services for any PHI workload and configuring them correctly within your secure network architecture.

Do I Need a BAA with Snowflake or Databricks?

Yes, absolutely. Any vendor that stores, processes, or transmits PHI is defined as a Business Associate under HIPAA. This includes your core data platforms like Snowflake and Databricks.

A Business Associate Agreement (BAA) is a non-negotiable legal requirement. If a potential vendor will not sign a BAA, you cannot use their service for any workload involving protected health information.

You must also subscribe to the correct service tier. Both Snowflake (via its Business Critical edition or higher) and Databricks offer BAAs, but you must be on a plan that supports their required HIPAA-compliant security features, such as private networking.

How Does De-Identification Impact Analytics?

De-identification is a critical tool for reducing compliance scope, but it creates a direct trade-off with analytical depth. Your choice between the two primary methods has significant consequences for your data science and analytics teams.

- Safe Harbor: This prescriptive method requires removing all 18 defined patient identifiers. It is the most direct route to compliance but can severely limit the ability to perform longitudinal analysis or location-based modeling.

- Expert Determination: This method provides more flexibility. A statistician certifies that the risk of re-identification is minimal, which allows for the retention of valuable quasi-identifiers. This enables richer machine learning models but requires the overhead of a formal statistical validation process.
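To get a feel for the trade-off between retained quasi-identifiers and re-identification risk, teams sometimes compute a k-anonymity style measure: the size of the smallest group of records sharing the same quasi-identifier values. This is one common heuristic, not the method HIPAA prescribes, and it is not a substitute for a formal Expert Determination. The column names below are hypothetical.

```python
from collections import Counter


def smallest_group(rows: list[dict], quasi_identifiers: list[str]) -> int:
    """Return the size of the smallest group sharing the same quasi-identifier values."""
    counts = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    return min(counts.values()) if counts else 0


rows = [
    {"zip3": "021", "birth_year": 1980, "birth_decade": "1980s"},
    {"zip3": "021", "birth_year": 1981, "birth_decade": "1980s"},
    {"zip3": "100", "birth_year": 1975, "birth_decade": "1970s"},
    {"zip3": "100", "birth_year": 1975, "birth_decade": "1970s"},
]

# Exact birth year leaves some individuals unique within their ZIP3 area.
print(smallest_group(rows, ["zip3", "birth_year"]))
# Generalizing to decade makes every record share its values with another record.
print(smallest_group(rows, ["zip3", "birth_decade"]))
```

A smallest group of 1 means at least one person is unique on those fields; generalizing a field raises the minimum at the cost of analytical detail.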

The decision is a strategic balance between your organization’s analytical ambitions and its tolerance for risk and operational complexity.

Navigating the technical and legal complexities of HIPAA is a significant challenge for any engineering organization. Choosing the right consulting partner is critical. DataEngineeringCompanies.com provides data-driven rankings of top firms, allowing you to select a partner with demonstrated expertise in building secure, compliant healthcare data platforms. Find your expert partner today.

Data-driven market researcher with 20+ years in market research and 10+ years helping software agencies and IT organizations make evidence-based decisions. Former market research analyst at Aviva Investors and Credit Suisse.

Previously: Aviva Investors · Credit Suisse · Brainhub · 100Signals
